Your head of customer success pings you: "Customer A is getting weird outputs in their answers. Not sure how common it is, but they've mentioned it twice this week. Customer B also described something similar a few weeks back."

You know this situation. It's a special kind of hell reserved for LLM-based products: every quality issue arrives with perfect ambiguity. Is this a quick prompt tweak or a fundamental architecture problem? Should you drop everything to reproduce it, or add it to the backlog? Will fixing it break what already works well for your other customers?

Most teams handle this in one of two ways:

- Prioritize speed, test on a few synthetic examples, and deploy targeted patches

- Thoroughly gather examples, then build an eval and a comprehensive fix before shipping

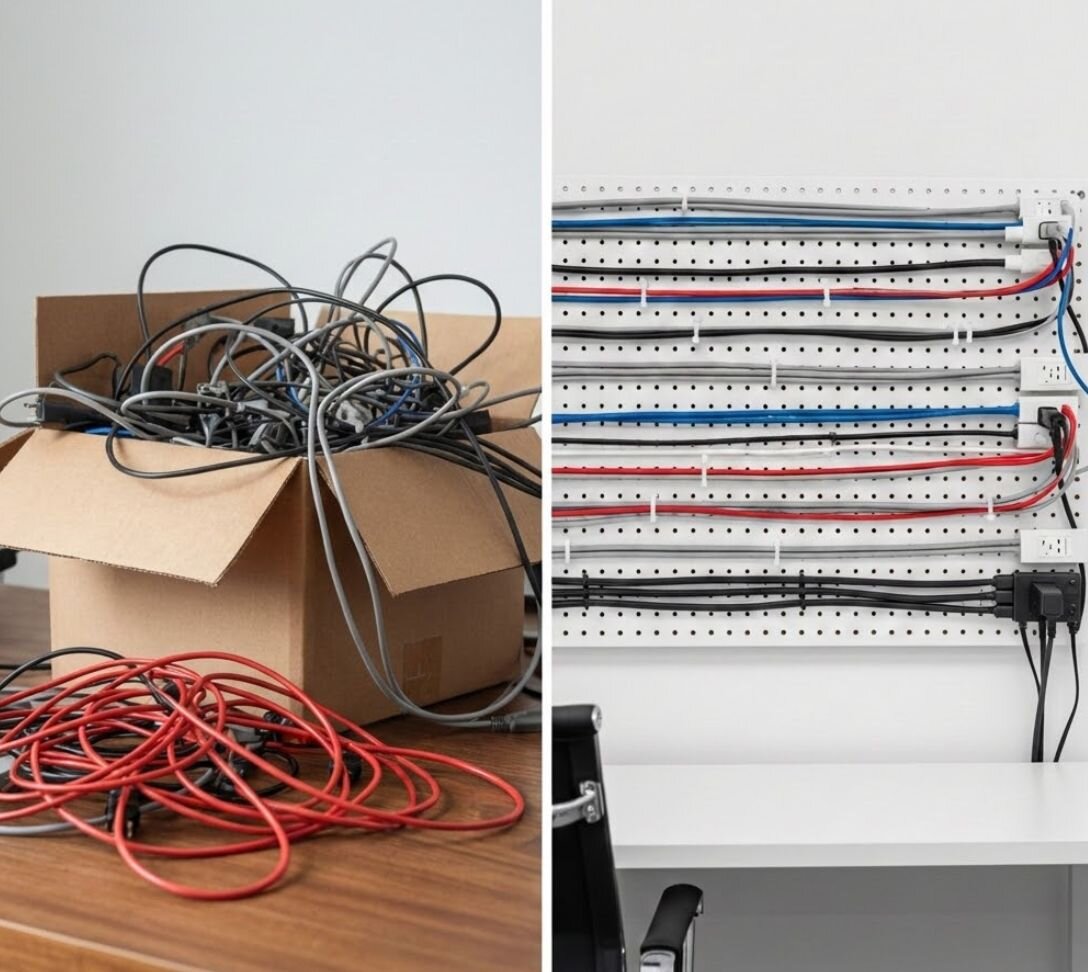

Both approaches have drawbacks, and splitting the difference between them misses the point. The real problem isn't choosing between fast and thorough. It's that you're solving problems before you've properly defined them.

Here's the key point: you should build the eval the moment you identify a quality issue, not when you decide to fix it.

Why we get trapped

Most teams treat evaluation creation and problem solving as part of a single process. Customer reports an issue → gather examples → build a comprehensive test suite → implement fix → ship when you hit 95% accuracy.

This bundles two activities that should be completely separated:

- Defining what's broken (evaluation)

- Fixing what's broken (solution)

When you work on them together while operating under customer pressure, you get stuck choosing between thorough evaluation (slow) and quick fixes (risky).

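The separation principle

If you build the eval the moment you identify the issue, then by the time you decide to fix it you already have: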

- A clear definition of the failure mode

- Baseline metrics on how often it occurs

- Representative test cases ready to validate against

- Historical context on whether it's getting worse
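To make those four items concrete, here is a minimal Python sketch of a per-failure-mode record. The `FailureMode` class and its field names are hypothetical, chosen for illustration rather than taken from any eval framework; the idea is simply that the definition, test cases, and rate history live in one place, so the baseline and the trend can be read straight off the data.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class FailureMode:
    """Hypothetical record bundling what you want in hand before fixing anything."""

    name: str                                      # short, specific, team-wide label
    definition: str                                # what counts as a failure
    test_cases: list[str] = field(default_factory=list)       # representative bad outputs
    history: dict[date, float] = field(default_factory=dict)  # run date -> failure rate

    def record_rate(self, day: date, failures: int, total: int) -> None:
        """Store the failure rate observed by one eval run."""
        if total <= 0:
            raise ValueError("total must be positive")
        self.history[day] = failures / total

    def baseline(self) -> float | None:
        """Earliest recorded failure rate, or None if the eval has never run."""
        if not self.history:
            return None
        return self.history[min(self.history)]

    def is_worsening(self) -> bool:
        """True if the most recent rate is higher than the baseline."""
        if len(self.history) < 2:
            return False
        return self.history[max(self.history)] > self.history[min(self.history)]
```

In practice this could just as well be a row in a spreadsheet or a table behind your dashboard; the structure matters more than the storage.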

You're not scrambling to define the problem while customers wait for solutions. You're coming to stakeholders with clear data on the issue.

A real example

While working on an AI RFP response platform, we ran into a failure mode where the model would break character on longer, multi-part questions. This was a serious problem for our product and our customers, since RFPs were core to our customers’ sales efforts. The failures were far from subtle, which was both a blessing and a curse.

A typical instance looked something like this:

"However, the context provided makes no direct mention of SOC2 compliance."

We named and defined the issue quickly as a "4th wall break": any time the model references itself or system internals like context, instructions, or internal limitations instead of behaving like a proposal writer.

We collected customer examples and spent a short time searching logs for additional instances. While investigating, we naturally formed hypotheses about root causes and fixes, but kept our focus on the examples.

Once we were confident we had enough examples, we built the eval. Luckily, a simple regex search could catch over 95% of instances of this failure mode. We built a pass/fail eval that searched for a list of banned phrases, then ran it once on our production data to establish a baseline.
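A banned-phrase check like this fits in a few lines of Python. The phrase list below is purely illustrative, extrapolated from the single example quoted above; the article doesn't give the team's actual list. The function names are likewise hypothetical.

```python
import re

# Illustrative phrases that suggest the model is talking about its own inputs.
# These are assumptions for the sketch, not the list used in production.
BANNED_PHRASES = [
    r"the context provided",
    r"the provided context",
    r"based on the (?:given |provided )?context",
    r"my instructions",
    r"as an ai",
]

# One case-insensitive pattern; \b keeps matches on word boundaries.
FOURTH_WALL = re.compile(r"\b(?:" + "|".join(BANNED_PHRASES) + r")\b", re.IGNORECASE)


def passes_fourth_wall_check(answer: str) -> bool:
    """Binary eval: True (pass) if no banned phrase appears in the answer."""
    return FOURTH_WALL.search(answer) is None


def failure_rate(answers: list[str]) -> float:
    """Share of answers that fail the check; run once over production data for a baseline."""
    if not answers:
        return 0.0
    failures = sum(not passes_fourth_wall_check(a) for a in answers)
    return failures / len(answers)
```

A regex matches wording, not meaning, so high coverage like the 95% reported here depends on the failure mode having a recognizable vocabulary. Many failure modes won't, which is where a rule or an LLM judge comes in.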

Only after this did we start trying to fix the issue. By the time we touched any prompts, we already knew exactly what we were solving for and had a way to prove it worked.

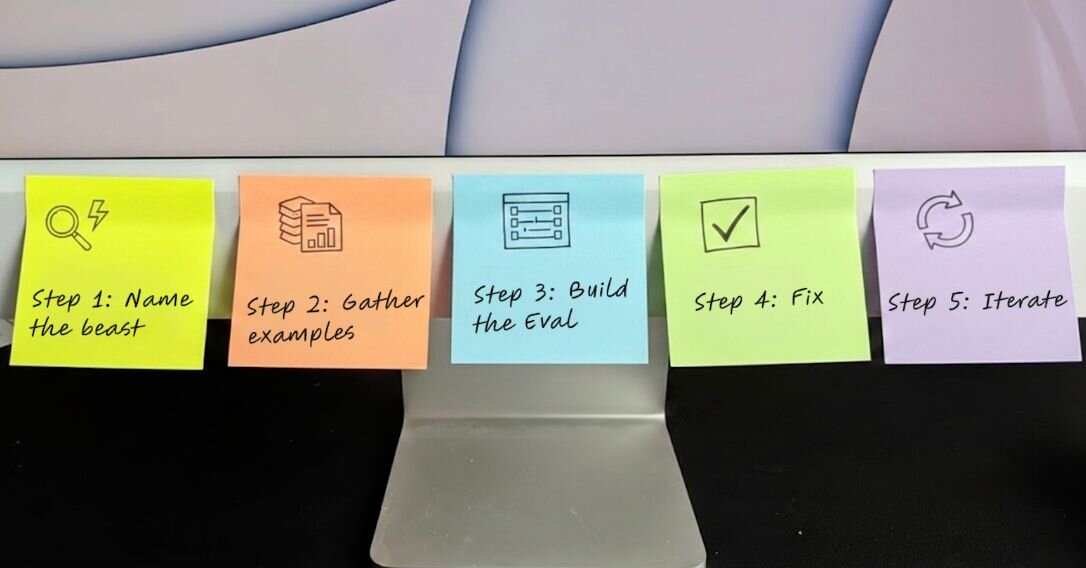

Using the immediate eval method

Step 1: Name the beast

A problem must be identified before it can be solved, and it must be named before it can be prioritized. Issues start as a vague complaint like "it remembers my company name wrong". Once they occur often enough to form a pattern, give them a name: something specific and descriptive that your team can rally around.

Investigate just enough to get specific, for example: "Incorrect company name when RAG pulls from previous answers." If you later find the name no longer fits, change it. A well-named problem organizes your team, focuses debugging efforts, and makes prioritization conversations concrete instead of abstract.

Step 2: Gather examples (20 minutes max)

Start with what you have: collect every example customers provided, then spend 20 minutes searching your logs for other unreported instances of the error. The goal here is to build a useful dataset of bad outputs, so when you’re ready to start fixing, you have something to directly test against.
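If your logs can be read as JSON Lines, a quick keyword sweep is often enough to surface unreported instances within the time box. This sketch assumes each log line is a JSON object with hypothetical `id` and `output` fields, and that you seed the keywords from the customer examples you already have; adapt both to your own logging.

```python
import json
from typing import Iterable, Iterator


def find_candidates(
    log_lines: Iterable[str],
    keywords: list[str],
    max_hits: int = 200,
) -> Iterator[dict]:
    """Yield logged records whose output mentions any keyword (case-insensitive),
    stopping after max_hits so the sweep stays small enough to review by hand."""
    lowered = [k.lower() for k in keywords]
    hits = 0
    for line in log_lines:
        if hits >= max_hits:
            break
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed lines instead of aborting the sweep
        if not isinstance(record, dict):
            continue
        text = str(record.get("output", "")).lower()
        if any(k in text for k in lowered):
            hits += 1
            yield record
```

Every hit is a candidate, not a confirmed failure: read them and keep only the real instances in your example set.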

Step 3: Build the eval

You can describe the error, and you have a set of examples showing where it comes up. Now create a simple pass/fail eval. This could be a programmatic rule, a regex pattern, or an LLM-as-judge, but keep it binary. For now, speed matters more than precision; you can always refine it later.
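Whatever the mechanism, each eval can share one shape: output text in, pass or fail out. The sketch below shows a programmatic rule for the company-name example from Step 1 and an LLM-as-judge wrapper. All names are hypothetical, and `ask_model` stands in for whatever client call you already use to send a prompt and get text back; no specific LLM API is assumed.

```python
from typing import Callable

# Whatever the mechanism (rule, regex, or LLM judge), each eval maps an output to
# pass (True) or fail (False).
BinaryEval = Callable[[str], bool]


def company_name_rule(expected: str, other_customers: list[str]) -> BinaryEval:
    """Programmatic rule: fail if the answer names a different customer than the one
    the RFP is for (e.g., a name leaked in from a previous answer via retrieval)."""
    others = [n.lower() for n in other_customers if n.lower() != expected.lower()]

    def check(answer: str) -> bool:
        text = answer.lower()
        return not any(name in text for name in others)

    return check


def llm_judge(ask_model: Callable[[str], str], requirement: str) -> BinaryEval:
    """LLM-as-judge forced to a binary verdict. Anything other than PASS counts as fail."""

    def check(answer: str) -> bool:
        prompt = (
            f"Requirement: {requirement}\n\n"
            f"Answer:\n{answer}\n\n"
            "Reply PASS if the answer meets the requirement, otherwise FAIL. "
            "Reply with one word."
        )
        return ask_model(prompt).strip().upper().startswith("PASS")

    return check
```

Treating an unparseable judge reply as a failure is a deliberately conservative choice here: it can overcount, but it won't silently hide problems.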

With your eval written, run it against your historical production data to get a sense of how widespread the problem is. This is your decision gate: fix it now, or track it and wait?
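When you only run the eval on a sample of production outputs, it helps to put an interval around the baseline rate before deciding. This sketch uses the Wilson score interval for a binomial proportion; that method is our choice, not something the article prescribes. `results` is assumed to be the list of pass/fail booleans produced by any binary eval.

```python
import math


def baseline_failure_rate(results: list[bool], z: float = 1.96) -> tuple[float, float, float]:
    """Return (rate, low, high): the observed failure rate and an approximate 95%
    Wilson score interval. `results` holds True for pass, False for fail."""
    n = len(results)
    if n == 0:
        raise ValueError("need at least one result")
    failures = sum(1 for passed in results if not passed)
    p = failures / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    return p, max(0.0, center - half), min(1.0, center + half)
```

Unlike the simple normal approximation, the Wilson interval stays sensible when failures are rare or absent, which is common for a freshly named failure mode.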

Step 4: Fix (when ready)

If the fix needs to go out immediately, your eval has already generated a dataset to test against. You'll move quickly because you're not building the eval and the fix at the same time.

If an immediate fix isn't required, add the eval to your quality dashboard and let it collect data. You now have a named, measurable quality issue that you can track over time and prioritize systematically. When business priority, engineering capacity, and customer impact align, you'll be ready to act, with weeks or months of data and a proven framework that catches the issue.
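For the dashboard, one simple series is the failure rate per week. This sketch assumes eval results are stored as hypothetical `(day, passed)` pairs and buckets them by ISO week using the standard library's `date.isocalendar()`.

```python
from collections import defaultdict
from datetime import date


def weekly_failure_rates(results: list[tuple[date, bool]]) -> dict[tuple[int, int], float]:
    """Group (day, passed) eval results by ISO week and return the failure rate per
    week, keyed by (iso_year, iso_week) in chronological order."""
    totals: dict[tuple[int, int], list[int]] = defaultdict(lambda: [0, 0])  # [failures, total]
    for day, passed in results:
        iso_year, iso_week, _ = day.isocalendar()
        bucket = totals[(iso_year, iso_week)]
        bucket[0] += 0 if passed else 1
        bucket[1] += 1
    return {week: f / n for week, (f, n) in sorted(totals.items())}
```

Keying on the ISO year as well as the week avoids merging the first days of January into the previous year's week 52 or 53.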

Step 5: Iterate

Your first eval is your smallest test, not your final one. As you learn about new edge cases, different failure conditions, and further customer feedback, you'll refine it. The goal of those first 20 minutes isn't perfect coverage; it's to ship the smallest useful check.
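One way to decide where to refine is to hand-label a few dozen outputs and compare them with the eval's verdicts. Missed failures (low recall) point to new phrasings or conditions to cover; false alarms (low precision) point to an over-broad rule. Framing this as precision and recall is our choice, and the function name below is hypothetical.

```python
from typing import Callable


def eval_agreement(
    check: Callable[[str], bool], labeled: list[tuple[str, bool]]
) -> dict[str, float]:
    """Compare a binary eval (True = pass) with hand labels, where each item is
    (output, is_failure). Returns the eval's precision and recall at catching failures."""
    tp = fp = fn = 0
    for text, is_failure in labeled:
        flagged = not check(text)  # the eval says "fail"
        if flagged and is_failure:
            tp += 1
        elif flagged and not is_failure:
            fp += 1
        elif not flagged and is_failure:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return {"precision": precision, "recall": recall}
```

For example, a phrase rule on "context provided" would flag a legitimate sentence about "the context provided by the client" (a false alarm) and miss "the documents I was given do not say" (a missed failure). Each disagreement is a concrete next refinement.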

Getting started

Don't wait for your next crisis. Look at your last three customer quality complaints. Can you name each failure mode specifically? Do you have simple pass/fail checks for them? Do you know their baseline occurrence rates?

If not, spend 20 minutes on each one right now. Build the evals before you need them.

Three months of this practice will give you a comprehensive quality dashboard built from real customer pain points, not theoretical edge cases. When leadership asks "what should we prioritize?" you have data, not opinions.

Separate the concerns. Build evals immediately. Fix when ready.

Your future self, and your customers, will thank you.