We all know that pain. Three months of engineering and tickets followed by nothing but silence. The analytics of a new feature or product are far from kind: users don’t need it, don’t understand it, and frankly, don’t care. The after-the-fact analysis boils down to “we should’ve validated this earlier.”

But until recently, validation was an expensive and time-consuming process of research, prototyping, and “minimum viable products.” What I’ve observed over eight years in fintech, edtech, and healthcare startups is that the traditional minimum viable product (MVP) strategy forces you to build before you really know. You’ve committed your engineers’ time before you’ve even answered whether anyone cares about what you’re making.

I call this painful paradox the “ticket problem.” You need validation before you build, but in order to get validation, you need to build something. Luckily for us, AI is dissolving this paradox.

AI-driven validation (with data at its core) marks a radical departure from the way product teams have traditionally handled new ideas. Teams can finally validate key hypotheses, generate mock-ups, and capture useful feedback without writing a single line of production code.

The AI validation toolkit

What we can accomplish with AI today would’ve required an entire department just five years ago. LLMs allow product teams to take a vague gut feeling about a product and, in minutes, turn it into something structured and testable. Instead of spending hours in whiteboard meetings debating what the team is actually betting on, they can ask an AI to examine the idea and identify the three riskiest assumptions. The prompt can be as straightforward as: “I’d like to add a social share feature to my financial planning application. What are my three riskiest assumptions regarding user behaviour?”

The testing of value propositions can be made dramatically more efficient with generative AI. Instead of agonising over what makes the perfect headline or value prop, teams can quickly come up with twenty different versions in twenty minutes. The potential value prop, “Take control of your financial future,” can be pitted against “Join 50,000 people saving smarter” without ever having to decide what you’re going to build.

Visual prototyping has seen perhaps the strongest evolution. AI can produce high-fidelity prototypes from text descriptions. Prompts like “Please provide a mockup of a mobile banking page featuring immediate peer-to-peer transactions, focusing on security and speed” yield several design options at once. Designs for A/B tests with different colour schemes, icons, or layouts can be created in less time than it takes to schedule a design kick-off.

But perhaps most interesting, AI lets teams simulate user feedback. Although this is not a substitute for real users, it can significantly sharpen a team’s questions before they go in front of actual participants. Teams can build personas by uploading their user research and then having AI simulate those users’ motivations, pain points, and context. Such personas push teams to think harder about who they are making products for.

A framework for validation without tickets

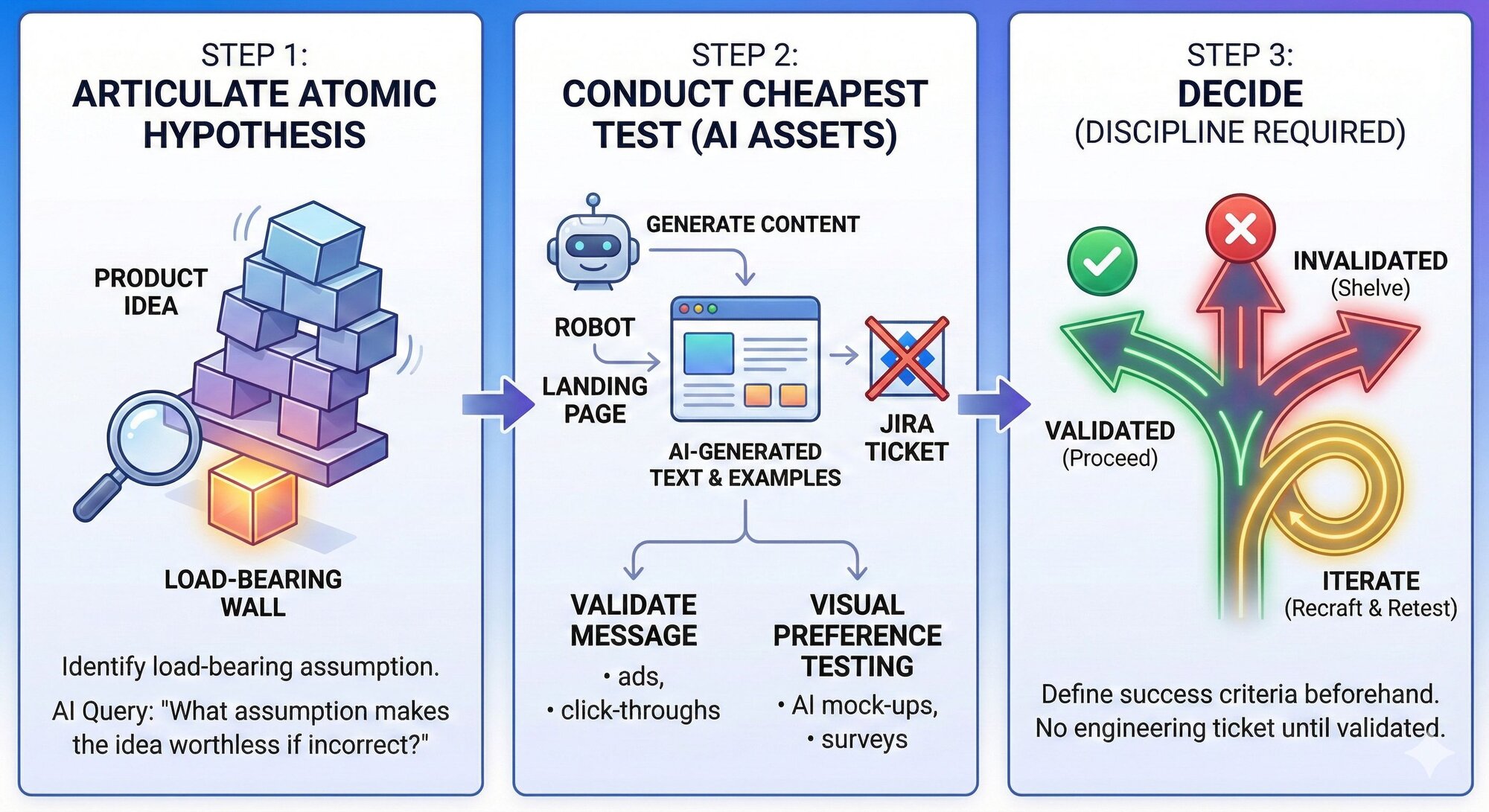

A data-first approach is most effective when broken down into three rigorous steps.

Step one

Articulate what I call “the atomic hypothesis”: the single riskiest assumption behind a product idea. Every new product idea rests on many hypotheses, but one of them is the load-bearing wall. If it’s incorrect, all the others are irrelevant.

AI can help identify it: describe the product idea and then ask, “What’s the one assumption that, if incorrect, makes this whole concept worthless?”

Take an AI tool that writes and schedules social media posts for small businesses. Its atomic hypothesis might be: “Small business owners trust AI-generated content enough to post it publicly without significant editing.” This is quite specific. It’s not “Small business owners need scheduling services,” which is likely true but not particularly interesting, or “AI can produce good enough content,” which has already been confirmed and is not necessarily specific to what you’re offering. It sits where your unique value proposition meets actual user behaviour, and it is measurable: you can observe whether business owners edit their AI-generated content slightly, heavily, or not at all.

Step two

The second step is to conduct the cheapest test of this hypothesis possible with AI-generated assets. This is where the “no ticket” principle starts to kick in. If your hypothesis is about trust in AI-generated assets, you’re not going to need to build out the scheduling system, the payment processing, and so on. All you need to figure out is if folks will be interested in AI-generated social media posts.

Build a simple landing page with AI-generated copy describing the feature. Use AI to generate some example social media posts, then run some very cheap ads to small business owners. See whether they click through and, importantly, whether they stay interested in the concept once they see the examples.
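If the ad test runs two message variants side by side, one way to check whether a difference in click-through is more than noise is a two-proportion z-test. The sketch below is only an illustration under stated assumptions: the function name, the variant labels, and every count in the usage lines are hypothetical, and a small ad budget may not produce enough traffic for the normal approximation to be reliable.

```python
import math


def two_proportion_z_test(clicks_a: int, views_a: int,
                          clicks_b: int, views_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in click-through
    rate between variant A and variant B, using a pooled standard error."""
    if views_a <= 0 or views_b <= 0:
        raise ValueError("each variant needs at least one view")

    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b

    # Pooled rate under the null hypothesis that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if std_err == 0:
        # No clicks at all (or all clicks): the data cannot separate the variants.
        return 0.0, 1.0

    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts, for illustration only.
z, p = two_proportion_z_test(clicks_a=20, views_a=1000, clicks_b=60, views_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the two messages genuinely differ in pull; a large one suggests running the test longer or treating the variants as equivalent.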

I’ve seen teams get hung up on designing buttons when they’ve never validated that anyone cares about what’s behind the button. AI allows you to validate the message (does this value statement resonate?) before you spend time and effort on the medium itself. A value statement test may cost £200 and take three days. That is trivial compared with spending two weeks on design and four weeks on engineering, only to realise that users don’t really want it.

Visual preference testing follows a similar pattern. Using AI-created mock-ups of various design approaches, you can run quick preference tests with small business owners through mini focus groups and basic online survey tools. Present them with three approaches to reviewing AI-created content before it is posted, perhaps side-by-side comparison, inline editing, or swipe-and-approve, and plan your next move based on their preferences.

Step three

The third step is to decide whether you’ve validated or invalidated your hypothesis, or whether you need to iterate on it. This takes discipline, and that kind of discipline can’t be supplied by AI. Before you run your test, define your success criteria. What does it mean if fewer than 15% of small business owners who visit your concept landing page give you their email address?

What if 60% give you their email address, but then in subsequent feedback questionnaires, they raise worries about brand voice inconsistency?

The “no ticket” rule applies here with particular force. If the data doesn’t support your hypothesis, you either shelve the concept or, if you really love it, dramatically rework it before ever creating an engineering ticket.
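Writing the success criteria down as code before the test runs is one way to keep yourself honest. The sketch below is a minimal illustration, not a prescribed method: the 15% floor comes from the example above, while the 30% “build” threshold, the function names, and the use of a 95% Wilson score interval to account for small samples are all assumptions made for the example.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a conversion rate."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return centre - half_width, centre + half_width


def decide(signups: int, visitors: int,
           kill_below: float = 0.15,    # from the 15% example in the text
           build_above: float = 0.30) -> str:  # hypothetical threshold
    """Apply thresholds that were fixed before the test was run."""
    low, high = wilson_interval(signups, visitors)
    if high < kill_below:
        return "invalidated"   # even the optimistic estimate misses the floor
    if low > build_above:
        return "validated"     # even the pessimistic estimate clears the bar
    return "iterate"           # the data cannot yet settle the question


# Hypothetical result: 40 email sign-ups from 200 landing-page visitors.
print(decide(signups=40, visitors=200))
```

A rule like this only covers the quantitative side. A strong sign-up rate paired with worries about brand voice in follow-up questionnaires still needs human judgement.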

From theory to practice

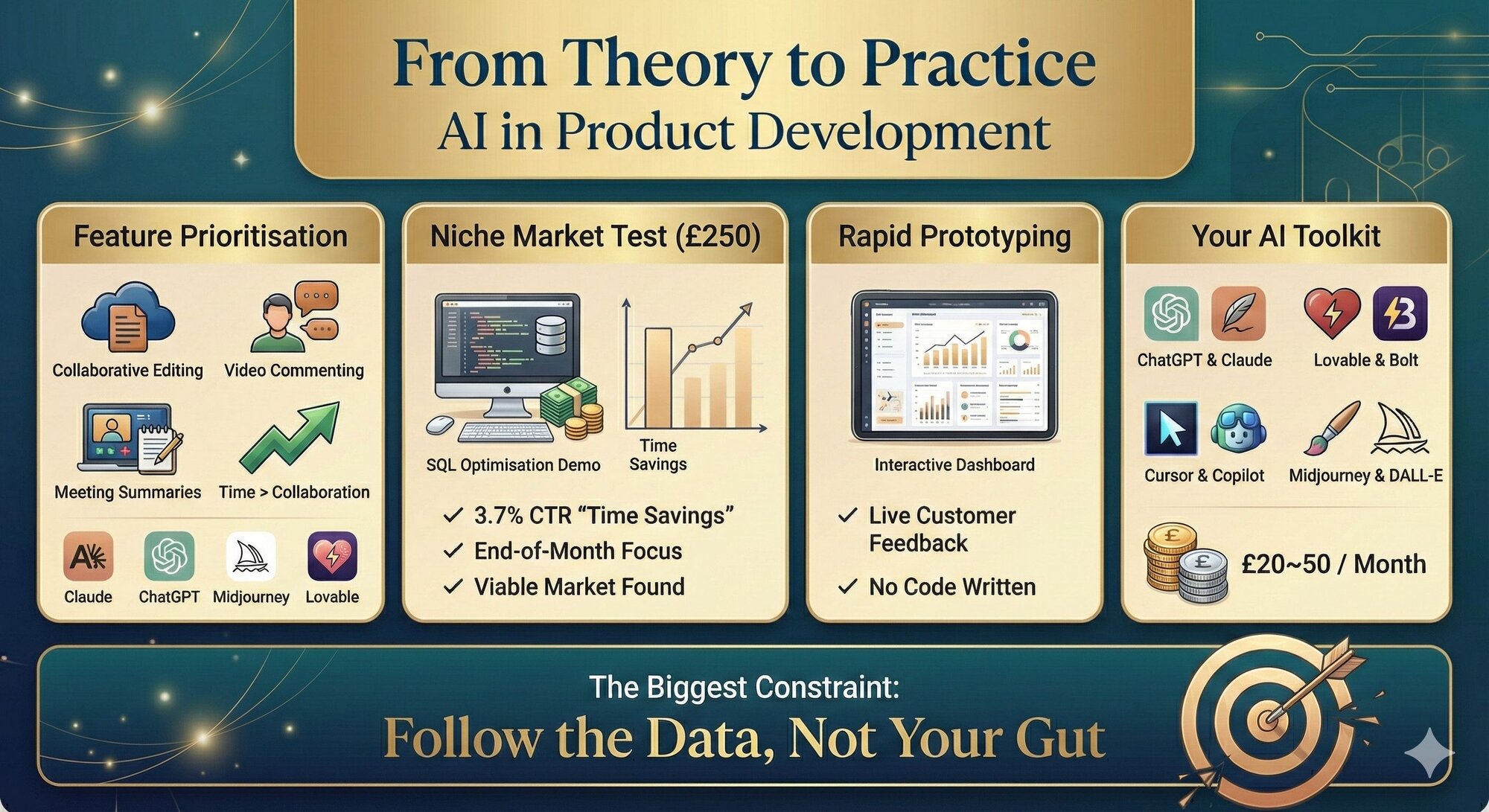

Feature prioritisation is the first shift. When teams face multiple seemingly equal options, AI enables them to test value propositions in the open. Language models help generate positioning and personas, image tools create visual concepts, and vibe-coding platforms turn ideas into real landing pages within minutes. Instead of arguing internally, teams observe what users actually engage with, revealing, in this case, that time savings mattered far more than collaboration mechanics.

Market validation without building a product is the second shift. By generating technical narratives and interactive demos, teams can test whether a niche audience exists before committing engineering effort. Small, targeted campaigns expose which messages resonate and which assumptions are wrong, often uncovering deeper pain points than initially expected.

Rapid prototyping is another key transition shown here. Interactive dashboards and interfaces can now be generated from plain-language descriptions and shared directly with customers. Feedback on structure, priorities, and usability arrives before production code is written, dramatically reducing waste and rework.

Finally, the image summarises the minimal toolkit required. A combination of large language models, vibe-coding tools, developer accelerators, image generators, and basic analytics is enough to run meaningful experiments. The cost is low, the speed is high, and the constraint is no longer technology, but whether teams are willing to follow the data where it leads.

Changing the product paradigm

Data-driven validation with AI is not just an evolution but a revolution in product development, because it reverses the order of work. The traditional cycle of build, launch, and learn is turned upside down and becomes learn, validate, and then build. This reversal has huge strategic implications. Engineering groups can concentrate on amplifying what works rather than fixing what may or may not work. Product managers can stop explaining what went wrong and focus on building on what has worked.

The pattern holds across sectors: organisations that practise early-stage validation outperform those that don’t. At Viamo, we were reaching millions of users in emerging markets, so the impact of every feature decision was enormous. We simply could not afford to guess wrong, so we became obsessed with validating before committing. AI would have dramatically shortened our validation cycles. The lesson is that organisations doing it right accept that a test showing an idea is wrong can be more valuable than one showing it is right.

The problem with product teams is not that they can’t get their hands on these AI capabilities, as they’re widely available and becoming ever more affordable. No, it’s a matter of culture. It’s about convincing people that their favourite feature idea has to be validated before it can be built. It’s about understanding that most ideas will fail in early validation, and that’s okay; it helps to prevent disasters down the road.

This week, consider what your next major product decision will be. Before you open Jira or even begin writing up your specification, take sixty minutes to apply AI to check your atomic hypothesis. Write copy variations. Design mockup variations. Conduct your micro-test. The insights you discover may save your team months of development down the wrong path, or they may confirm that you’re onto something authentic and worthwhile. Either way, you’ll make this determination before creating your first ticket.
