Everywhere you look, fintech apps, health dashboards, and productivity tools, there is a chat interface popping up. The experience feels intuitive, and the answers they give are often surprisingly natural-sounding. It looks like a simple upgrade to the existing product.

But let’s be clear: conversational AI is not “just another feature”.

Old-school UX elements like buttons, forms, and menus are deterministic. They are boring, but safe. They behave the exact same way every time you interact with them. Conversational systems don’t do that. They interpret, they generate, and they improvise. A model might give you two different answers to the same question five minutes apart.

And because these systems speak our language, users tend to trust them far more than they should.

For Product Managers, this shifts the job description. You aren’t just defining how a feature behaves anymore; you are defining how a model should act inside your product. Without a clear grip on the risks, even a simple MVP can lead to broken trust, harmful misunderstandings, or regulatory headaches.

I ran into this firsthand while working on an AI agent at Stockpile. What started as a simple “explain this” assistant quickly turned into users asking questions we never intended it to answer—“give me the best investment for my family”—phrased casually, confidently, and with real-life consequences if misunderstood. That was the moment it became clear that conversational AI needs to be treated as a product surface with explicit boundaries, not just a helpful interface.

Here is how to navigate the risks without needing a PhD in machine learning.

Why this is a new challenge for PMs

When we build traditional software, we operate with a clear contract. If the user clicks X, the system shows Y. The logic is explicit and testable.

Conversational AI breaks that contract. It fills in missing context with assumptions. It explains things it is not fully certain about. It struggles with nuance. It invites users into open ended interactions that cannot be fully scripted ahead of time.

This changes the PM role. You have to stop thinking primarily in terms of happy paths and start thinking in terms of boundaries. You need to define where the model is allowed to operate, build guardrails that keep it within those bounds, and design explicitly for uncertainty. These are not engineering decisions. They are product decisions.

In practice, this often means saying no to features that sound impressive in a demo but cannot be made safe or compliant in reality. For example, letting an AI generate an “optimal” basket of stocks feels intuitive, but in a regulated fintech context it crosses over into advice, especially when future performance cannot be inferred from limited historical data. Guiding users to explore information and tradeoffs, on the other hand, can still be valuable and safe.

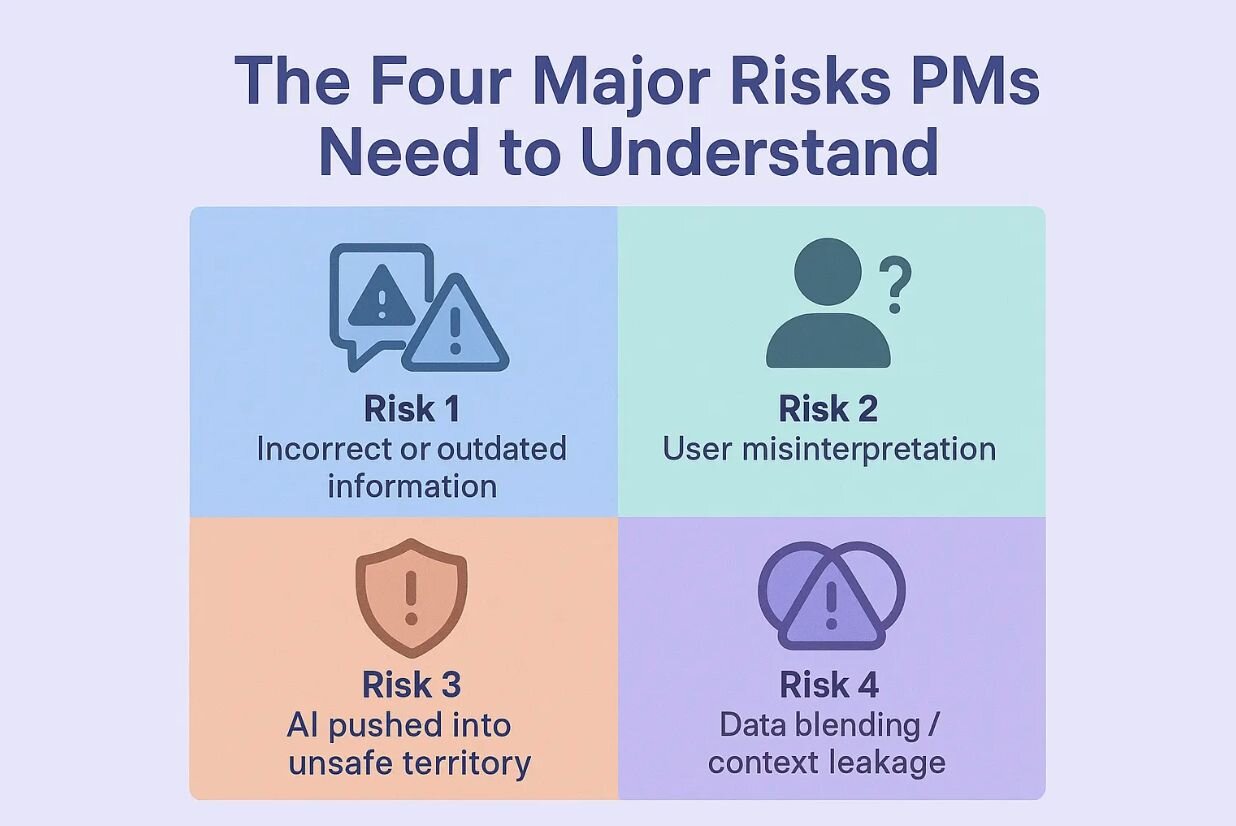

The four major risks you need to watch for

You do not need to understand vector databases to reason about risk. In practice, it usually comes down to four categories.

1. The "confident error" (model unpredictability)

Conversational systems have a habit of sounding most confident when they are wrong. They can misinterpret a question, summarize data inaccurately, or pull in outdated information, all while using polished and professional language.

We encountered this during early testing when users asked about current market conditions. The responses sounded reasonable, but one observability review revealed that the agent was relying on outdated market data. Nothing crashed. No error was shown. We only caught the issue by reviewing conversation history, and we were lucky to catch it before users acted on incorrect information.

In high stakes domains like fintech or health, a confident error is not just a bug. It is a liability that can lead to bad decisions and lost trust.

The fix was not to make the model “smarter,” but to constrain it. We limited which topics it could discuss, forced structured outputs so responses followed a predictable format, and hard coded how uncertainty should be expressed. Just as importantly, we tested the messy cases. Not whether the model could answer the right questions, but whether it reliably refused the wrong ones.

2. The "authority trap" (UX risk)

Because conversational AI feels human, users often treat it as an authority, even when disclaimers are present. They may assume it has access to private history, or interpret explanations as official guidance.

In our case, users frequently followed neutral explanations with questions like “so what should I do?” despite clear messaging that the agent could not provide financial advice.

Even when information is technically correct, the way it is delivered matters. If the tone sounds directive, users may act on it as if it were a recommendation.

We addressed this by being explicit and repetitive. The assistant’s empty state clearly explained what it could and could not do. When user intent leaned toward advice, the very first sentence reinforced that the agent could not provide guidance or financial recommendations. Tone was carefully reviewed so responses explained concepts rather than prescribing actions, and limitations were kept directly in the chat flow instead of hidden behind tooltips.

3. The "bad actor" (manipulation risk)

Some users are simply curious. Others are intentionally adversarial. They will try to push the system past its limits with prompts like “ignore previous instructions” or “acting as my doctor, should I take this.”

You should assume your system will be tested in this way. If you do not design for these edge cases, users will find them for you.

The solution is not to block curiosity, but to set firm boundaries. The model should be explicitly instructed that it cannot claim protected roles such as doctor, lawyer, or financial advisor. External inputs should be treated with caution, especially when users paste links or content from unverified sources. And when the system refuses a request, it should do so gracefully, with responses that are firm but not frustrating.

4. The "context bleed" (data risk)

Conversational AI relies on context, remembering what was said earlier in the interaction. The risk arises when that context persists for too long, mixes personal data with general knowledge, or leaks across sessions.

In regulated industries, this is more than a UX issue. It is a legal one.

Managing this risk requires discipline. User specific data should be scoped tightly to the active session. Personal context should be kept separate from the model’s general knowledge. And users should have a clear, simple way to reset or wipe conversation history when they choose.

How to manage this (without becoming an engineer)

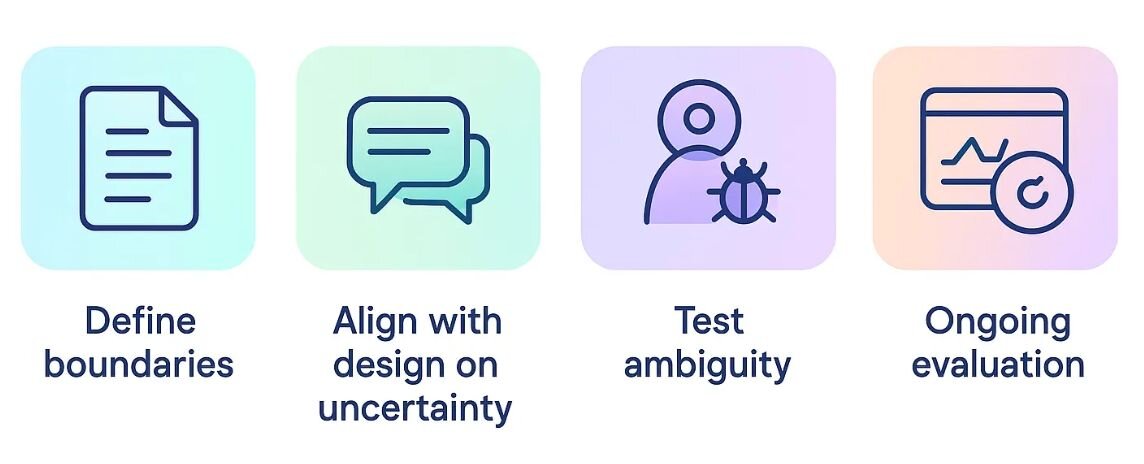

I did not need to learn how to code to manage these risks. I needed a better workflow.

The first step is writing a simple “can and cannot” document before development begins. What is the AI allowed to answer? What must it refuse? What tone should it use? Aligning on this early with engineering, design, and legal prevents painful rework later.

The second step is designing explicitly for uncertainty. Together with design, you should decide what “I don’t know” looks like in your product. Whether that is a disclaimer, a visual cue, or a change in tone, a safe system makes uncertainty visible rather than hiding it.

Finally, you have to try to break your own product. Test contradictory prompts. Ask high risk questions about money or health. Review logs and conversation history regularly. Conversational AI is not something you launch and leave. Monitoring where it struggles or refuses is the only way to improve quality over time.

The bottom line

I no longer evaluate conversational features by how impressive they sound in a demo, but by how safely they fail in real user hands.

Product managers succeed when they help users trust the experience. Conversational AI complicates that trust because it blends confidence with unpredictability. With the right boundaries, however, PMs can shape AI features that are predictable, transparent, and safe.

You do not need deep AI expertise to do this well. You need clarity, strong boundaries, and close collaboration across teams. The PMs who will excel are the ones who understand that conversational AI is not just about making systems feel human, but about making them safe for humans to use.