For the last decade, the implicit contract in SaaS product management was simple: the system retrieves, and the user reasons.

If a user wanted to investigate product returns, they would navigate to a "returns dashboard." The system displayed charts and tables, and the user did the cognitive heavy lifting - reading comments, correlating dates with holidays, and deciding whether to call the vendor. We built "retrieval products." Collaboration between app PMs (who own the user experience) and platform PMs (who own the underlying capabilities) was relatively straightforward: the app PM defined the query, and the platform PM optimized the pipeline for speed and availability.

But with the emergence of agentic AI, that contract has flipped. Users are no longer asking for reports; they are seeking outcomes. They aren't asking "show me the return data." They are asking:

“How many returns of product X from vendor Y occurred in the two weeks after Thanksgiving, and what are the top five reasons for return?”

This shift from retrieval to action breaks our traditional organizational silos. When the product is expected to reason, decide, and act, the horizontal handoffs between "app" and "platform" do not work anymore. To build successful agents, we need to fundamentally re-architect how we collaborate.

The shift from retrieval to action

For years, the fundamental dynamic between a product and its user was clear: we provide the raw information, and they perform the synthesis. In the "retrieval era," a user’s success depended on their ability to stitch together information. If they searched for "returns Q4," our job was done once the table loaded. The cognitive load of reading customer comments, identifying a trend with a specific vendor, and drafting an email to the supply chain team sat entirely with the user.

In the "agentic era," that dynamic has inverted. The user is no longer paying for the tool; they are paying for the outcome. They do not want to see the returns table; they want to know what to do about it. The user intent has shifted from "Find this file" to "summarize the return reasons for product X and draft an email to vendor Y." If our product only fetches the file, we have not just missed a feature - we have failed the job to be done. We are no longer building passive tools that wait for input; we are building active agents that drive outcomes.

The evolution of platform requirements (the knowledge PM view)

Before exploring how platform needs have evolved, it’s essential to define the platform's scope first; otherwise the concept becomes overly broad. We will focus specifically on the knowledge layer as a dedicated platform component within the agentic AI ecosystem.

Traditionally, a platform’s core purpose was efficiency: providing the services, tools and infrastructure that allowed teams to build software faster. As businesses adopt AI agents, platforms are undergoing a fundamental shift - from systems that primarily retrieve information to systems that reason and decide.

This transition changes the platform from a "library" (where you store files) to a "context engine" (where you store meaning). Unlike conventional data platforms that just need to be fast and available, an agentic platform must be semantically accurate. If the platform cannot distinguish between "product X" (the item) and "product X" (a legacy SKU code), the agent will hallucinate. The platform’s job is no longer just to serve data; its job is to provide the "ground truth" that keeps the agent sane.
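As a minimal sketch of what "storing meaning" could look like, the snippet below keeps a small glossary in which the same label maps to two different entities, and refuses to answer when a lookup is ambiguous instead of guessing. The `Entity` fields, the IDs, and the `resolve` helper are all hypothetical names chosen for illustration; a real knowledge layer would back this with a governed catalog rather than an in-memory list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    entity_id: str
    kind: str          # e.g. "item" or "legacy_sku" (assumed categories)
    label: str
    description: str


# Hypothetical glossary: one label, two different meanings.
GLOSSARY = [
    Entity("item-001", "item", "product X",
           "Current catalog item sold to customers"),
    Entity("sku-9X", "legacy_sku", "product X",
           "Retired SKU code from an older system"),
]


def resolve(label, kind=None):
    """Return the single entity matching the label, or raise if the
    label is unknown or still ambiguous after filtering by kind."""
    matches = [
        e for e in GLOSSARY
        if e.label.lower() == label.lower()
        and (kind is None or e.kind == kind)
    ]
    if not matches:
        raise LookupError(f"No entity named {label!r}")
    if len(matches) > 1:
        kinds = sorted(e.kind for e in matches)
        raise LookupError(f"{label!r} is ambiguous; specify one of {kinds}")
    return matches[0]
```

Calling `resolve("product X", kind="item")` returns the catalog item, while `resolve("product X")` raises an error. The design choice is that the agent gets a clear signal to ask for clarification rather than silently picking the wrong meaning.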

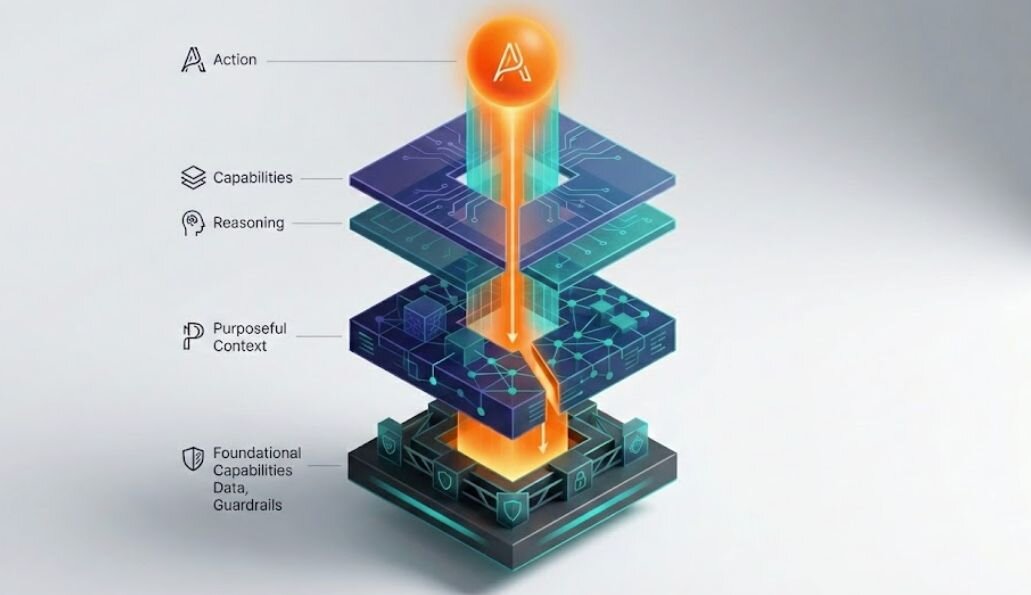

The framework (the 3-layer stack)

To operationalize this new reality, we need a shared vocabulary. We have simplified the agentic product stack into three distinct layers. In our experience, friction occurs when PMs operate as if these layers are independent silos, rather than parts of a single nervous system.

Layer 1: The app (value definition)

This is where the user lives. It is the layer of intent. In the past, the app layer was just a UI - a collection of buttons and forms that users interact with. In an agentic world, the app layer is the interface that captures the user's intent. It records the value the user seeks.

- The job: To capture the outcome (e.g., "fix the inventory issue with vendor Y") rather than just the input (e.g., "filter table by vendor Y").

Layer 2: The agents (the decision maker)

If the app is the interface, the agent is the employee. This layer sits immediately behind the user interface. It is responsible for taking the intent from layer 1 and reasoning through the steps required to achieve it.

- The job: To orchestrate the logic. For our returns use case, the agent must break down the user's question into specific steps: query the transaction DB for the date range, read the unstructured customer comment logs, cluster the reasons semantically, and generate a summary. However, a decision-maker is only as good as the information it has access to - which is why it relies entirely on the layer below.
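The steps in that bullet can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation: the `RETURNS` records are made-up sample data standing in for the transaction DB and comment logs, and simple keyword buckets (`REASON_KEYWORDS`) stand in for real semantic clustering, which would typically rely on a model.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical sample records; a real agent would query the
# transaction DB and the customer comment logs instead.
RETURNS = [
    {"product": "X", "vendor": "Y", "date": date(2024, 11, 30),
     "comment": "Arrived broken, box was crushed"},
    {"product": "X", "vendor": "Y", "date": date(2024, 12, 3),
     "comment": "Wrong size, too small"},
    {"product": "X", "vendor": "Y", "date": date(2024, 12, 5),
     "comment": "Screen broken on arrival"},
    {"product": "X", "vendor": "Z", "date": date(2024, 12, 2),
     "comment": "Too small"},
    {"product": "X", "vendor": "Y", "date": date(2025, 1, 10),
     "comment": "Changed my mind"},
]

# Keyword buckets are a stand-in for semantic clustering.
REASON_KEYWORDS = {
    "damaged": ("broken", "crushed", "cracked"),
    "sizing": ("size", "small", "large"),
}


def thanksgiving(year):
    """US Thanksgiving: the fourth Thursday of November."""
    first = date(year, 11, 1)
    offset = (3 - first.weekday()) % 7  # Monday is 0, Thursday is 3
    return first + timedelta(days=offset + 21)


def classify(comment):
    """Map a free-text comment to the first matching reason bucket."""
    text = comment.lower()
    for reason, words in REASON_KEYWORDS.items():
        if any(word in text for word in words):
            return reason
    return "other"


def summarize_returns(product, vendor, year, top_n=5):
    """Count returns in the two weeks after Thanksgiving and rank reasons."""
    start = thanksgiving(year)
    end = start + timedelta(days=14)
    matched = [
        r for r in RETURNS
        if r["product"] == product
        and r["vendor"] == vendor
        and start < r["date"] <= end
    ]
    reasons = Counter(classify(r["comment"]) for r in matched)
    return {"count": len(matched), "top_reasons": reasons.most_common(top_n)}
```

With the sample data, `summarize_returns("X", "Y", 2024)` finds three returns, two classified as "damaged" and one as "sizing". Every step depends on data the agent does not own: the vendor filter, the date field, and the comment text all come from the layer below.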

Layer 3: The knowledge (the context engine)

To an agent, knowledge is oxygen. While the general-purpose models behind products like ChatGPT or Gemini arrive trained on general world data, they are blind to your reality. This layer bridges that gap. It is not just a storage unit; it is a refined, queryable ecosystem that transforms raw enterprise data into the specific, retrieval-ready truths your agents need.

- The job: To provide deeper context. While organizations rush to fund AI strategy and slick dashboards, they often quietly skip the boring foundational work of data quality and definitions. But AI does not fail at the top; it fails because the bottom - the knowledge layer - was never finished.

The friction: The silo effect

The most common failure mode we see is not technical - it is organizational. When the app PM (owning layers 1 and 2) and the platform PM, also referred to as the knowledge PM (owning layer 3), work in silos, they optimize for different things, leading to a broken product.

The value delivery gap (app PM perspective)

While building a learning platform, we initially focused on "coverage." We aimed to index every tutorial and feature in our ecosystem to ensure we had a massive volume of content. However, we realized that users did not care about the breadth of the catalog. They cared about the depth of their specific needs, such as "removing a background." If the knowledge is not structured to answer that one specific question, the largest library in the world is still useless to the user.

The biggest risk in agentic AI is not that the code fails; it is that the value fails. This often manifests as a "value delivery gap" where layer 3 optimizes for metrics that do not actually help layer 1.

Consider our example: “What are the top five reasons for return?”

To deliver this, the knowledge team must optimize for depth - ensuring the agent specifically understands what constitutes a "return reason" inside a messy paragraph of customer text. However, if the knowledge team works in a silo, they might optimize for breadth - ingesting every file in the company to say they have "100% coverage." You end up with a powerful database that creates a useless agent. The user does not care that you indexed a million files; they care that the agent missed the nuance of why the product was returned.

The hidden complexity (knowledge PM perspective)

We have seen the "iceberg" problem firsthand in platform development. As we moved into late 2025, it became clear that real transformation requires fixing what lies beneath the surface. We spent time addressing broken data pipelines and resolving years of integration debt. We had to document business terms that were previously just tribal knowledge. AI is not a magic fix for legacy code. It is a spotlight that exposes the cracks in your foundation. If you do not fix the infrastructure underneath, your agentic strategy will eventually sink.

Let us start with a typical enterprise reality. The knowledge PM is often responsible for legacy systems built over many years, data pipelines with unclear ownership, and accumulated technical debt.

When an app PM asks for "returns data," they see a simple request. The knowledge PM sees the mess underneath: three different ERP systems, unstructured text logs that haven't been cleaned in five years, and inconsistent vendor IDs. Daunted by this complexity, knowledge PMs frequently default to a technology-first mindset - focusing on building a generic, robust data lake first ("Let's get all the data in one place!") rather than solving the specific problem ("Let's just clean the vendor Y data"). This leads to generic platforms that are technically impressive but fail to support the agent's specific reasoning needs. The aim isn’t to build either an overly broad platform or a narrowly use-case-specific one; the right solution sits in the middle and depends on better organizational collaboration.

The solution: The "reverse-waterfall" collaboration

Solving this requires inverting the roadmap. We call this the "reverse-waterfall" or "action-first" approach.

In a traditional waterfall, you start with the data you have ("what's in the DB?") and build up to the UI. In agentic AI, you must start with the action the agent must take and force that requirement down through the stack.

Step 1: Define the capabilities (top-down)

My requirements used to be lists of data fields: title, duration, skill level, and so on. Now, I define capabilities. I ask: "Can the agent distinguish between a user who is technically stuck and one who is looking for creative inspiration?" The requirement is no longer the data itself. It is the agent's ability to reason through the user’s specific hurdle.

We stop asking, "What data is available?" and start asking, "What high-value capabilities must the agent possess?"

In our "reverse-waterfall" model, the app PM defines the target capabilities first. This is not about building one disjointed use case at a time. It is about identifying the core action patterns the agent needs.

For our returns example, the app PM does not just ask for a "returns API." They define the reasoning capability:

"The agent must be able to correlate structured transaction counts (how many?) with unstructured customer sentiment (why?) for specific vendors."

This specific reasoning requirement becomes the mandate for the entire stack, and can be demonstrated with multiple examples or use case scenarios.

Step 2: Build purposeful context layers (bottom-up support)

On the platform side, the key challenge is balancing foundational investment with specific value delivery. Instead of building a generic "data lake," the knowledge PM builds a purposeful context layer that vertically integrates knowledge for the capability defined in step 1.

To support the "product returns" agent, the knowledge PM’s roadmap shifts to prioritize:

- Metadata ingestion: unifying "product" and "vendor" definitions across systems.

- Transactional connections: linking orders to returns.

- Code indexing: allowing the agent to understand business logic stored in SQL.

Building this purposeful layer requires integrating these sources into a knowledge layer that is lean, relevant, and query-ready for agents. By focusing on the agent's need to reason, the platform PM avoids over-engineering generic tools and instead builds a purpose-built context engine that actually solves the user's problem.
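To make the first two roadmap items concrete, here is a minimal sketch, assuming two source systems that identify the same vendor differently. The alias table, system names, and record shapes are all hypothetical. The point is only that unifying vendor definitions and linking orders to returns is what lets an agent ask about "vendor Y" once and get every relevant return.

```python
# Hypothetical mapping from system-specific vendor IDs to one canonical ID.
VENDOR_ALIASES = {
    ("erp_a", "V-0042"): "vendor-y",
    ("erp_b", "42"): "vendor-y",
    ("erp_b", "77"): "vendor-z",
}

# Hypothetical orders from two systems with inconsistent vendor IDs.
ORDERS = [
    {"order_id": "o1", "system": "erp_a", "vendor_id": "V-0042", "product": "X"},
    {"order_id": "o2", "system": "erp_b", "vendor_id": "42", "product": "X"},
    {"order_id": "o3", "system": "erp_b", "vendor_id": "77", "product": "X"},
]

# Hypothetical returns, each pointing back at an order.
RETURNS = [
    {"return_id": "r1", "order_id": "o1", "comment": "Arrived broken"},
    {"return_id": "r2", "order_id": "o3", "comment": "Too small"},
    {"return_id": "r3", "order_id": "o2", "comment": "Wrong colour"},
]


def canonical_vendor(system, vendor_id):
    """Metadata step: translate a system-local vendor ID to the shared one."""
    return VENDOR_ALIASES[(system, vendor_id)]


def returns_with_context():
    """Transactional step: join each return to its order so every row
    carries the product and the canonical vendor."""
    orders_by_id = {o["order_id"]: o for o in ORDERS}
    rows = []
    for r in RETURNS:
        order = orders_by_id[r["order_id"]]
        rows.append({
            "return_id": r["return_id"],
            "product": order["product"],
            "vendor": canonical_vendor(order["system"], order["vendor_id"]),
            "comment": r["comment"],
        })
    return rows
```

Filtering the result for `"vendor-y"` yields returns `r1` and `r3`, even though they originated in different systems under different IDs. Without the alias table, a naive query against either system alone would miss one of them.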

At the same time, the platform must include strong foundational capabilities - such as security, privacy, AI ethics, regulatory compliance, and clear monitoring and guardrails for latency, cost, and SLAs - to scale reliably and operate consistently. A platform that delivers accurate results too slowly, or at a cost higher than the value it creates, ultimately fails to deliver real ROI. The goal is to start with the must-haves rather than falling into the trap of over-engineering the context layer.
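One way to keep the latency and cost guardrails honest is to express them as an explicit check that both PMs agree on. The sketch below is an assumption-laden illustration: the `Budget` fields, the thresholds, and the idea of attaching an estimated dollar value to each answer are ours, not a standard.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    max_latency_s: float   # slowest acceptable response, in seconds
    max_cost_usd: float    # highest acceptable cost per request


def check_guardrails(latency_s, cost_usd, estimated_value_usd, budget):
    """Return the list of guardrails a single agent response violated."""
    violations = []
    if latency_s > budget.max_latency_s:
        violations.append("latency")
    if cost_usd > budget.max_cost_usd:
        violations.append("cost")
    # An accurate answer that costs at least as much as it is worth
    # still fails to deliver ROI.
    if cost_usd >= estimated_value_usd:
        violations.append("negative_roi")
    return violations
```

For example, with `Budget(max_latency_s=5.0, max_cost_usd=0.10)`, a response that takes 2 seconds and costs $0.05 against an estimated value of $1.00 passes cleanly, while one that takes 8 seconds and costs $0.50 against a value of $0.25 fails all three checks.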

Practical advice: The "reality check"

The app PM: Defining value and usability

The app PM must clearly articulate the outcome-driven requirements to the knowledge PM through three lenses:

- Capabilities: What high-value capabilities must the agent possess to achieve the goal?

- Business value: What will actually move the needle for users or the business? Is this a foundation we can build on for future use cases, or is it a one-off ask?

- Usability: How accurate does the AI have to be to make it truly adoptable? How quickly can we drive tangible value for our organization?

The knowledge PM: Highlighting feasibility and risk

The knowledge PM must provide the reality of the technical foundation, helping the app PM understand the constraints:

- Feasibility (technical fit): Is the underlying data actually AI-ready? How accessible, structured, and trustworthy is the information for this specific use case?

- Risk tolerance: What are the known technical bottlenecks and their implications (e.g., data latency, security protocols, privacy constraints, or response times)?

By asking these questions and working towards a solution together, you stop being two PMs managing two different layers and become co-authors of a single, intelligent product.
