This article is part of our AI Knowledge Hub, created with Pendo.

Artificial intelligence (AI) has been sprinting forward at a mind-boggling pace recently, throwing up more questions than answers. I wrote a piece about the impact of AI on product management, one where I kind of shrugged and said “how about we chat again in 100 years?” Well, I’ve been reflecting on the points I made.

I’m still as clueless as I was about when or if AI is going to elbow in on roles needing a soft touch or heavy dose of critical thinking. Truth be told, the crystal ball has got even foggier. So here’s what I propose: let’s take a step back and see what’s been brewing since May.

In this article, I want to examine:

- Predictions and their tricky nature: Exploring the accuracy of past AI forecasts, and the factors that complicate these predictions.

- La La Land vs The Matrix: Influential voices on AI’s impact on employment: A comparative discussion on divergent perspectives about AI’s effect on the job market.

- When AI starts having memory: Delving into the latest developments in AI memory capabilities.

- When AI can observe, reflect and plan: Understanding how AI’s ability to emulate human cognition is revolutionizing product management.

- The similarities between Smallville agents and product manager tasks: What are Smallville and Smallville agents, and how does the evolution of AI compare with the challenges and tasks of product management?

- Roadblocks for Smallville agents on the path to AGI: Addressing the hurdles AI must overcome to reach true general intelligence.

As product managers, we navigate the complexities of our field armed with data-driven insights, yet we also appreciate the value of strategic decision-making in the face of ambiguity. I’ve curated a mix of research findings, articles, data points, and personal interpretations to bring this discussion to you. I eagerly await your perspectives — our collective discourse is what truly enriches the conversation.

Predictions and their tricky nature

Let’s go back to 1964, when the Triple Revolution report landed on President Lyndon Johnson’s desk. Written by a brilliant team, including two Nobel laureates, this report warned that the US was teetering on the edge of economic and social chaos, with industrial automation ready to pink-slip millions. Fast-forward half a century and the sky’s still up there.

Today, tech giants, industry spectators, critics and scholars are wrestling with the repercussions of AI’s lightning-speed evolution on humanity’s future. However, making predictions is like tip-toeing through a minefield. As Kai-Fu Lee confessed, his 80s and 90s prediction of speech recognition being ready in ‘the next five years’ was way off the mark.

Sure, tech’s potential downsides are often spotlighted. But historically, technological advances birthed new roles, often more engaging, safer, and plumper in the wallet department than their predecessors. Technology may bulldoze entire industries and jobs, but it can also be the midwife to new sectors and career paths.

How can we tell if history will repeat itself or if it will be a different kettle of fish this time? Martin Ford gives a compelling example in his TED talk: Cars vs. Horses.

Horses saw their career prospects gallop off into the sunset with the advent of automobiles. You might argue, “Yeah, but horses were stuck in a rut of repetitive, inefficient labor, hence machines muscled in.” Hold your horses right there and let’s take another look at those machines.

These car ‘machines’ were also doing repetitive, not-very-brainy work — just more efficiently. It wasn’t the horses driving change, nor the machines — it was humans.

Zoom to 2023, and we’ve stirred up something way spicier: machines capable of processing language faster than a teenager texts, strategizing like chess champs, and reasoning like philosophers. Pitch me against an AI in any of those domains, and I’d likely end up eating dust. I bet you’re guessing where this is heading and you’re probably right to think, “Hey, there are other factors at play that will shape our future.”

My point here is that many have attempted to predict AI’s impact because uncertainty about our future gives us the heebie-jeebies. Cue fortune telling, or as fancy folks might call it, Laplace’s demon. This thought experiment proposes that if someone knew the location and momentum of every atom in the universe, they could predict the future using classical mechanics. But here’s the Catch-22: this would require more computational power than all the atoms and energy in the universe could provide.

Given that we’re unlikely to amass that sort of computational firepower, our predictions often fall flat. Our innate biases and self-interest blind us to factors needed for accurate predictions.

Even though most predictions end up wide of the mark, I reckon we still manage to get some stuff right. There’s a limit to how accurately we can predict but, as they say, history doesn’t repeat itself, though it often rhymes.

La La Land vs The Matrix: Influential voices on AI’s impact on employment

One faction argues that AI will usurp our jobs and turn us into human batteries in a century or two, while the other lot is busy painting a brand-new world replete with rainbows and unicorns.

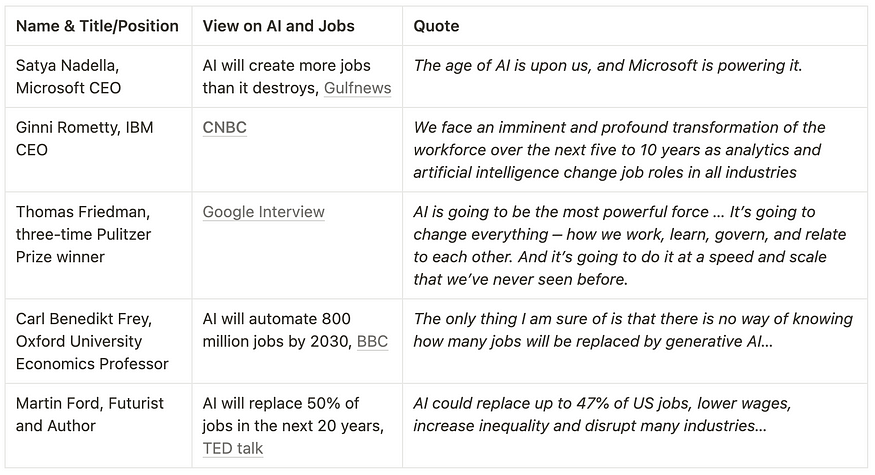

I’ve wrangled together a smorgasbord of recent quotes by influential voices on how AI might reshape the employment landscape. I urge you to delve into the linked documents or watch the attached videos yourself. This will help you shape your perspective, free from the coloring of mine.

The when and how of these predictions could be wide of the mark, but the consensus is that change is on the horizon. The speakers’ roles influence their opinions, with CEOs leaning towards the “Don’t Look Up” camp, focusing on the silver lining and their products’ potential for positive change. On the flip side, writers and scholars are more concerned with the potential impacts on our everyday lives, painting a cyberpunk-style apocalypse.

Many roles that are purely mechanical are gradually being outsourced to technology, to machines that do the job more cost-effectively. As we shift automation up a gear, many more jobs could become redundant, with machines taking over from humans. That seems unavoidable.

So, how do these predictions relate to you, a product manager?

Our jobs will likely weather the AI storm better than others, at least until AGI arrives. AI-powered tools like ChatGPT will enhance product management tasks such as market research and writing tickets, but they lack the ability to fully replace product managers.

But that’s considering GPT from a chat UI perspective. What about the underlying large language model (LLM)? Do GPT-4 or other LLMs have the potential to replace product managers? If not yet, is the day of reckoning closer than we’d like to think?

To explore these questions, I dived into papers on AGI and theory of mind (ToM). Next, I want to share how machines are beginning to demonstrate capabilities like observation, reflection, planning, sensing, negotiation and more.

When AI starts having memory…

We all know that LLMs can be used for a variety of tasks, but the complexity of the task that can be performed is limited by the amount of memory that the LLM has. For example, an LLM with limited memory may not be able to generate text that is as long or complex as an LLM with more memory.

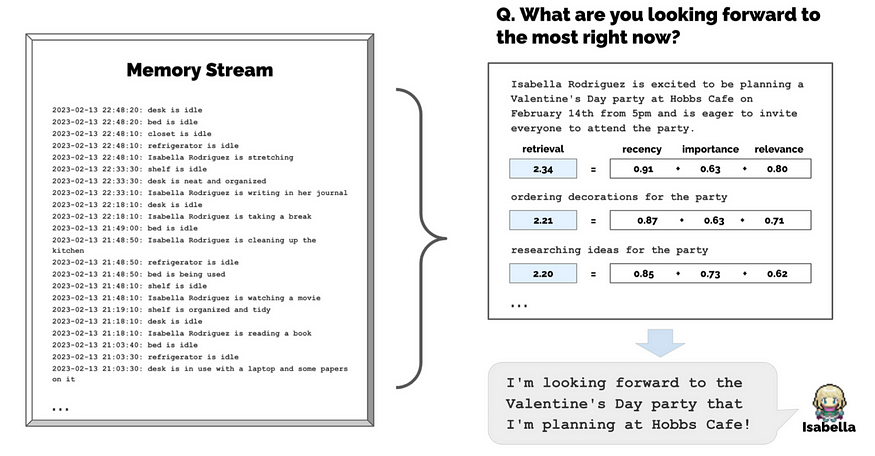

The paper “Generative Agents: Interactive Simulacra of Human Behavior”, published in April 2023, looks into using LLMs to simulate believable human behavior. The research ran in an interactive sandbox environment, Smallville, inspired by games like The Sims. It’s essentially a small town populated by 25 generative agents. These Smallville agents are designed to simulate human-like behavior, making them appear believable and relatable. They can remember, retrieve, reflect and interact with other agents, and they can plan through dynamically evolving circumstances.

The simulation is achieved by storing a complete record of their experiences using natural language, synthesizing those memories over time into higher-level reflections, and retrieving them dynamically to plan behavior. It demonstrated how generative agents are able to simulate believable human behavior. The agents can observe the world around them, plan their actions, and learn from their experiences.
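To make the store-and-retrieve idea concrete, here’s a minimal Python sketch of a memory stream. It is my own illustration, not the paper’s code: the `Memory` and `MemoryStream` names are hypothetical, and the scoring rule (word overlap with the query plus a small recency bonus) is a simplified stand-in for whatever retrieval function the authors actually use.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    text: str                  # natural-language record of an experience
    created_at: int            # simulation step when it was stored (hypothetical clock)
    kind: str = "observation"  # "observation", "reflection", or "plan"


@dataclass
class MemoryStream:
    memories: list[Memory] = field(default_factory=list)

    def add(self, text: str, now: int, kind: str = "observation") -> None:
        """Append a new record; nothing is ever overwritten."""
        self.memories.append(Memory(text, now, kind))

    def retrieve(self, query: str, now: int, k: int = 2) -> list[Memory]:
        """Return the k best-scoring memories for the query.

        Score = shared words with the query + a recency bonus.
        Both terms are illustrative assumptions, not the paper's formula.
        """
        query_words = set(query.lower().split())

        def score(m: Memory) -> float:
            overlap = len(query_words & set(m.text.lower().split()))
            recency = 1.0 / (1 + now - m.created_at)
            return overlap + recency

        return sorted(self.memories, key=score, reverse=True)[:k]


stream = MemoryStream()
stream.add("forgot umbrella and walked home in the rain", now=1)
stream.add("ate breakfast at the cafe", now=2)
stream.add("rain makes me cold and frustrated", now=3, kind="reflection")

# The two rain-related memories outrank the breakfast one,
# with the more recent reflection first.
for m in stream.retrieve("rain forecast today", now=4):
    print(m.kind, "|", m.text)
```

The design choice worth noticing is that reflections live in the same list as raw observations, so a higher-level insight can be retrieved just like any other memory.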

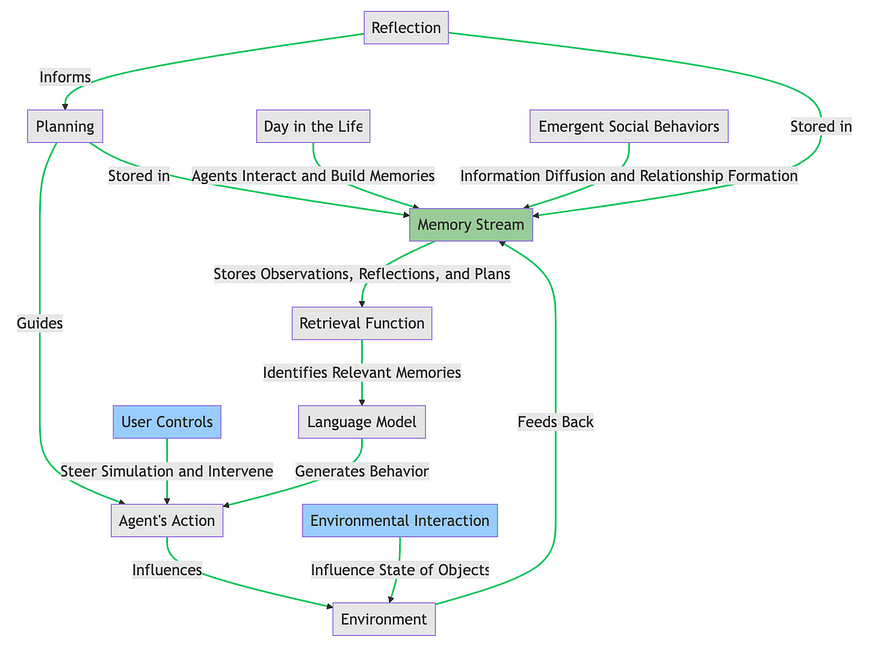

Here’s the Smallville agents’ memory stream process, as I understand it from the paper.

In this diagram, the “Memory Stream” in green is the central component where observations, reflections and plans are stored. All other steps in the agent’s planning process are connected to this memory stream, but are shown in gray to emphasize the importance of the memory stream. The “User Controls” and “Environmental Interaction” blocks in blue, which represent prompts from humans, are highlighted to distinguish them from the steps driven by the AI.

Humans and the Smallville agents store memories in a similar way. We both store memories as a network of associated information. This allows us to reconstruct the past and make sense of the present. When we remember something, we are not simply retrieving a single piece of information. Instead, we are retrieving a network of associated memories. This network of memories allows us to reconstruct the event in our minds and to understand the significance of the event.

Think about when you forgot your umbrella on a rainy day. You might remember how wet and cold you felt, and how you had to walk home in the rain. You might also remember how frustrated you felt, and how you vowed to never forget your umbrella again.

The Smallville AI works in a similar way. When a Smallville agent remembers something, it is not simply retrieving a single piece of information. Instead, it retrieves a network of associated memories, which allows the agent to reconstruct the event and understand its significance.

How do memory and the retrieval of information affect the actions and planning of AI?

When AI can observe, reflect and plan

The umbrella example demonstrates how an unpleasant memory could trigger future planning. You’ve learned from your mistake, and you create a reminder on your phone for rainy days to prevent a similar mishap. You observed, reflected, and planned an action — a process the Smallville AI agents can also perform.
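The observe, reflect and plan cycle from the umbrella example can be sketched in a few lines of Python. This is a toy under loud assumptions: the function names are hypothetical, and hard-coded rules stand in for the steps that a language model would perform in a system like Smallville.

```python
def observe(event: str, memory: list[str]) -> None:
    """Store a raw observation in memory."""
    memory.append(f"observation: {event}")


def reflect(memory: list[str]) -> None:
    """Turn related observations into a higher-level insight.

    A hard-coded rule stands in for the language model here.
    """
    got_wet = any("rain" in m and "no umbrella" in m for m in memory)
    insight = "reflection: I get soaked when I forget my umbrella"
    if got_wet and insight not in memory:
        memory.append(insight)


def plan(memory: list[str], forecast: str) -> str:
    """Choose today's action using any stored reflections."""
    learned = any(
        m.startswith("reflection:") and "umbrella" in m for m in memory
    )
    if forecast == "rain" and learned:
        return "set a phone reminder to take the umbrella"
    return "leave home as usual"


memory: list[str] = []
print(plan(memory, "rain"))  # nothing learned yet: leave home as usual

observe("walked home in the rain with no umbrella", memory)
reflect(memory)
print(plan(memory, "rain"))  # now plans the reminder
```

The same forecast produces a different plan once the reflection is in memory, which is the whole point: behavior changes because of what was remembered, not because the rules changed.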

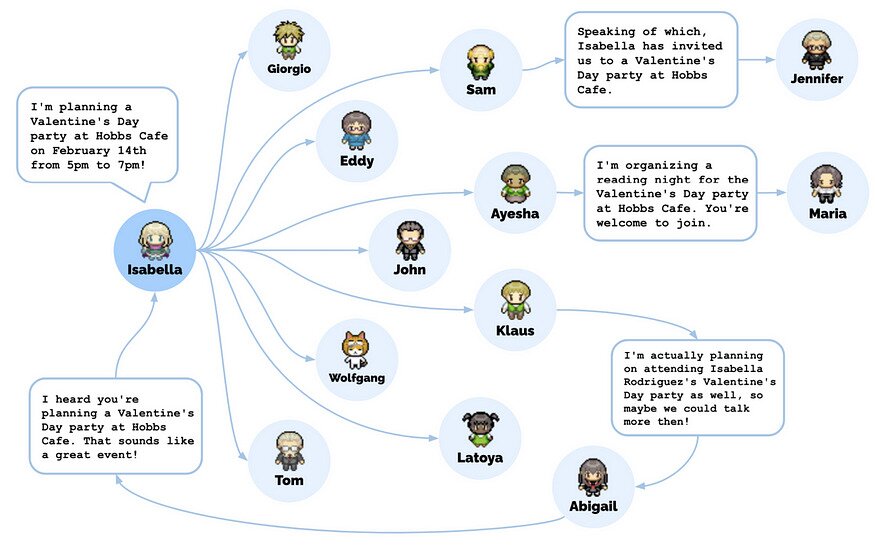

In an impressive display of these capabilities, the Smallville agent named Isabella managed to organize a successful party despite multiple potential points of failure. She remembered to invite other agents, who then had to recall the invitation and decide to attend.

This chain of events, triggered by a single user prompt, culminated in an AI-organized party that even included date invitations!

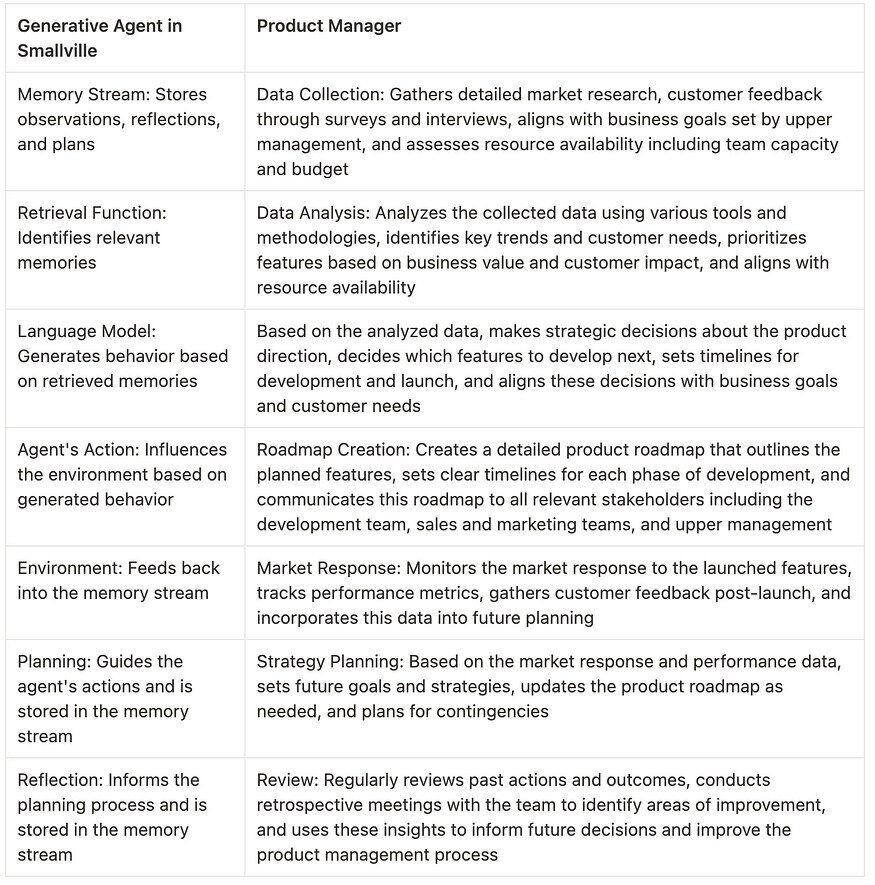

The similarities between the Smallville agents and product manager tasks

The similarity between the AI’s behavior and the tasks performed by a product manager is quite striking. Both roles involve decision-making, planning, and execution based on available information and memory. Here’s a comparative analysis to highlight the parallel workflows of Smallville’s AI agents and product managers:

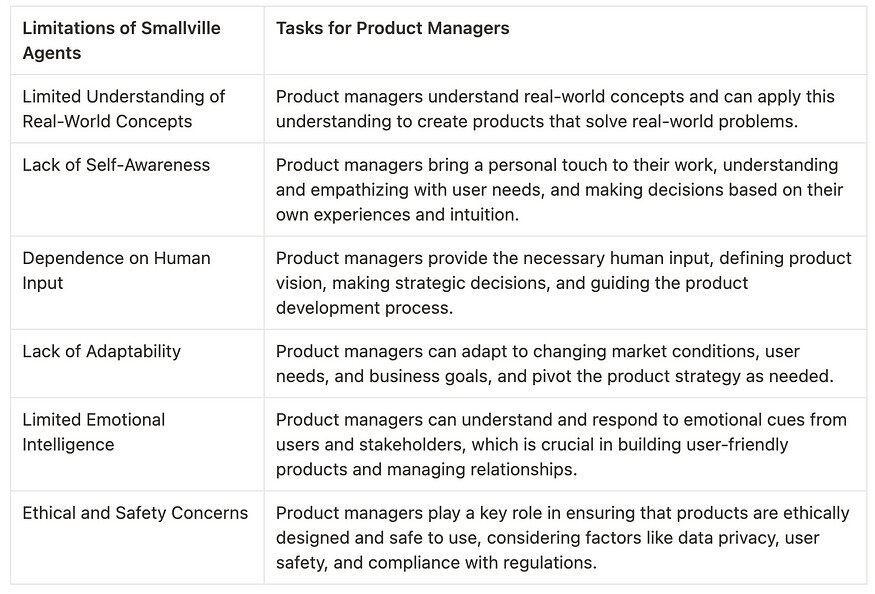

However, it’s crucial to note that this doesn’t signal the dawn of AI taking over product management roles. There are significant limitations to the capabilities of AI agents like those in Smallville that keep them from achieving Artificial General Intelligence (AGI).

Roadblocks for Smallville agents on the path to AGI

In my opinion, product management roles will only be in jeopardy when AGI is developed and widely integrated into the commercial world. The road to AGI, however, is riddled with complex challenges that surpass the capabilities of current technologies, despite the significant advancements represented by systems like Smallville.

Here’s a table highlighting where product managers are still indispensable in the process of creating effective IT products, juxtaposed against the limitations of Smallville agents:

This comparison underscores the importance of human involvement in any product development process, and how our roles complement the capabilities of AI systems like the Smallville agents. While we wait for true AGI to arrive and become a commercial reality, the human touch remains an essential ingredient in the successful execution of product management tasks.

Conclusion

In closing, the evolution of artificial intelligence, embodied by LLMs and systems like Smallville, continues to astound us. The ability of these AI agents to observe, remember, and plan actions represents a significant leap forward in our quest towards true Artificial General Intelligence. But let’s not pack up our desks just yet. As product managers, our unique blend of strategic thinking, empathy, adaptability, and real-world understanding still sets us apart in this AI-driven landscape.

The marriage of human expertise with AI capabilities could potentially revolutionize the way we manage products. The key lies in finding the sweet spot where AI can augment our decision-making process, reducing biases, and improving efficiency, while we maintain the crucial oversight of strategy, ethics, and human-centric design.

So, as we keep an eye on the burgeoning field of AI and its potential impact on our roles, let’s also celebrate the distinctively human skills we bring to our craft. The era of AI is not a threat but an opportunity for us to level up, to combine the best of human intuition and AI precision, creating products that are more useful, intuitive, and delightful than ever.

Stay tuned for the next article, where we’ll continue to explore the dynamic relationship between product management and the ever-evolving world of AI. There’s much more to learn, and the future, as they say, is only just beginning.

References

- Revolutionizing Product Management with ChatGPT: A Deep Dive into How Chatbot Can Help Product Managers Succeed

- AI and the Human Future: Net Positive

- How we’ll earn money in a future without jobs | Martin Ford

- Laplace’s Demon

- The age of AI is upon us and Microsoft is powering it: Satya Nadella

- A conversation with Thomas Friedman about AI

- AI could replace equivalent of 300 million jobs — report

- Generative Agents: Interactive Simulacra of Human Behavior

Appendix

Based on my understanding of the Smallville agents, here is a list of the analogous things I did when I was still a hands-on product manager:

- Memory stream: This is akin to the knowledge and experience I’ve accumulated over the years, including understanding of the product, market trends, customer feedback, and past project outcomes. This is constantly updated with new information.

- Retrieval function: When faced with a new challenge or decision, I draw upon relevant experiences and knowledge from this “memory stream”. This could be recalling a similar project from the past, or considering feedback from a recent customer survey.

- Language model: This could be compared to the decision-making process, where I use the retrieved information to generate a plan of action or strategy. This could involve creating a product roadmap, defining new features, or setting project goals.

- Agent’s action: This is the implementation of the plan, such as launching a new product feature, initiating a marketing campaign, or coordinating with the development team.

- Environment: This represents the market or business environment in which the product operates. The actions taken will influence this environment, such as changing customer perceptions, affecting sales, or shifting market trends.

- Planning and reflection: These are crucial parts of a product manager’s role. Planning involves setting future goals and strategies based on current information and predictions. Reflection involves reviewing past actions and outcomes to inform future decisions. Both of these are stored in the “memory stream” for future reference.

- User controls and environmental interaction: As a product manager, I also have to interact with various stakeholders (users) and influence the product environment. This could be through user research, stakeholder meetings, or negotiating with vendors and partners.

- Day in the life and emergent social behaviors: These represent the daily interactions and relationships that form in a professional setting. Building relationships with team members, stakeholders, and customers is a key part of a product manager’s role.

Here are the current limitations of the agents, and why they are still not AGI and not replacing product managers:

- Limited understanding of real-world concepts: While Smallville agents can simulate complex interactions and behaviors, their understanding of real-world concepts is limited to the data they’ve been trained on. They may not fully comprehend abstract concepts or nuanced human experiences.

- Lack of self-awareness: AGI implies a level of self-awareness and consciousness that Smallville agents do not possess. They don’t have a sense of self or personal experiences, and their “reflections” are based on programmed responses rather than genuine introspection.

- Dependence on human input: Smallville agents rely heavily on human input for their actions. They need prompts from humans to perform tasks and their behavior is guided by the language model, which is trained on human-generated text.

- Lack of adaptability: While Smallville agents can learn from their memory stream and adjust their behavior accordingly, their ability to adapt to new and unforeseen situations is limited. AGI, on the other hand, would be expected to handle novel situations with the same proficiency as familiar ones.

- Limited emotional intelligence: Smallville agents lack the ability to understand and respond to emotional cues in the way humans do. This could limit their effectiveness in situations where emotional intelligence is crucial.

- Ethical and safety concerns: The paper mentions that there are ongoing efforts to ensure the safety and ethical use of Smallville agents. However, like all AI systems, there are inherent risks and challenges associated with ensuring that they behave ethically and do not cause harm.