Lean Product Managers pride themselves on knowing the right time to deploy a fast experiment to validate a hypothesis and gather user feedback. Prototypes, A/B testing, and landing page MVPs are all popular ways to check whether you’re on the right track with your product ideas.

There are two others that I’ve found very useful, not only for launching new products but for testing features before they are complete. These tests are known as “Wizard of Oz MVP” and “Concierge MVP.” I want to talk through these not only to introduce them to people who haven’t tried them yet, but to differentiate them. Even among product intelligentsia, they tend to get mixed up.

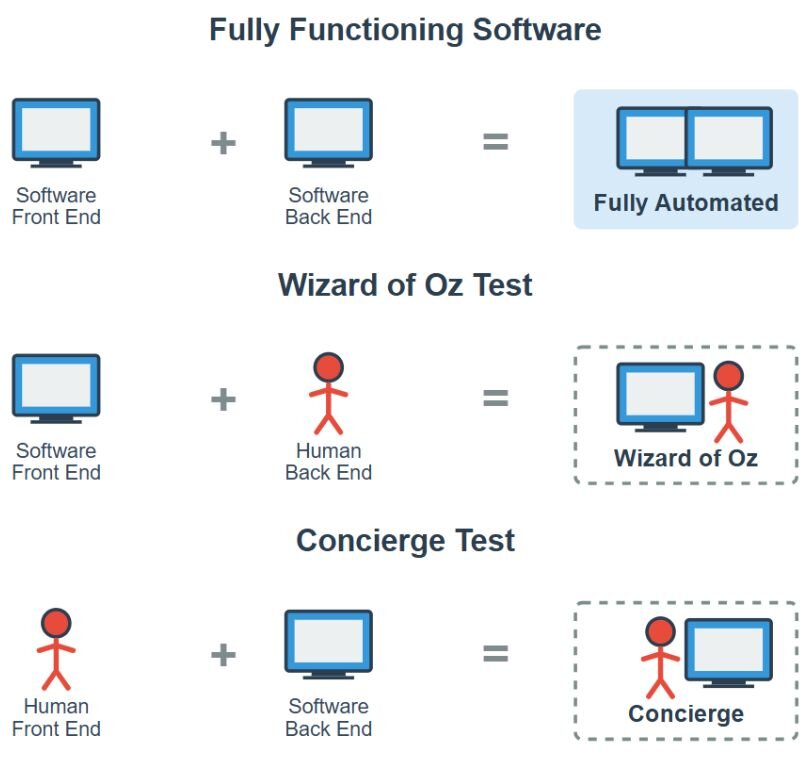

The Wizard of Oz test and the Concierge test both examine a product or feature idea, often with a partially implemented software solution, against the reactions of real users. The idea is to get fast feedback before you invest in building out the rest of the product—or, realistically for many of us in larger enterprises, while you are blocked on building out a part of the product. However, the two experiments have different starting points: with a Wizard of Oz test, you are testing the front end. With a Concierge test, you are testing the back end (or at least a concept for one).

With a Wizard of Oz MVP, you have built a front end, but you are substituting manual work for the back end (or the integration to an existing back end). The name comes from the scene in the film where Toto exposes that the great and powerful wizard is really just a man hiding behind a curtain, pushing buttons and pulling levers. In a Wizard of Oz MVP, you are the “wizard,” or the back end.

In a Concierge MVP, the opposite is true. You may have some sort of back end or engine built, but no user interface. Here, we take inspiration from the concierge desk at a hotel, where an employee takes requests from a guest and performs actions for them, such as ordering tickets to a Broadway show or arranging a cab ride. In this case, you are the “concierge,” or the front end, taking inputs from the user and acting on them yourself, with or without the help of a back-end system.

In other words:

- “I don’t have my back end yet, but I have a screen I want to test out” = Wizard of Oz.

- “I want to test my back end system but I don’t have a screen to connect it to” = Concierge.

Concierge: Human front end, software back end

Let’s talk through an example to see the difference. You are the product manager for a patient-facing health insurance application. You are building out a feature to help users find a practitioner who is accepting patients. There are some third-party databases you can use, and you’ve identified one you might like to contract with. Their sales team has given you temporary access to their full database to try it out, but you have not signed a contract yet and don’t have API keys.

First, before the interface is built, you want to validate that the database is providing useful information to your end users. So you find a couple of test users who are actually looking for new primary care physicians (PCPs) and set up interviews with them. During the interviews, you ask them questions about what they are looking for: location, level of experience, specialties. Your team runs some SQL queries on the database and pulls up some matches. You talk through the results with the patient and give them the contact information for the providers that they are most interested in.
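As a rough illustration of the kind of query the team might run during the interview, here is a minimal sketch using Python's built-in `sqlite3` module. The `providers` table, its column names, and the sample rows are all hypothetical placeholders; a real vendor database would have its own schema and would not be an in-memory SQLite file.

```python
import sqlite3

# Hypothetical stand-in for the vendor's database (schema and rows are made up).
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE providers (
        name TEXT,
        specialty TEXT,
        zip_code TEXT,
        years_experience INTEGER,
        accepting_new_patients INTEGER,
        phone TEXT
    )
    """
)
conn.executemany(
    "INSERT INTO providers VALUES (?, ?, ?, ?, ?, ?)",
    [
        ("Dr. Example A", "primary care", "10001", 12, 1, "555-0100"),
        ("Dr. Example B", "primary care", "10001", 3, 1, "555-0101"),
        ("Dr. Example C", "primary care", "10001", 20, 0, "555-0102"),
        ("Dr. Example D", "cardiology", "10001", 15, 1, "555-0103"),
    ],
)


def find_matches(conn, specialty, zip_code, min_years):
    """Return (name, phone) pairs matching the preferences gathered in the interview."""
    return conn.execute(
        """
        SELECT name, phone
        FROM providers
        WHERE specialty = ?
          AND zip_code = ?
          AND years_experience >= ?
          AND accepting_new_patients = 1
        ORDER BY years_experience DESC
        """,
        (specialty, zip_code, min_years),
    ).fetchall()


matches = find_matches(conn, "primary care", "10001", 5)
```

The query can only filter on what the database claims to know. Whether a provider flagged as accepting new patients really is accepting them is exactly the kind of thing the follow-up with users is meant to check.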

One of the users you interview is interested in knowing specifics about the doctors’ credentials, such as what schools they attended. However, this database doesn’t have that piece of information. Strike one! The other user is less picky and is happy with the three options you pulled up. However, when you follow up with them afterwards, they are dissatisfied. Of the three numbers you gave them, two were for doctors who were not actually accepting new patients, and the third was a wrong number.

Learnings from the Concierge test:

- The database doesn’t have all the data points our users might want.

- The data it does have may be inaccurate.

What to look for going forward:

- What data do users usually want when selecting a provider?

- What is the vendor’s process for updating and verifying data?

Without having built any software ourselves, we’ve uncovered some risks and assumptions that hadn’t been exposed, and can make a plan from here.

Wizard of Oz: Software front end, human back end

Let’s fast forward a few weeks. You’ve settled on a much better vendor and have signed a contract with them. You’re excited to finally build out this feature, but you’re blocked. Your IT team has to do some configuration before you can have API access in production.

But the lean product manager is not so easily foiled! You decide to start building out the feature as a Wizard of Oz MVP. Your team rolls out the user interface for the web portal to a subset of users.

“But a front end that’s not connected to anything isn’t valuable!” the lean product manager interjects. “You should be thinking in vertical slices, not horizontal.”

“Who said it’s not connected to anything?” the author retorts.

When your users open the new provider finder tool in your web portal, they can submit all their preferences. There are drop-down menus, location services, check boxes and radio buttons. Then they click submit. But instead of a screen with their results, they see a notification that tells them they will receive an in-app message with their results shortly.

Meanwhile, your software has sent your team a (secure, HIPAA-compliant) message with the user’s inputs. You go through the same process as before, running queries manually against the database. Then you copy and paste the results into the message system and send. The user gets the message and never knows that it wasn’t automated.
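One way to picture this setup in code: the front end talks to a back-end interface, and for now the implementation behind that interface is a queue that a person works through by hand. The sketch below is only an illustration under that assumption. The names `SearchRequest`, `ProviderBackend`, and `ManualBackend` are hypothetical, and a real system would need secure messaging rather than in-memory lists.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class SearchRequest:
    """Preferences the user submitted through the provider finder form."""
    user_id: str
    zip_code: str
    specialty: str


class ProviderBackend(Protocol):
    """What the front end expects from any back end, manual or automated."""

    def submit(self, request: SearchRequest) -> str: ...


@dataclass
class ManualBackend:
    """Wizard of Oz back end: a team member fulfils each request by hand."""
    pending: list[SearchRequest] = field(default_factory=list)
    outbox: dict[str, list[str]] = field(default_factory=dict)

    def submit(self, request: SearchRequest) -> str:
        # Queue the request for the team instead of calling the vendor API.
        self.pending.append(request)
        return "You will receive an in-app message with your results shortly."

    def fulfil(self, user_id: str, results: list[str]) -> None:
        # Called by the person behind the curtain after querying manually.
        self.pending = [r for r in self.pending if r.user_id != user_id]
        self.outbox[user_id] = results


backend = ManualBackend()
notice = backend.submit(SearchRequest("user-1", "10001", "primary care"))
backend.fulfil("user-1", ["Dr. Example A, 555-0100"])
```

Because the front end depends only on `submit`, the manual implementation could later be replaced by one that calls the vendor’s API once access is available, without changing the screens users have already been testing.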

Not only have you unblocked this feature, but you have enabled yourselves to test it with real users. You can see from your analytics tool where users are falling off the process. You’ve even noticed a few fields where people are inputting the wrong data.

Learnings from the Wizard of Oz test:

- Users are dropping off the process between receiving search results and clicking into provider records.

- Users are inputting city and state in the zip code field.

What to look for going forward:

- Why don’t users open the detailed records for the providers? Do they not see the results they want? Or do they have all the info they need on the results list page?

- Do users prefer to search by city and state, or is the zip code field just poorly labeled? Should we be using location services?

Testing the screen early gives us an opportunity to make UX improvements with real users even before the feature is complete. By the time we are able to integrate, we’ve perfected our front end.

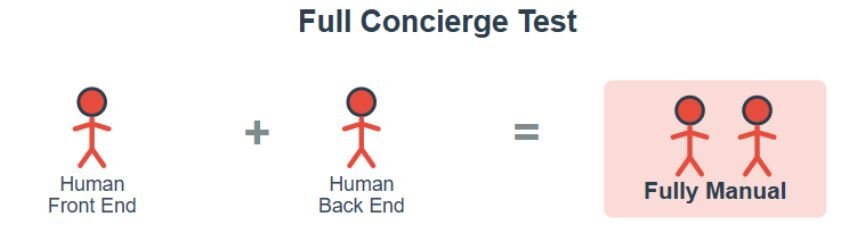

Full concierge: All human, no software

In most cases where I have used these approaches, there is some level of software already built (either front or back end). However, this does not need to be the case. The purest form of concierge testing involves no specially made or purchased software.

Let’s return to our example. Again, you are thinking of building a feature to help users find a provider. But let’s say you don’t have a third-party database. In fact, you don’t even know if your users want this feature at all. You want to validate that there’s any product-market fit within your current client base before going further.

Here, you start from the same type of interview as with the previously discussed Concierge test: you ask users what they are looking for and gather their preferences. But instead of running a SQL query, you whip out the phone book and start calling around. Or maybe you do some quick Google searches through local hospital websites. You give the results to the users and follow up with them, just like above. But in this case, you are looking for much broader feedback—not about specific data points and accuracy, but about whether the users are interested in this feature at all. Perhaps one of them goes along with the test and seems happy with the result, but the other isn’t interested because they only want personal recommendations from friends and family.

In other words:

- “I don’t have any software built, but I want to validate my concept” = Full Concierge.

This is not as realistic a scenario as the others, because a patient using a search tool to find a doctor is a well-established use case. However, if you are considering a more experimental idea (connecting brides with botanists to create new hybrid flowers for their weddings, for example), then it makes more sense to test out your product viability before building.

Wizard of Oz and Concierge are just two types of lean product experiments that help us learn early, whether it’s to validate a product idea before investing in a build out, or to keep testing features while blocked by the many moving pieces in a large organization. The important thing is to always be gathering data, testing assumptions, and moving your team forward. Whether you’re doing that from a hotel desk or behind a curtain is up to you.

Further reading:

- The founding of Zappos is the most well-known example of the Wizard of Oz test. This article tells the full story.

- This article explains the basics of a Concierge MVP and includes the example of Wealthfront’s personalized investment recommender.

- For my bookworms, O’Reilly’s The Lean Entrepreneur by Brant Cooper and Patrick Vlaskovits introduces these concepts and many others.