As AI becomes a standard part of more products, I’ve seen a recurring pattern: many of the hardest problems aren’t about building better models. They’re about helping users find the right content, at the right time, in a way that feels genuinely useful and trustworthy. These challenges—often grouped under “relevance”—show up repeatedly in real product decisions, but they’re not always discussed explicitly in traditional AI PM conversations. I spend most of my time thinking about how AI systems understand user intent, how content is represented, and how product decisions compound at scale.

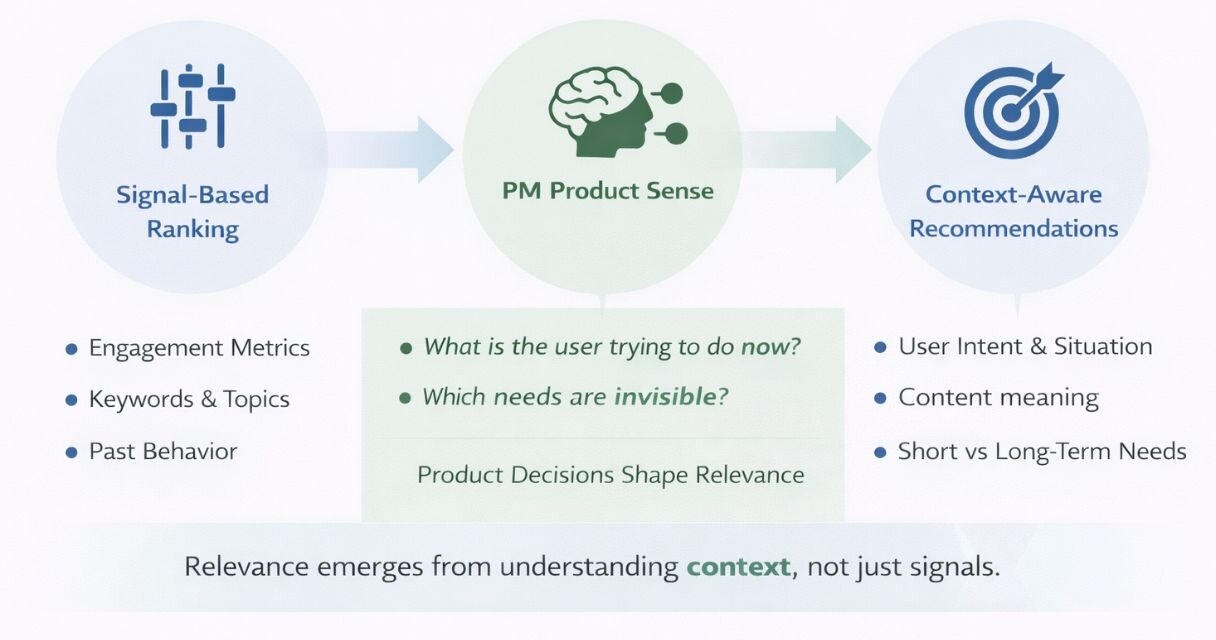

In this article, I’ll share my take on why relevance breaks even when models perform well, why ranking optimization eventually plateaus, and how product managers can think differently about designing systems that understand context—not just behavior. To ground these ideas, I introduce a simple framework drawn from real product work that explains how relevance systems evolve from signal-based ranking to context-aware recommendations, and where product sense plays a critical role.

1. Why relevance problems show up even when the models perform well

For a long time, traditional product management in AI-driven systems followed a familiar loop: define goals, improve ML models, ship ranking gains, and watch metrics move. In many cases, that approach worked.

Until it didn’t.

In several large-scale, ML-powered recommendation products I’ve worked on, we reached a point where models were objectively strong. Offline metrics looked healthy. Online experiments showed incremental lifts. And yet, user feedback became increasingly consistent: content discovery became repetitive, recommendations felt slightly off, and people struggled to find content that actually matched what they were looking for.

What made this difficult was that nothing was obviously broken. Engagement didn’t collapse. Dashboards looked fine. But over time, we saw patterns like:

- Users engaging intensely for a short period, then disengaging.

- High-quality content performing extremely well once users found it, but rarely being shown.

- Content producers creating valuable content that clearly met demand, yet failing to get distribution.

After many iterations we discovered that the issue wasn’t really model quality. It was relevance. More specifically, the system learned to represent content faster than it learned to understand user intent.

2. Why ranking optimization eventually stops helping

When relevance drops, the default reaction is optimization. Tune features. Add signals. Adjust weights. Improve recall. I’ve done this many times, and in the early stages of the relevance lifecycle it works. But at scale, ranking improvements hit diminishing returns quickly.

The reason is simple: ranking assumes the system already understands users and content reasonably well. In practice, that assumption breaks down. Users don’t have a single intent. Content doesn’t fit neatly into labels. And engagement signals often reflect habit or convenience rather than satisfaction.

I’ve seen systems where we improved ranking precision month over month, yet users still described the experience as “not quite right.” When we dug in, the problem wasn’t that the system was scoring content poorly. It was that it didn’t clearly understand what the user was trying to accomplish at that moment.

Traditional signals—clicks, dwell time, likes—are useful, but they’re proxies. At scale, they introduce blind spots:

- Users consume content they don’t actually value.

- Passive behavior overwhelms explicit feedback.

- Minority or long-term interests disappear into averages.

- New or niche content struggles to surface.

At that point, more optimization doesn’t fix the experience. Better understanding does.

Once we accepted that ranking wasn’t the constraint, the real question became whether the system could understand users’ context, not just behavior.

3. From signal-based ranking to context-aware recommendations

This is where recent advances in large language models (LLMs) start to matter in a practical way.

Instead of only asking, “What did the user click?”, we’ve now started asking:

- What is this content actually about?

- What kind of need does it serve?

- How does it relate to the user’s broader interests or current context?

That shift unlocked new ways to reason about product problems.

For example, on one of the surfaces I work on, we noticed a recurring pattern: certain posts consistently drove high completion, saves, and follow actions once users saw them, but impressions remained stubbornly low. We initially treated this as a ranking problem and experimented with boosting and feature tuning. The gains were short-lived.
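The pattern described above (strong engagement per impression, weak reach) can be surfaced with a simple check. The following is a minimal sketch, not the diagnostic we actually used: `PostStats`, `find_under_distributed`, the equal weighting of completions, saves, and follows, and the median-based thresholds are all illustrative assumptions.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class PostStats:
    """Hypothetical per-post counters; field names are assumptions."""
    post_id: str
    impressions: int
    completions: int
    saves: int
    follows: int

    @property
    def quality_rate(self) -> float:
        # Positive actions per impression; equal weighting is an arbitrary choice.
        if self.impressions == 0:
            return 0.0
        return (self.completions + self.saves + self.follows) / self.impressions


def find_under_distributed(posts: list[PostStats], min_impressions: int = 20) -> list[str]:
    """Return ids of posts with above-median quality but below-median reach."""
    # Ignore posts with too few impressions for the rate to mean anything.
    eligible = [p for p in posts if p.impressions >= min_impressions]
    if not eligible:
        return []
    median_quality = median(p.quality_rate for p in eligible)
    median_reach = median(p.impressions for p in eligible)
    return [
        p.post_id
        for p in eligible
        if p.quality_rate > median_quality and p.impressions < median_reach
    ]


posts = [
    PostStats("broad_hit", impressions=1000, completions=100, saves=10, follows=5),
    PostStats("hidden_gem", impressions=50, completions=30, saves=10, follows=5),
    PostStats("filler", impressions=2000, completions=100, saves=20, follows=10),
]
print(find_under_distributed(posts))  # ['hidden_gem']
```

A list like this only tells you where to look. It does not explain why the content is under-distributed, which is the question the rest of this section is about.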

When we stepped back, the issue became clearer. The system understood the topic of the content, but not the user situation it was most relevant for. The same content was being evaluated as broadly interesting, when in reality it was highly valuable only in specific contexts—early exploration, problem-solving moments, or after a related interaction. Once we reframed this as a representation gap rather than a ranking gap, we focused on improving how the system modeled user intent and context. Distribution improved naturally, without ongoing manual intervention.
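To make the difference between a ranking gap and a representation gap concrete, here is a small sketch of what it might look like for a score to depend on the user’s situation as well as the topic. Everything in it is hypothetical: the `Content` class, the context labels, the `context_fit` values, and the multiplicative form are assumptions chosen for illustration, not a description of our production system.

```python
from dataclasses import dataclass, field


@dataclass
class Content:
    """Hypothetical content record with a per-context usefulness estimate."""
    content_id: str
    topic: str
    # How useful the item is in each user situation, on a 0..1 scale (assumed).
    context_fit: dict[str, float] = field(default_factory=dict)


def topic_only_score(topic_affinity: dict[str, float], item: Content) -> float:
    """Treats the item as equally interesting in every situation."""
    return topic_affinity.get(item.topic, 0.0)


def context_aware_score(
    topic_affinity: dict[str, float],
    item: Content,
    context: str,
    default_fit: float = 0.1,
) -> float:
    """Scales topic affinity by how well the item fits the current situation."""
    fit = item.context_fit.get(context, default_fit)
    return topic_affinity.get(item.topic, 0.0) * fit


affinity = {"budgeting": 0.8}
guide = Content(
    "guide_1",
    "budgeting",
    context_fit={"problem_solving": 0.9, "early_exploration": 0.7},
)

# Same user, same item, different situations.
for context in ("problem_solving", "early_exploration", "casual_browsing"):
    print(context, round(context_aware_score(affinity, guide, context), 2))
```

The topic-only score returns the same number in every situation, so no amount of tuning can make it distinguish a problem-solving moment from casual browsing. The information has to enter through the representation.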

Relevance improvements often start from ambiguous feedback like, “Recommendations don’t get me.”

That statement isn’t actionable by itself. The PM’s job is to structure it:

- Is this a topic mismatch?

- A depth or expertise mismatch?

- A timing or context issue?

If a human can explain why something feels wrong, the system can often be taught to approximate that judgment, provided the explanation is structured well. LLMs help by making previously implicit meaning easier for systems to work with. The leverage still comes from the PM’s product sense.

4. The relevance PM mindset (and how PMs can apply it)

The best relevance-focused PMs don’t see themselves as feature owners or model tuners. They think about ecosystem value, and that mindset shows up very clearly in the kinds of decisions they make.

In my experience, relevance work is most effective when PMs step back from optimization and focus on what the system fundamentally understands about users and content.

For example, across several new product launches, we saw the same pattern: engagement looked healthy immediately after onboarding, but users gradually disengaged as the novelty effect wore off. Ranking improvements moved metrics slightly but didn’t change the underlying pattern.

What made the difference wasn’t another ranking tweak. It came from reframing the core user problem around intent. We realized the system treated early user signals as long-term preferences, causing it to overfit to short-term curiosity. By adjusting how we represented early intent versus sustained interest, discovery started to feel more aligned, and repeat engagement stabilized over time.
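One way to picture the distinction between early intent and sustained interest is to keep two separate profiles. The sketch below is an assumption-heavy illustration rather than the approach we shipped: the `interest_profiles` function, the day-indexed events, and the rule that a topic counts as sustained only after engagement on several distinct days are all hypothetical.

```python
from collections import defaultdict


def interest_profiles(
    events: list[tuple[int, str]],
    today: int,
    recent_window_days: int = 3,
    min_distinct_days: int = 3,
) -> tuple[dict[str, int], dict[str, int]]:
    """Split (day_index, topic) events into short-term and sustained profiles.

    short_term: interaction counts per topic within the recent window.
    sustained:  number of distinct active days per topic, kept only for
                topics the user returned to on at least min_distinct_days.
    """
    recent_counts: dict[str, int] = defaultdict(int)
    days_by_topic: dict[str, set[int]] = defaultdict(set)

    for day, topic in events:
        days_by_topic[topic].add(day)
        if 0 <= today - day < recent_window_days:
            recent_counts[topic] += 1

    short_term = dict(recent_counts)
    sustained = {
        topic: len(days)
        for topic, days in days_by_topic.items()
        if len(days) >= min_distinct_days
    }
    return short_term, sustained


# A burst of curiosity on day 0 versus a topic the user keeps returning to.
events = [(0, "crypto")] * 20 + [(0, "cooking"), (4, "cooking"), (9, "cooking"), (10, "cooking")]

short_term, sustained = interest_profiles(events, today=10)
print(short_term)  # {'cooking': 2}
print(sustained)   # {'cooking': 4}
```

Counting distinct days instead of raw interactions means twenty clicks in one session weigh no more than one in the sustained profile, which is the overfitting to short-term curiosity described above.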

Relevance PMing also shows up in how vague feedback is handled. I’ve seen teams struggle with broad complaints like “recommendations don’t feel useful.” In practice, the breakthrough often came from separating that feedback into distinct failure modes (depth mismatch, timing mismatch, or intent mismatch) rather than treating it as a single quality problem. Once those differences were explicit, the system could learn in more targeted ways.
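As a rough illustration of making those failure modes explicit, the sketch below tallies feedback that has already been tagged by a reviewer. The `FailureMode` enum mirrors the three categories named above; the tagging step, the sample comments, and the `failure_mode_shares` helper are hypothetical.

```python
from collections import Counter
from enum import Enum


class FailureMode(Enum):
    DEPTH_MISMATCH = "depth"
    TIMING_MISMATCH = "timing"
    INTENT_MISMATCH = "intent"


def failure_mode_shares(tagged: list[tuple[str, FailureMode]]) -> dict[FailureMode, float]:
    """Return the share of feedback items attributed to each failure mode."""
    counts = Counter(mode for _, mode in tagged)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {mode: counts[mode] / total for mode in FailureMode if counts[mode]}


# Invented examples; in practice the tags would come from a human review pass.
tagged_feedback = [
    ("Too basic for me", FailureMode.DEPTH_MISMATCH),
    ("Way over my head", FailureMode.DEPTH_MISMATCH),
    ("I needed this last week", FailureMode.TIMING_MISMATCH),
    ("I was looking for how-tos, not news", FailureMode.INTENT_MISMATCH),
]

for mode, share in failure_mode_shares(tagged_feedback).items():
    print(f"{mode.value}: {share:.0%}")
```

Even a tally this crude turns “recommendations don’t feel useful” into three separate questions, each of which can be investigated on its own.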

Across these experiences, the common thread was the same: progress came not from pushing the system harder, but from helping it understand the context and see more clearly.

Relevance PMs spend disproportionate amounts of time on:

- Framing the right problem before optimizing.

- Identifying which user needs are invisible or poorly represented.

- Examining where system assumptions break down.

Contrary to popular belief, none of this requires PMs to write model code. It requires product sense, user empathy, and judgment about what the system should care about, what it should ignore, and how it should learn.
