I'm an external design partner. I embed with product teams at companies like Deutsche Telekom and IQVIA. I have opinions about what needs fixing. I have zero authority to make anyone do anything. The irony of someone with no power writing about influence isn't lost on me.

If I want engineering to prioritize UX debt over features, I can't just say "the dashboard is confusing." I need to translate that into something they'll actually listen to. Like: "Users spend 4 minutes trying to find export. That's 200 wasted hours per month across your user base. Your competitors let them do it in one click."

Turns out the tactics that work for external consultants are the exact same tactics internal designers, researchers, and product ops people use when they need to influence product decisions but don't own the roadmap. Which is most of them. Unless you're a founder-PM. Then congratulations, you get to overrule yourself.

Translate design problems into product problems

PMs don't care that "the navigation is inconsistent." They care that feature adoption is 8% when it should be 40%.

Deutsche Telekom had an internal data hub. Multiple national companies used it. Adoption stuck at 25% even though it was mandatory. Teams were filing tickets asking for the data manually instead of using the tool. That meant IT was running manual queries, project decisions were delayed by days, and nobody trusted the platform they'd spent millions building.

I could have said "the interface violates cognitive load principles" or "users experience decision paralysis." True. Also useless.

Instead: "Your teams are avoiding this tool. That delays data-driven decisions by three days per project. That's costing you velocity across every company using this."

Rebuilt navigation. Adoption hit 68% in three months. Same tool. Different interface.

My native language is "this user flow is a disaster." That doesn't get things prioritized. What works: connecting UX issues to metrics PMs already track – activation, conversion, support volume, time-to-value.

Find the metrics they're already watching

Engineering tracks deployment frequency and bug rates. PMs track activation and trial-to-paid conversion. Everyone's already watching something. Most of them obsessively.

I don't invent new metrics to prove UX matters. I just connect UX problems to the numbers people check every Monday morning.

Example: A team had their export feature buried four clicks deep. Support answered "where's the export button" 15 times per day. 150 tickets per month. One button placement.

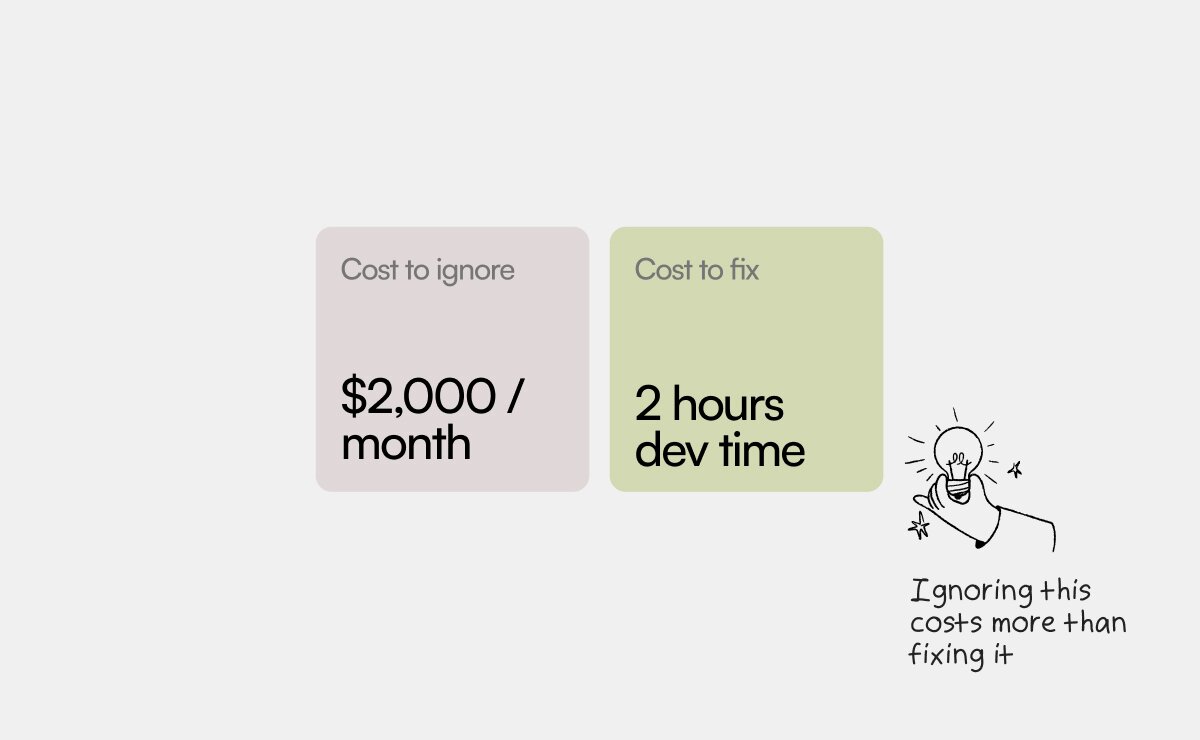

I didn't say "information architecture doesn't surface core functionality." I said "Your support team spends 50 hours per month explaining where one button is. That's $2,000 monthly. $24,000 per year."

Moved the button to the dashboard. Tickets dropped 92%. Support got 50 hours back. Same feature, different ZIP code.
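If you want to run the same math on your own tickets, here is a minimal sketch. It assumes roughly 20 minutes of support time per ticket and a loaded cost of $40 per hour. Neither number appears above; they are just the values that make 150 tickets come out to 50 hours and $2,000. The function name and parameters are made up for illustration, so swap in whatever your support team actually measures.

```python
def support_cost(tickets_per_month, minutes_per_ticket, hourly_cost):
    """Return (hours per month, cost per month, cost per year) for one recurring ticket type."""
    hours = tickets_per_month * minutes_per_ticket / 60
    monthly = hours * hourly_cost
    return hours, monthly, monthly * 12


# Assumed inputs: 20 minutes per ticket and $40/hour are illustrative, not measured.
hours, monthly, yearly = support_cost(
    tickets_per_month=150,
    minutes_per_ticket=20,
    hourly_cost=40,
)
print(f"{hours:.0f} hours/month, ${monthly:,.0f}/month, ${yearly:,.0f}/year")
# prints: 50 hours/month, $2,000/month, $24,000/year
```

The point is not precision. It's that the three inputs are numbers the support lead can confirm in five minutes, which makes the output hard to argue with.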

Make fixing it cheaper than ignoring it

"This is costing us activations" is abstract. "This costs $2,000 monthly in support time" is budget they're already spending.

When you can show what not fixing something costs – in actual money, not theoretical user frustration – priorities shift. Not always. Not immediately. But engineering stops saying "not this sprint" and starts asking "how long will this take?"

What you're really showing: support tickets you won't get, hours you won't waste, customers who won't leave. Then compare that to the hours required to fix it. If fixing it costs less than ignoring it, the decision makes itself.
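The comparison can be as simple as a payback calculation. The sketch below is one way to frame it, not a formula anyone handed me: the function name is hypothetical, and the one-day fix at $100 per engineering hour is an assumed input. Only the $2,000 monthly support cost comes from the example above.

```python
def payback_months(fix_hours, fix_hourly_cost, monthly_cost_of_ignoring):
    """Months until a one-off fix has paid for itself."""
    if monthly_cost_of_ignoring <= 0:
        raise ValueError("ignoring the problem must cost something for a payback to exist")
    return (fix_hours * fix_hourly_cost) / monthly_cost_of_ignoring


# Assumed inputs: an 8-hour fix at $100/hour, against $2,000/month of support time.
months = payback_months(fix_hours=8, fix_hourly_cost=100, monthly_cost_of_ignoring=2000)
print(f"Fix pays for itself in {months:.1f} months")
# prints: Fix pays for itself in 0.4 months
```

Anything that pays for itself in under a month is an easy conversation. Anything that takes a year is a harder one, and it's better to know that before the planning meeting.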

I worked with a SaaS product where password reset failed 22% of the time. Design issue: the success message looked identical to the error message. Just different text. Users couldn't tell if it worked. They assumed it didn't. Because of course they did.

Engineering said it was low priority. "Only affects 22% of resets."

I reframed it: "22% of people trying to log in can't. That's 300 users per month who might churn before they ever use the product. Fixing it is two hours of CSS."

Shipped next sprint.

Offer the smallest possible fix first

Never lead with "we need to redesign the entire dashboard." Lead with "let's move this one button and see if adoption improves."

Big proposals die in planning meetings. Small fixes ship while people are still arguing about the big ones.

I worked with a team whose dashboard had 47 different options across multiple tabs. Feature adoption was abysmal. I could have proposed a complete information architecture overhaul. Six weeks of work. Requires buy-in from five stakeholders. Gets scheduled for next quarter, maybe.

Instead: "Let's move your three most-used reports to the top of the dashboard. Should take a day. If that's what people actually need, we'll see it in usage data."

One day of work. Usage of those reports went from 11% to 34% in two weeks.

After that, the PM asked what else was broken. Small wins build trust. Trust builds influence. Also: nobody wants to commit to six weeks of work when they don't trust you yet.

Know when you've lost and what to do instead

Sometimes the answer is no. The PM has other priorities. Engineering is focused on shipping features. Leadership wants growth over polish.

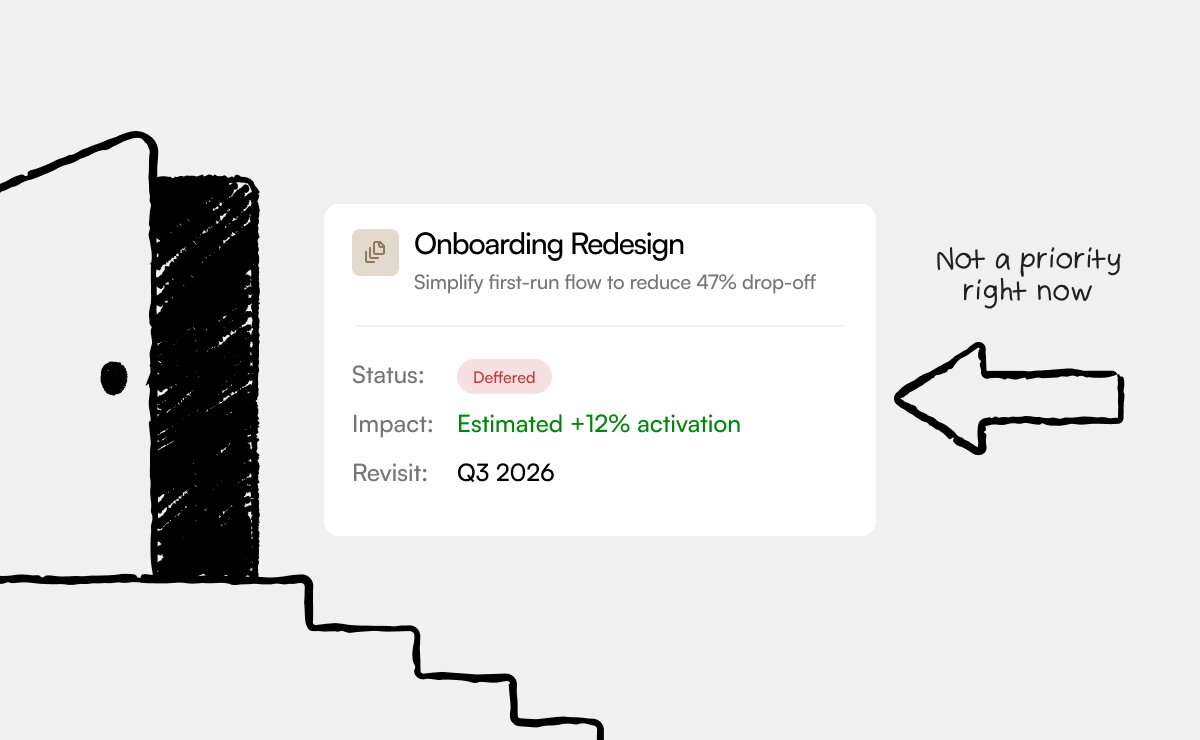

You can keep pushing. Or you can document your recommendation and move on.

I learned this the expensive way. Four years ago, I spent six weeks trying to convince a SaaS company that their onboarding was broken. Showed them the drop-off data. Explained the UX problems. Proposed solutions. They kept saying "let's revisit next quarter."

After six weeks, I realized: they weren't saying no because my argument wasn't good enough. They were saying no because fixing onboarding wasn't their priority. No amount of better arguments would change that. I was just annoying them.

Now when I hit resistance, I document the recommendation, explain the cost of not fixing it, and let it go. Sometimes they come back three months later when the problem gets worse. Sometimes they don't. Either way, I'm not spending six weeks pushing a boulder uphill.

Knowing when to stop is a skill. Staying professional when you disagree is an even more important one. I might not get this fix prioritized. But if I handle the disagreement well, I'll get the next one.

What doesn't work (and why we keep trying it anyway)

"Users are confused" – Too vague. Which users? Confused about what? How do you know?

"Best practices say..." – Nobody cares what best practices say. They care what their metrics say.

"I did a heuristic evaluation" – Sounds like homework. Sounds like theory. Doesn't sound like a business problem.

Design by committee – Four stakeholders trying to agree on button placement. Seventeen opinions. Three weeks of discussion. Zero decisions. I walked away from a $40K contract because of this. Some lessons are expensive.

These approaches work in design school. They work in design critiques. They work when you're talking to other designers. They don't work when you're trying to influence product decisions.

Influence without authority means speaking their language, not yours.

"The navigation violates Hick's Law" doesn't get UX debt prioritized. "Feature adoption is 8% because users can't find it" does. Same problem, different language. One sounds like you're talking to them. The other sounds like you're talking to yourself.