Every meaningful product improvement starts with a small but persistent frustration. In my case, it wasn’t a system outage or a missing feature—it was watching highly skilled developers spend hours doing the same work, again and again.

While working closely with enterprise customers on an integration platform—particularly in healthcare and retail—I began to notice a consistent pattern. These organizations were running hundreds of integrations across ERP systems, cloud applications, and external partners. The business context varied, but the technical foundations were strikingly similar.

Developers repeatedly followed the same steps: defining source and target data structures, creating mappings for familiar entities like customers, orders, and invoices, applying standard validations, and implementing well-known error-handling and retry logic. Despite this repetition, most integrations still started from scratch. Valuable engineering time was spent rebuilding foundations instead of delivering business-specific value.

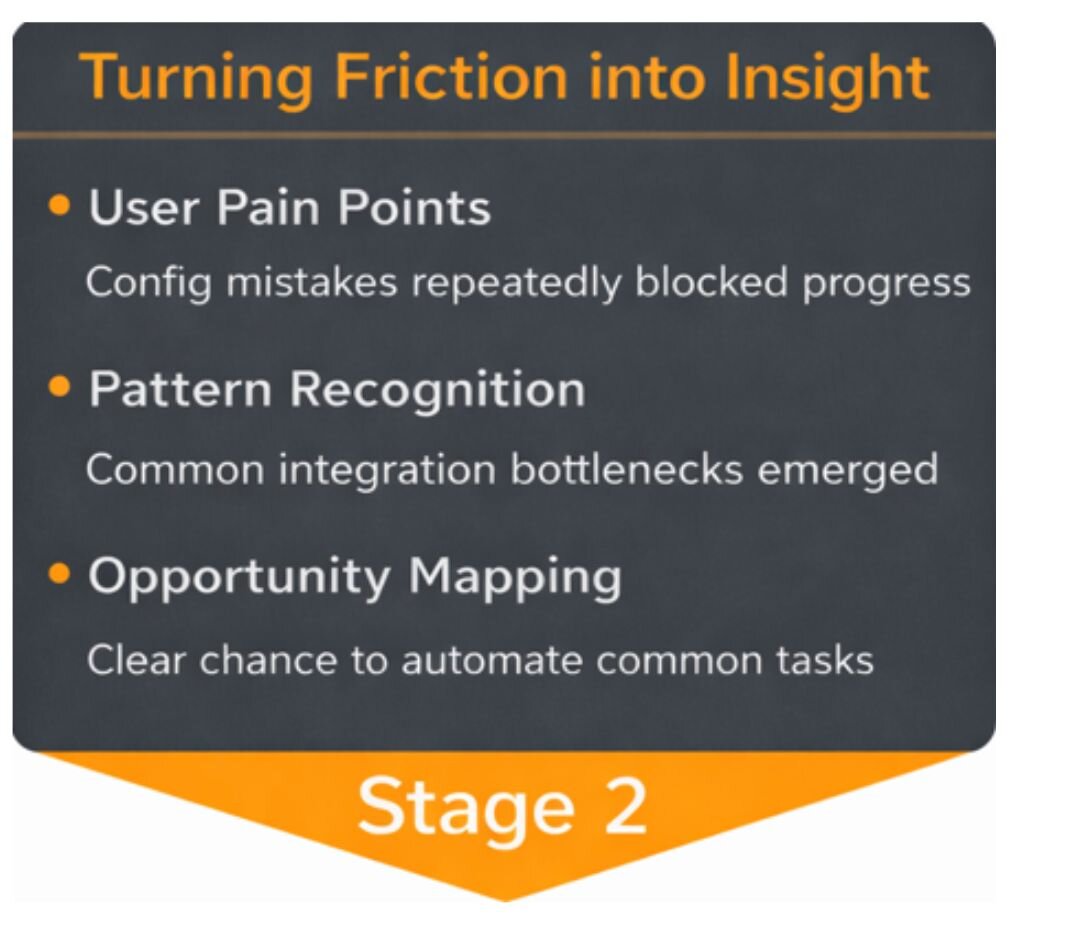

The Real-World Signal: Support Data and Customer Conversations

The first clear signal emerged from support trends. A meaningful share of recurring tickets—especially from newly formed teams—weren’t related to defects or outages. Instead, they pointed to setup issues: data connected incorrectly, flows not following intended patterns, and teams unintentionally drifting from recommended usage.

Customer conversations reinforced what the data suggested. One large healthcare organization explained that onboarding new integration developers routinely took weeks—not because the platform was difficult, but because teams had to recreate the same mappings and configurations for every new project. Senior engineers became bottlenecks, spending their time reviewing and correcting foundational setup work instead of focusing on architecture or innovation.

A national retail customer processing millions of transactions daily shared a similar story. Early project delays were rarely caused by complex business logic. Instead, timelines slipped due to configuration rework—fixing patterns that were already well understood but manually rebuilt each time.

At that point, it became clear this wasn’t a training or documentation gap. It was a signal that the product itself needed to do more of the heavy lifting.

Turning Friction into Insight

As these signals accumulated, the underlying issue came into focus. The problem wasn't unclear guidance. It was misplaced responsibility. Developers weren't struggling because they lacked knowledge; they were struggling because the platform expected too much manual setup before meaningful work could begin.

This reframed the product questions entirely. What if the platform could recognize what a developer was trying to build and automatically set up the basics? How much delivery time could be reclaimed by removing repetitive, low-value configuration work? And could common mistakes be prevented by guiding users upfront, rather than correcting them later through support?

To validate these questions, I moved beyond anecdotal feedback. I analyzed platform telemetry across more than 2,000 customers, reviewed support ticket trends, and spoke directly with teams about their day-to-day workflows. The patterns were consistent. Over half of new integrations began as copies of existing ones, with developers reusing prior mappings and configurations simply to avoid starting from a blank canvas. Several teams estimated that 60–70% of an integration’s initial structure followed the same pattern every time, regardless of the business scenario.

“When experienced developers consistently repeat the same steps across projects, it’s rarely a user problem. It’s a sign the product itself should take on more responsibility.”

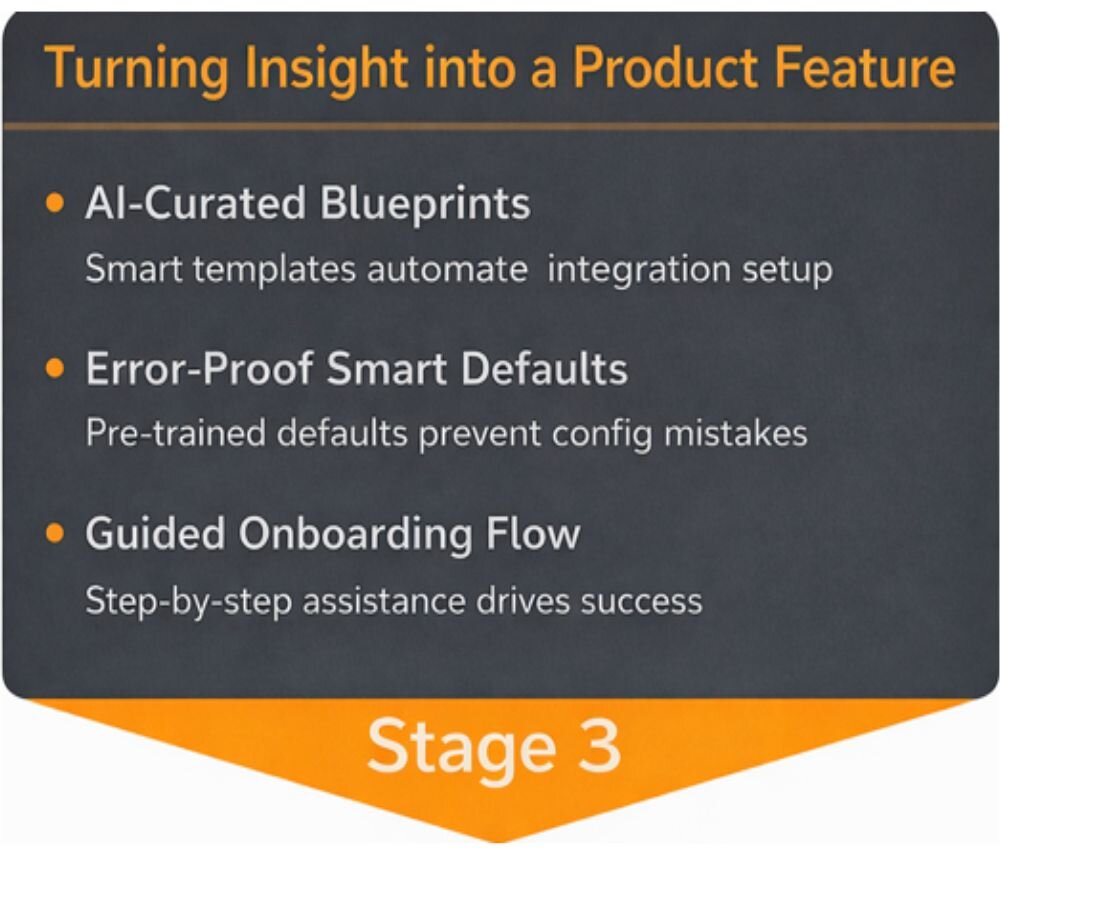

Turning Insight into a Product Feature

At the same time, AI capabilities—particularly around contextual understanding and code generation—had matured enough to fit naturally into real developer workflows. This wasn’t about replacing developers; it was about removing repetitive, low-value work that slowed them down.

I worked closely with engineering and architecture teams to assess where AI could add value without increasing complexity or risk. Together, we reviewed common integration patterns and identified the moments where developers consistently paused—searching documentation, copying from previous projects, or requesting reviews on basic setup decisions.

Rather than applying AI broadly, we focused on these predictable, high-friction moments. Through early design discussions and lightweight prototypes, we tested whether the platform could infer developer intent from a small set of inputs and generate a reliable starting point.

This led to a clear product direction: an AI Recommendation that understands what a developer is trying to build and automatically generates integration scaffolding, mappings, and configuration guidance based on proven patterns—while keeping developers in control of the final outcome.

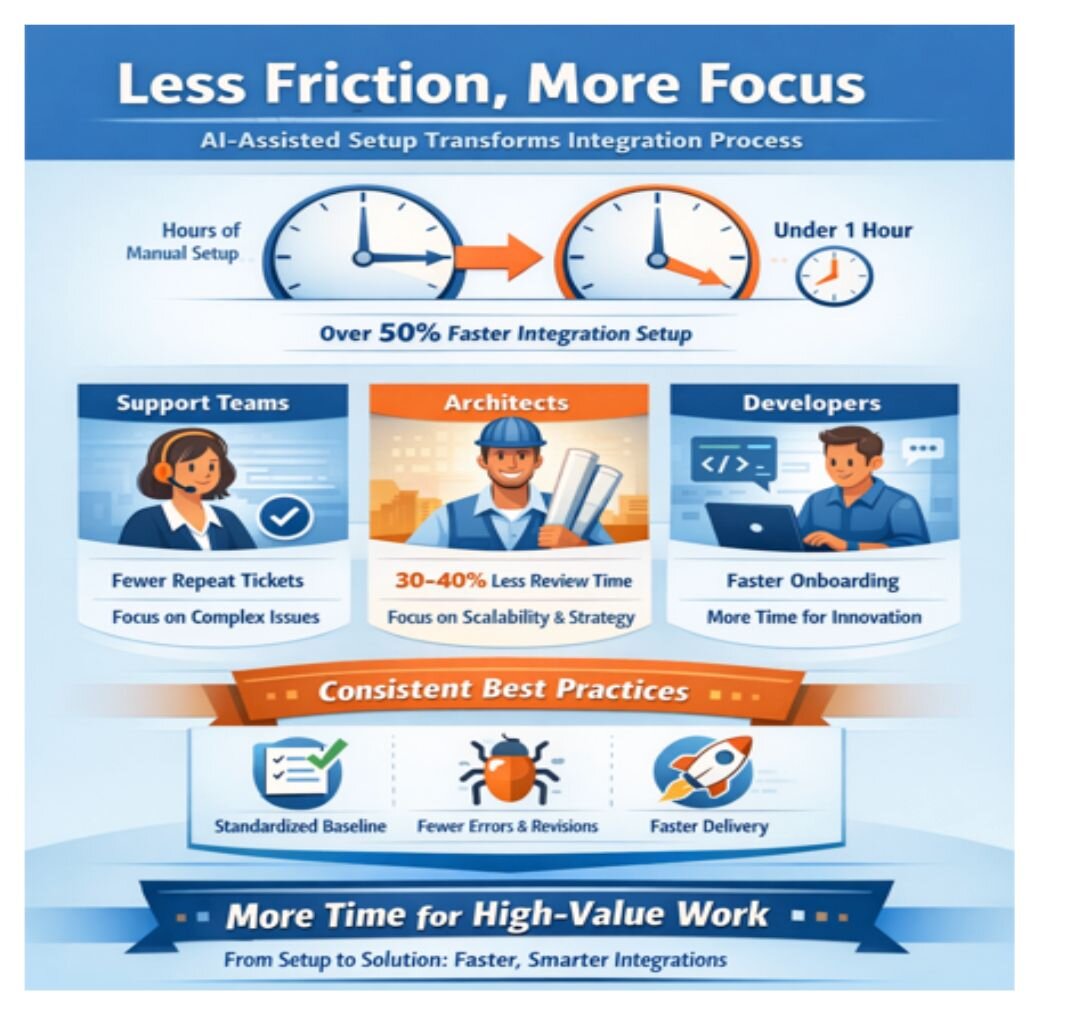

Outcome: Less Friction, More Focus

Early customer feedback validated the approach. One enterprise healthcare customer reported that the time required to bring a new integration to a working baseline dropped by more than half after introducing AI-assisted setup. What previously took several hours of manual configuration could now be completed in under an hour, with far fewer early revisions.

The impact extended beyond development teams. Support organizations observed a 20% reduction in repeat tickets related to basic setup issues during new project kickoffs and developer onboarding. Instead of repeatedly troubleshooting the same configuration problems, support engineers were able to focus on more complex, customer-specific scenarios.

From a senior architect’s perspective, the change was equally significant. Early design reviews became shorter and more focused, with architects estimating a 30–40% reduction in time spent on foundational corrections. Because new integrations consistently started from a standardized, best-practice baseline, there was less rework during testing and fewer late-stage surprises. Architects were able to focus on higher-value concerns such as scalability, resilience, and long-term maintainability.

Teams also reported smoother onboarding. New developers reached productivity faster because best practices were embedded directly into the starting point rather than learned through trial and error. This improved consistency across projects and reduced reliance on senior engineers as gatekeepers for basic design decisions.

Most importantly, developers redirected their time toward higher-value work. With less effort spent on initial setup, teams focused more on business-specific logic and innovation—delivering integrations faster without sacrificing quality or operational confidence.

What I Learned as a Product Manager

This journey, from observing repetitive developer workflows to introducing AI in a way that earned trust, wasn't driven by a single insight but by a series of product decisions grounded in real usage and feedback. The lessons below reflect how those decisions shaped both the product and my approach as a Product Manager.

Repetition is a signal

When users repeatedly perform the same work across different contexts, it's rarely a user problem; it's product feedback. In our case, repeated manual setup steps signaled unnecessary friction. Treating repetition as a design smell helped us identify where the product itself should take on more responsibility.

Support data is product discovery gold

Some of the most valuable insights came not from roadmap discussions, but from support tickets. These surfaced moments of confusion, hesitation, and error—often before users could articulate them as feature requests. Reviewing support data systematically helped us prioritize improvements that delivered immediate value.

AI works best when it removes friction

The goal was never to showcase AI for its own sake. Success meant helping developers move faster, make fewer mistakes, and feel more confident. By focusing on reducing friction rather than on showcasing intelligence, we made AI a natural extension of the product instead of a distraction.

AI should recommend, not decide

Trust mattered more than sophistication. Positioning AI as a starting point, recommending rather than prescribing, led to higher adoption and engagement. Developers were more willing to use AI when they remained in control, which ultimately improved both outcomes and trust.

Start where confidence is highest

We intentionally applied AI first to well-understood, repeatable problems. This allowed us to deliver value quickly, establish reliability, and build credibility before expanding into more complex workflows.

Final Takeaway

AI doesn’t change the fundamentals of Product Management—it reinforces them. The most effective AI-powered products are built by listening closely to user signals, respecting trust, and focusing relentlessly on outcomes over features.