Introduction

Many people know they should be doing experiments and testing their product, but when starting out it can be daunting to work out where to begin. So, below is my handy guide to experimentation, built from scars received at the coal face of experimenting.

This post will take you on a bird’s-eye tour of the experimentation cycle, with the aim of helping you get started and turn your experimentation into a process, something that becomes a normal and natural part of your product management.

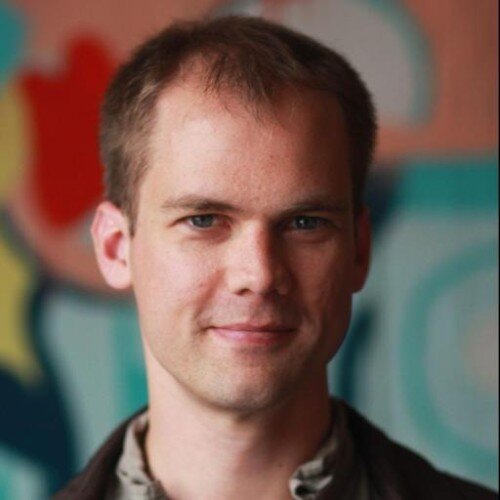

The experimentation cycle consists of the following steps:

- Plan

- Implement

- Monitor

- Act

Closing the loop means the experiment results feed back into a) the planning of experiments, b) the product backlog or c) development prioritisation. This is similar in many ways to the OODA loop.

Experiments are done to make your product better. If the results of your experiments are not leading to action, either new experiments to run or changes to your product development, then something is wrong with either your process or the experiments you are running. Remember, knowing what not to do is also making your product better.

In this article, I’ll begin with planning and designing experiments, move on to implementing experiments along with common tools and techniques, and follow by touching on running and monitoring experiments. To close out, we’ll look at actioning the results of experiments (restarting the cycle).

Planning experiments

It is tempting to jump into running an experiment without doing any planning. This approach can work but is likely to make it more difficult for you to fully benefit from experimentation and implementing experimentation as a process.

Start with a question

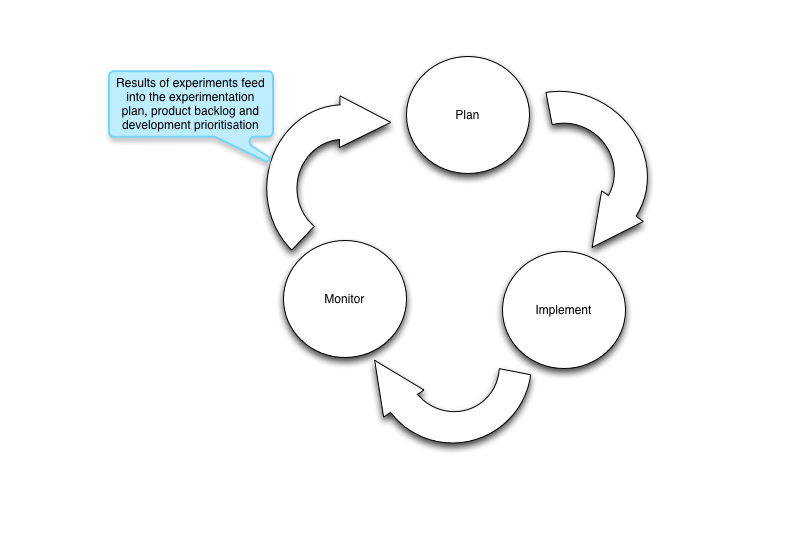

A good starting point for experiments is to ask a question and then come up with several hypotheses that could answer it. Once you have these hypotheses in place you can design the experiments that either prove or disprove each hypothesis.

Let us consider an example. Conversion rate is very important for the company and driving that higher is a key objective. So the question becomes:

“Why isn’t the conversion rate on the landing page 30%?”

With the question in mind, let us now create several hypotheses as a starting point for testing:

- The call-to-action should be a red button

- The message is not clear about the value of registering

- There are too many different call-to-actions on the page

Unfortunately, these aren’t very well written hypotheses. Let’s bring some rigour to how we specify hypotheses so we fully understand what is going on. The traditional way to structure a hypothesis statement is to use an “if”, “then” approach, for example:

“if I water the plants then the plants will grow”

or structured slightly differently

“if I don’t water the plants then the plants will not grow”

So if we restate the above hypotheses in an if/then format they become:

- if the call-to-action button is red then the number of people registering will go up

- if we change the copy explaining the value of registering then the number of people registering will go up

- if we remove all but one call-to-action on the page then the number of people registering will go up

Now each hypothesis states the independent variable (the bit after the if) and the dependent variable (the bit after the then). These hypotheses can be extended with a “because” clause which sets down why you think the causation exists. For example:

- if the call-to-action button is red then the number of people registering will go up because the red button stands out on the page

- if we change the copy explaining the value of registering then the number of people registering will go up because they will understand the value they get

- if we remove all but one call-to-action on the page then the number of people registering will go up because they are not distracted by multiple call-to-actions

A good hypothesis is one that is testable with an independent variable that can be controlled and a dependent variable that can be measured. It clearly explains what is going to change and what the expected effect of the change is going to be.

A practical test of this: can someone else read the hypothesis and explain back to you what change is going to be made and what the expected effect of the change will be? If they can’t, then you need to revisit the hypothesis. You want to be able to hand your hypothesis to someone who can then design the experiments necessary to test it.
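One way to keep yourself honest about the if/then/because structure is to write hypotheses down in a fixed shape. The following is only a minimal sketch in Python; the `Hypothesis` class and its field names are my own illustration, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """One testable hypothesis in if/then/because form (illustrative only)."""

    change: str       # independent variable: what we will change (the "if")
    expected: str     # dependent variable: what we expect to move (the "then")
    reason: str = ""  # optional "because" clause

    def statement(self) -> str:
        """Render the hypothesis as a single if/then(/because) sentence."""
        text = f"if {self.change} then {self.expected}"
        if self.reason:
            text += f" because {self.reason}"
        return text


red_button = Hypothesis(
    change="the call-to-action button is red",
    expected="the number of people registering will go up",
    reason="the red button stands out on the page",
)
print(red_button.statement())
```

If you struggle to fill in `change` or `expected` for a hypothesis, that is usually a sign it is not yet testable.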

From hypothesis to experiment

Once you’ve got the hypothesis in place an experiment needs to be created that will test the hypothesis. An experiment will need to allow for the controlled changing of the independent variable (the bit after if) and measure the change (if any) of the dependent variable (the bit after the then).

If you have described your hypothesis well, the experiment to test it will be obvious from the hypothesis. For example, let us design the experiment for the hypothesis:

if the call-to-action button is red then the number of registrations will go up

The experiment for this hypothesis is a template where the call-to-action button is red, but that is not the full experiment. You can’t be sure that any change in the dependent variable was truly down to changes to the independent variable. The dependent variable changes could have been caused by another independent variable. To ensure you produce a valid result you also need what is often called a control.

The experiment is therefore making controlled changes to the independent variable, measuring the dependent variable and then comparing the results against the control.

So for our example of the red button hypothesis, the experiment would consist of dividing website traffic between the already-existing page template (the control) and an identical page template with a red button (the variant), measuring the number of users who registered, and comparing the number of users who registered from the control and the variant. To account for differences in traffic to each template you should compare the conversion rate (number of registered users divided by the number of unique visitors) as opposed to the absolute registration count.
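The comparison described above is simple arithmetic, sketched here in Python. The visitor and registration counts are made up purely for illustration, and the function names are my own.

```python
def conversion_rate(registrations: int, unique_visitors: int) -> float:
    """Registered users divided by unique visitors."""
    if unique_visitors <= 0:
        raise ValueError("unique_visitors must be positive")
    return registrations / unique_visitors


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Change of the variant relative to the control (0.2 means +20%)."""
    return (variant_rate - control_rate) / control_rate


# Made-up numbers: the two templates received different amounts of traffic,
# so comparing raw registration counts (120 vs 150) would be misleading.
control = conversion_rate(registrations=120, unique_visitors=2400)
variant = conversion_rate(registrations=150, unique_visitors=2500)

print(f"control: {control:.1%}")
print(f"variant: {variant:.1%}")
print(f"relative lift: {relative_lift(control, variant):.0%}")
```

A difference in conversion rate on its own does not tell you whether the result is statistically valid; that depends on how much traffic took part in the experiment.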

You are unlikely to have unlimited resources and time to test every possible hypothesis. To help prioritise the hypotheses to test, focus on the hypotheses where the because clause is strongest based on your research and experience.

One test of one hypothesis is not going to answer the question alone. You'll need to come up with multiple different hypotheses as the actual answer may not be obvious. Hence the need for a plan of what experiments you are going to run and what you will do with a positive or negative result. The more time you spend examining and defining the problem (the question and hypotheses) the better your experimentation process will be and the greater the value you will achieve.

Final note on the question: focus on the questions that are tied directly to business value or KPIs. Some experiments can be attractive to run because they are easy or fun to do, but the more disciplined you are about which questions you experiment on, the more value you will gain from the experimentation cycle.

Build it into the company

Engage the rest of the company in the experimenting. This helps the rest of the company focus on what the end user is doing or valuing. Engaging the whole company in the process of experimenting instils data-focused decision making across the board and helps with the HiPPO (highest paid person’s opinion) problem. Changing development priorities based on the results of experiments becomes accepted practice.

Another great benefit of involving the whole company in experimenting is that it helps overcome the ego effects of having their work tested. Having your work challenged through testing is often confrontational for people and creates some resistance or disenchantment. However, having people create hypotheses and plan the experiments from the beginning helps to change the perception of what is going on and the value behind it.

Implementing experiments

When looking at how the experiments are going to be implemented, consider that you don’t want to be tied to the engineering release cycle and resourcing. Limiting how coupled you are to engineering priorities gives you the flexibility to implement and monitor experiments on a schedule that produces the best outcomes.

As you implement the experiments, record the details of each experiment (name, where, what it is testing, variants), the start and end dates, and the eventual result in an experimental log. This serves several purposes:

- It helps you keep track of what is going on

- You have a historical record of tests run, the results and actions taken on the result

- It serves as a reporting tool for the rest of the company

When first starting out this recording may seem excessive but as experimentation becomes a routine process, the number of on-going and historical experiments will grow rapidly making it difficult to keep everything in order.
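The log does not need to be anything fancy; a spreadsheet is enough. As a sketch of the fields described above, here is one possible shape in Python that writes the log out as CSV. The class name, field names and the example entry (including its dates) are all my own illustration.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date
from typing import List, Optional


@dataclass
class ExperimentLogEntry:
    """One row of the experimental log (field names are illustrative)."""

    name: str
    where: str                  # page or flow under test
    hypothesis: str             # what the experiment is testing
    variants: str
    start: date
    end: Optional[date] = None  # empty while the experiment is running
    result: str = ""
    action: str = ""            # what was done with the result


def to_csv(entries: List[ExperimentLogEntry]) -> str:
    """Serialise the log so it can be opened in any spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(ExperimentLogEntry)]
    )
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


log = [
    ExperimentLogEntry(
        name="Red CTA button",
        where="landing page",
        hypothesis="if the call-to-action button is red then the number "
                   "of people registering will go up",
        variants="control; red button",
        start=date(2013, 1, 7),  # made-up date
    )
]
print(to_csv(log))
```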

How to implement the experiment?

There are two basic types of testing: A/B and multivariate. A/B testing compares one or more variants against a control (usually the current implementation) to prove or disprove the hypothesis. Multivariate testing compares combinations of changes to find which combination proves or disproves the hypothesis.

An A/B test would compare the current call-to-action with the red button from the first hypothesis. A multivariate test would test which combination of red button and copy changes proves or disproves the hypothesis. Multivariate testing can be thought of as multiple A/B tests all run on the same page at the same time.

Choosing between the two depends on:

- Your traffic

- Time available for testing

- Whether you are optimising or looking for the big leap

Multivariate testing requires more time and traffic to produce a statistically valid result and is often best focused on optimising around a maximum. The simpler A/B testing is better suited to finding a better maximum and to situations where traffic and time are constrained. An A/B test will reach a statistically valid result sooner than a multivariate test.
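To see why multivariate tests need more traffic, count the combinations. The sketch below is a rough illustration in Python: it assumes traffic is split evenly across every combination, which real tools may not do, and the daily traffic figure is made up.

```python
from itertools import product


def traffic_per_combination(daily_visitors, *factors):
    """Split daily traffic evenly across every combination of changes.

    Each factor is a list of options, e.g. ["current button", "red button"].
    An even split is an assumption made for this illustration.
    """
    combinations = list(product(*factors))
    share = daily_visitors / len(combinations)
    return {combination: share for combination in combinations}


DAILY_VISITORS = 1000  # made-up traffic level

# A/B test: one change, two versions of the page.
ab_test = traffic_per_combination(
    DAILY_VISITORS, ["current button", "red button"]
)

# Multivariate test: three changes at once, eight versions of the page.
multivariate_test = traffic_per_combination(
    DAILY_VISITORS,
    ["current button", "red button"],
    ["current copy", "new copy"],
    ["many calls-to-action", "one call-to-action"],
)

print(len(ab_test), "versions,", DAILY_VISITORS / len(ab_test), "visitors each")
print(len(multivariate_test), "versions,",
      DAILY_VISITORS / len(multivariate_test), "visitors each")
```

With the same traffic, each version in the multivariate test sees a quarter of the visitors that each version in the A/B test does, so it takes correspondingly longer to gather enough data.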

Designing variants

Your variants will be driven by your hypotheses. The narrower and more specific your hypotheses are, the greater the likelihood that you are optimising around a local maximum.

The local maximum problem

Optimising around a local maximum is a problem because you never produce the big improvements. Instead you hover, getting small improvements for a lot of effort. An analogy might help to explain. Consider two hills, a small one and a large one. You want to climb a hill to see the plain. If you keep your eyes on the ground and you are near the small hill, that is the one you climb, and no matter how much you walk around you aren’t going to get higher. However, if you look up then you see the large hill and so can now get higher.

To avoid the local maximum issue, come up with variants that are widely different. This can extend to completely different layouts, styles and designs for the variants. You are attempting to test solutions that are as far apart in the problem space as possible in an attempt to see the bigger hill.

It is very, very easy to experiment with small changes. It is safe and it is easier to convince HiPPOs. However, you run the very real risk of optimising around a local maximum. You can drive 1 or 2% improvements but nothing more. Take homepages as an example: instead of testing different copy, test completely different layouts of buttons, copy and styles. These should be distinctly different layouts.

Real-life example

In a drive to increase the conversion rate at PeerIndex we did a series of experiments. The first set of experiments focused on moving buttons around on the page. This produced little improvement in the conversion rate.

Next we did experiments on very different layouts, which resulted in a 200% improvement in the conversion rate. The experiments showed that the original assumption for the landing page, that we needed to explain a lot about PeerIndex to get people to convert, was wrong. By removing much of the information and keeping the pages simple we made the decision to sign up far easier. You can see the starting landing page in Figure 3 and the end result of the experiments in Figure 4.

Practicalities

Build vs buy

The perennial question: build or buy? You can of course have the engineering team create an A/B testing framework or you can use one of the SaaS tools available. As a product manager I lean towards the buy side as it reduces the impost on the engineering team both up-front and down the track as they don’t have to maintain the in-house system. Additionally, I can run tests outside of the engineering release schedule.

Even with a SaaS tool, you will need to get some engineering support to integrate the tool and also to set up your app to allow control by the tool. The extent of integration and engineering effort required depends on the service used but often involves including a JS file in the header of the site or app. Some tools (such as Google Website Optimizer – now part of GA) require you to tag the parts of the templates you are experimenting on while others let you use a WYSIWYG editor in the browser.

If you are testing quite different templates with potentially different dynamic data then you’ll need to create the templates and select the template when the page loads. With an in-house system you can have the template selection mechanism within the controller. Using SaaS tools, the most effective approach I’ve found is to use the split URL function and have the application select the appropriate template based on URL parameters. Split URL works by directing traffic to two or more different URLs. The difference could be a URL parameter (e.g. ?reg_flow=1) or could be a completely different URL (e.g. http://www.example.com/page_1 versus http://www.example.com/page_2).

URLs

URL 1 = http://www.example.com/index?test=1

URL 2 = http://www.example.com/index?test=2

Controller

…..

IF URL_PARAMETER('test') == 1 THEN

//do something, e.g. render the template for URL 1

ELSE

//do something else, e.g. render the template for URL 2

ENDIF

The same approach can be used to run experiments on different registration flows and on the behaviour of different types of functionality. Implementing split URL tests does require engineering support, so it is best to map out the tests you intend to run so the modifications can be scheduled for engineering delivery.
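For a more concrete picture of the controller logic, here is a minimal sketch in Python using only the standard library. It assumes the `test` URL parameter from the example URLs above; the template file names and the choice to fall back to the control are hypothetical, and a real application would do this inside its web framework's controller.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical template names, keyed by the value of the "test" parameter.
TEMPLATES = {
    "1": "landing_control.html",
    "2": "landing_variant.html",
}
DEFAULT_VARIANT = "1"  # assumption: unknown or missing values get the control


def select_template(url: str) -> str:
    """Pick the template to render from the 'test' URL parameter."""
    query = parse_qs(urlparse(url).query)
    variant = query.get("test", [DEFAULT_VARIANT])[0]
    return TEMPLATES.get(variant, TEMPLATES[DEFAULT_VARIANT])


print(select_template("http://www.example.com/index?test=1"))
print(select_template("http://www.example.com/index?test=2"))
print(select_template("http://www.example.com/index"))
```

Falling back to the control for anything unexpected means a mistyped or stale link never shows visitors a broken page.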

The challenge with using split URL tests is being able to fire the right goal. If the goal is a pageview, it is straightforward. It gets trickier when the goal is an action, e.g. successful completion of a tweet, sending an email or submitting a form. Some of the tools capture these actions out-of-the-box or provide a “custom” goal method that you can set up to fire on successful goal completion.

Choosing a SaaS tool

There are a variety of SaaS tools available with 3 notable ones being:

- Google Website Optimizer (now integrated into Google Analytics)

- Visual Website Optimizer from Wingify

- Optimizely

I’ve used all three and all three get the job done. Here are some quick notes on each.

Google Website Optimizer

I found Google Website Optimizer underpowered for the type of experimentation I was doing. It required a lot of manual tagging of the templates in order to run each separate test and was impossible to use to test functionality.

Optimizely

Optimizely includes a WYSIWYG editor (which got indigestion with large web pages). Unfortunately, I found the navigation around the experimental results, editor and dashboard to be confusing, resulting in a lot of experiment re-dos and a lot of time spent working out which aspect of the service I was looking at.

Visual Website Optimizer

I ended up using Visual Website Optimizer as my primary tool for experimenting. It provided me with the tools to support the experiments I was doing, had a straightforward experiment creation process, and its UI presented the results in a manner that I found clear and easy to navigate.

Test well

It is easy to try shortcuts when experimenting. Unfortunately, shortcuts can easily invalidate the results if you’re careless, making it problematic to draw conclusions from any results you get. Make sure you follow the scientific method.

One common shortcut is to continually change the control. For experiments to avoid observational error, the control needs to stay the same throughout the experiment.

The other major issue is transient traffic, for example from PR. PR (say, being featured on Slashdot) drives a significant amount of transient traffic that may or may not be your target traffic. Your experiments then become overwhelmed by the behaviour of the transient traffic instead of your target traffic, leaving you optimising for traffic that disappears as quickly as it came. When dealing with transient traffic, it is best to ignore the period during which it occurred and only use the results from either side of it.
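If you can export daily figures from your testing tool, ignoring the transient period is a simple filter. The sketch below uses made-up daily numbers for a single variant, with a one-day spike in the middle; the row format and function names are my own assumptions.

```python
from datetime import date


def exclude_period(daily_rows, spike_start, spike_end):
    """Drop the days (inclusive) on which transient traffic arrived."""
    return [
        row for row in daily_rows
        if not (spike_start <= row["day"] <= spike_end)
    ]


def pooled_conversion_rate(rows):
    """Total registrations divided by total visitors over the given days."""
    visitors = sum(row["visitors"] for row in rows)
    registrations = sum(row["registrations"] for row in rows)
    return registrations / visitors


# Made-up daily results: a PR spike on 3 June brings many visitors who
# convert at a much lower rate than the usual traffic.
daily = [
    {"day": date(2013, 6, 1), "visitors": 500, "registrations": 25},
    {"day": date(2013, 6, 2), "visitors": 500, "registrations": 25},
    {"day": date(2013, 6, 3), "visitors": 5000, "registrations": 50},
    {"day": date(2013, 6, 4), "visitors": 500, "registrations": 25},
    {"day": date(2013, 6, 5), "visitors": 500, "registrations": 25},
]

with_spike = pooled_conversion_rate(daily)
without_spike = pooled_conversion_rate(
    exclude_period(daily, date(2013, 6, 3), date(2013, 6, 3))
)
print(f"including spike: {with_spike:.1%}")
print(f"excluding spike: {without_spike:.1%}")
```

The same exclusion has to be applied to the control and to every variant, otherwise you are no longer comparing like with like.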

Segmentation is very important

Learn to love segmentation because segmentation allows you to understand and optimise for different users. There is no point in getting a 30% conversion rate in the wrong market which masks the fact you've only got a 5% conversion rate in your target market.

As an example of what segmentation can provide, I've run tests segmenting conversion by country of origin. These showed that the conversion rate in our target markets was lower than the overall conversion rate: the considerably higher conversion rate in other markets was masking it. We are now planning tests aimed specifically at getting the target market conversion rate much higher. If the segmentation hadn't been done, we'd never have known this.

Segmentation can be done on a variety of features such as:
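The masking effect is easy to reproduce with a few lines of Python. The visit records below are entirely made up (the country labels are placeholders, not real results), and the function is only a sketch of what a testing tool's segmentation report does for you.

```python
from collections import defaultdict


def conversion_by_segment(visits, key):
    """Conversion rate per value of `key` (e.g. per country)."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [visitors, conversions]
    for visit in visits:
        bucket = totals[visit[key]]
        bucket[0] += 1
        bucket[1] += int(visit["converted"])
    return {
        segment: conversions / visitors
        for segment, (visitors, conversions) in totals.items()
    }


# Made-up data: 100 visits from the target market with 5 conversions,
# and 100 visits from another market with 30 conversions.
visits = (
    [{"country": "target market", "converted": i < 5} for i in range(100)]
    + [{"country": "other market", "converted": i < 30} for i in range(100)]
)

overall = sum(v["converted"] for v in visits) / len(visits)
by_country = conversion_by_segment(visits, "country")

print(f"overall: {overall:.1%}")
for country, rate in by_country.items():
    print(f"{country}: {rate:.1%}")
```

The overall figure sits well above the target market's rate, which is exactly the situation you would miss without segmenting.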

- Browser

- Country

- URL parameters (utm codes)

- Time of day

- Day of week

- Visitor type (new versus returning)

- Search keywords

- Mobile device

- OS

Running and monitoring experiments

You’ve got your test plan in place and you’ve implemented the tests; now it is on to running the experiments.

Experiments take time

Running the tests will take time even if you’ve got good levels of traffic. The primary reason is the need to achieve statistical validity. For a test to be statistically valid you need to run it long enough for enough people to participate in the experiment. How much is enough? Without going into the math involved, you can get an idea of how long you’ll need to run an experiment based on traffic levels and complexity of the test using this calculator provided by VisualWebsiteOptimizer.

Also affecting the statistical validity of the results is traffic behaviour. Even if you have enough traffic to get valid results in a single day, is your traffic on that day the same as on other days? Was it affected by a marketing push or PR event? You have to take these into consideration when deciding how long to run the test. I prefer to run a test for a minimum of a week so that the experiment runs across the different types of traffic that come to a site on different days of the week and at different times of day. PR or marketing pushes may require the test to run longer to allow time for the traffic to return to normal.
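If you are curious what such a duration estimate involves, the sketch below uses a standard textbook approximation for comparing two conversion rates. It is not the method used by the calculator mentioned above, which I have not inspected. The 5% significance level, 80% power, the example rates and the traffic figures are all assumptions chosen for illustration; the one-week floor reflects my own preference described above.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline, expected, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant to detect a change from
    `baseline` to `expected` conversion rate (two-sided test, normal
    approximation for two proportions)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    return ceil((z_alpha + z_power) ** 2 * variance / (expected - baseline) ** 2)


def days_to_run(per_variant, variants, daily_visitors, minimum_days=7):
    """Days needed at the given traffic level, never less than a week."""
    needed = ceil(per_variant * variants / daily_visitors)
    return max(needed, minimum_days)


# Made-up scenario: 5% conversion today, hoping to detect a rise to 6%,
# with a control plus one variant.
needed = visitors_per_variant(baseline=0.05, expected=0.06)
print("visitors per variant:", needed)
print("days at 1,000 visitors/day:", days_to_run(needed, 2, 1000))
print("days at 10,000 visitors/day:", days_to_run(needed, 2, 10000))
```

Note how the high-traffic site still runs for a full week: it has enough visitors within a couple of days, but not yet a representative mix of days.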

When you have low traffic you’ll have to run the experiment longer to ensure you have valid results. Here are some good tips on running experiments with low traffic.

Reporting

Reporting serves one and only one purpose: to help you determine the next course of action, whether that is more experiments or changes in product/development prioritisation. If no action results then the report and the experiment have been wasted. The reports only need to be as good as needed to draw a conclusion and take action.

The reporting stage is where you get to ask, “why?” Why did I get x instead of y? These questions will lead to new experiments (they start with a question, remember) that serve to continue the cycle, the process of experimentation. It shouldn’t stop with one experiment or set of experiments. This is also the point at which to examine anomalous results. An anomalous result is one which neither proves nor disproves the hypothesis but is perpendicular to what was being tested, one so far outside expectations that you should focus on answering why.

An example of this is a test we did at PeerIndex segmenting the landing page by country. The hypothesis was that there would be a difference between the different locations, and there was. The anomalous result was that one country had a result 50% greater than the rest. There is no obvious reason for such a difference between that country and the others. In fact it isn’t even a target market.

The importance of a negative result

The key outcome of testing is to learn. Whether the result is positive or negative is immaterial; what is material is that you learnt something from the test. A negative result can be, and often is, more important than a positive result. The negative result is telling you that your fundamental understanding of the user is wrong. You can then go back to the drawing board and use testing to discover what the user wants.

Closing the loop

You’ve designed the experiment, implemented it, run it and now have the results in a report. The next step is to ask yourself two questions:

- What do these results mean for development prioritisation? and

- Why did I get these results?

The first question allows you to review the development backlog and adjust the prioritisation based on the validated results you got from the experiment. This way, improvements in key metrics found in the experiments can be made permanent and deployed as quickly as possible. For example, if your experiment produces a 100% improvement in conversion rate then you want to get that out as fast as possible.

By asking the question “Why did I get these results?” (or conversely “Why didn’t I get the results I expected?”) you come up with hypotheses that could answer it, which you then design experiments to test. For example, say you had done an experiment that showed that visitors from different countries converted at different rates, with your target market countries converting lower. The question is then “Why did my target market convert lower?”, to which you come up with hypotheses to test.

The actions that are taken based on the results (product prioritisation changes, new experiments) should be recorded in your experimental log. This provides a way of keeping track of the experiments and the eventual outcome. It also provides a handy trail to keep track of how you arrived at any particular experiment.

It is unlikely you’ll answer any question with one experiment. Instead you are more likely to iterate towards the answer by doing repeated experiments. This ongoing experimentation cycle is how you can more rapidly evolve the product to meet KPIs and objectives.

Summary

Ultimately, the goal of experimentation is to achieve a business or product objective. Keep that in mind and you'll do well with experimentation. Lose sight of it, however, and you run the very real risk of short-term optimisation that fails to build a strong product or business. It is not about running tests for tests' sake or to tick a box in a framework checklist. All experimentation has to be grounded in achieving a stated objective.

You need to be able to ask yourself “How is this experiment aligned with the objective we are trying to achieve?” and there should be a clear answer, e.g. “Our objective was to increase revenue. This requires more users paying for the product. We wondered whether the CTA on the home page could be more effective at getting users to sign up. One of the things to test is the emphasis on the CTA button. This is one of those tests, where we evaluated different colours of button.”

Experimentation brings the scientific process to product evolution with the goal of achieving an objective faster. Even if you start out experimenting in only one area (say landing page conversion), over time you will be running experiments on many different parts of your product at once. Remember the process and tie each experiment to an objective, and it will be much easier to keep track of what is going on and ensure your experiments are evolving your product towards the objectives.

You can find other interesting details and tips on experimentation in this Smashing Magazine article.