Launching new products can be one of the most exciting parts of product management. It involves almost everything in a product life cycle, from market research to acquiring your first set of users. I have been part of many product launches throughout my career at Carwow, Uber and Dunzo, and I believe that product teams that follow a data-driven approach are more successful than those that rely mainly on domain knowledge and instinct.

Does data make a difference?

Product management, especially for consumer-facing teams, boils down to a couple of fundamental decisions and constraints:

1. How do I grow revenue within an acceptable budget (financial constraints) and service levels (quality constraints)?

2. How do I prioritise what my product team works on to succeed at #1 (time constraints)?

While #1 is about product focus and can be partly driven by overall company strategy, which sits outside a product manager's sphere of influence, #2 is about prioritisation, which should be fully within the product manager's control. This is where a data-driven approach can make a difference during a product launch.

If you can effectively track and monitor data for your newly launched product, you can make informed decisions about what to focus on now versus later, what to tell key stakeholders about the success or failure of the launch, and what to iterate on and improve as the launch progresses.

How do you stay data-driven during a launch?

You should not wait until launch to collect data or establish a baseline. Market research and external data sources are an excellent way to anticipate outcomes and define goals for your product launch, and even using a competitor's product can yield valuable insights. For example, at Dunzo, a delivery app, we evaluated bike taxis as a new category. We spoke with at least 30 drivers working for competitors to understand their utilisation (time on a trip / total time spent online), average trip distance, average trip duration and earnings per trip. Combined with some freely available market research, this helped us set a revenue target for the first eight weeks after launch.
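
The utilisation figure above is a simple ratio, and interview answers like these can be rolled up into a rough revenue target. The sketch below is purely illustrative: the `DriverResponse` fields, the take rate, the number of active drivers, the days worked per week and every number in it are hypothetical assumptions, not the figures or the model Dunzo actually used.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DriverResponse:
    """One interview answer from a competitor's driver (hypothetical schema)."""
    hours_on_trip: float   # hours per day spent carrying a passenger
    hours_online: float    # hours per day logged in to the app
    trips_per_day: float
    fare_per_trip: float   # reported earnings per trip, treated here as the gross fare


def utilisation(r: DriverResponse) -> float:
    """Utilisation = time on a trip / total time spent online."""
    if r.hours_online <= 0:
        raise ValueError("hours_online must be positive")
    return r.hours_on_trip / r.hours_online


def revenue_target(responses: list[DriverResponse], active_drivers: int,
                   take_rate: float, weeks: int = 8, days_per_week: int = 7) -> float:
    """Rough platform revenue over `weeks`, assuming launch drivers behave like the
    interviewed sample and the platform keeps `take_rate` of each fare."""
    avg_trips = mean(r.trips_per_day for r in responses)
    avg_fare = mean(r.fare_per_trip for r in responses)
    return active_drivers * avg_trips * avg_fare * take_rate * days_per_week * weeks


if __name__ == "__main__":
    sample = [
        DriverResponse(hours_on_trip=6, hours_online=10, trips_per_day=12, fare_per_trip=50),
        DriverResponse(hours_on_trip=4, hours_online=8, trips_per_day=9, fare_per_trip=40),
    ]
    print(f"Average utilisation: {mean(utilisation(r) for r in sample):.0%}")
    target = revenue_target(sample, active_drivers=100, take_rate=0.2)
    print(f"8-week revenue target: {target:,.0f}")
```

A small interview sample only supports a ballpark figure, so a target built this way works best as a benchmark to revisit once real launch data arrives.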

Broadly, the process of tracking and monitoring data can be split into phases:

- Pre-launch: This phase runs from the point the decision to launch is finalised up to the launch or go-live date. The same applies if you plan a beta launch or testing with a limited user group. During this phase, you will typically have started building out the user journey or flow, and it's important to do a few things:

  - Market research – external, through a competitive benchmarking exercise, and internal, through surveys and user feedback. This highlights the high-level metrics to watch during the launch.

  - Map out where tracking is needed in the product, including setting up the data pipelines. For example, when building a signup flow, you need a data event for every step of the signup journey to understand where the biggest drop-off in the funnel occurs.

  - Define benchmarks or goals for the launch that can be tracked against actual data. For example, acquiring x paid users within four weeks of launch with less than y% returns or cancellations could be a goal for an e-commerce product launch.

  - Set up dashboards and live monitoring for use during the launch phase. This gives visibility and lets you detect bugs or outages immediately.

- Launch: This phase starts when the product goes live for the first set of users and lasts until it is deemed fit for business-as-usual (BAU) or maintenance mode; the business or financial goals determine how long that takes. With dashboards set up in the pre-launch phase to track how you are trending against your goals, you can also gauge initial feedback through app store ratings or surveys. At this stage, it's important to classify metrics clearly into two broad categories:

  - Leading metrics – metrics that can be tracked without delay. For example, where users drop off or abandon a signup flow is a leading indicator and can be acted on immediately.

  - Lagging metrics – metrics that take time to measure. For example, profitability or cost metrics take longer to calculate because the reconciliation cycle needed to capture all costs is longer. These metrics answer higher-level questions about the overall impact of the new product, such as what would happen if you stopped the launch tomorrow, or how the new product performs relative to other new products.
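
The signup-funnel tracking described in the pre-launch phase is also the source of a typical leading metric: once events are flowing, counting how many users reach each step shows where the biggest drop-off occurs. A minimal sketch, assuming each logged event is a `(user_id, step)` pair; the step names in `SIGNUP_STEPS` are hypothetical placeholders for your own event names.

```python
from collections import defaultdict

# Hypothetical event names for each step of a signup flow, in funnel order.
SIGNUP_STEPS = ["open_signup", "enter_phone", "verify_otp", "create_profile", "signup_complete"]


def funnel_counts(events, steps=SIGNUP_STEPS):
    """Count distinct users reaching each step; `events` is an iterable of (user_id, step)."""
    users_by_step = defaultdict(set)
    for user_id, step in events:
        users_by_step[step].add(user_id)
    return [(step, len(users_by_step[step])) for step in steps]


def biggest_drop_off(counts):
    """Return (from_step, to_step, drop_rate) for the worst step-to-step transition."""
    worst = None
    for (prev_step, prev_n), (step, n) in zip(counts, counts[1:]):
        if prev_n == 0:
            continue  # nobody reached the previous step, so the rate is undefined
        drop_rate = 1 - n / prev_n
        if worst is None or drop_rate > worst[2]:
            worst = (prev_step, step, drop_rate)
    return worst


if __name__ == "__main__":
    events = [(1, "open_signup"), (2, "open_signup"), (3, "open_signup"),
              (1, "enter_phone"), (2, "enter_phone"), (1, "verify_otp")]
    counts = funnel_counts(events)
    print(counts)
    print(biggest_drop_off(counts))
```

Counting distinct users per step, rather than raw events, stops users who retry a step from inflating the numbers.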

It's also essential to decide how you will capture data for your leading and lagging metrics. In some cases you will want direct feedback from users via surveys; in others, you will need data logging set up at the right points in the user flow. During the launch phase, capture and review data consistently, on a daily or weekly cadence.
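
A goal like the e-commerce example from the pre-launch phase (x paid users within four weeks, with less than y% returns or cancellations) can be checked against each week's captured data. This sketch assumes a hypothetical `WeeklySnapshot` record of cumulative figures and, as a simplifying assumption, treats the user target as a straight-line pace up to the deadline; `target_paid_users` and `max_return_rate` stand in for x and y.

```python
from dataclasses import dataclass


@dataclass
class WeeklySnapshot:
    """Cumulative launch figures at the end of a week (hypothetical schema)."""
    week: int                   # weeks since launch, starting at 1
    paid_users: int             # cumulative paid users
    orders: int                 # cumulative orders
    returned_or_cancelled: int  # cumulative returned or cancelled orders


def goal_status(snapshot: WeeklySnapshot, target_paid_users: int,
                max_return_rate: float, deadline_weeks: int = 4) -> dict:
    """Compare one weekly snapshot with the launch goal."""
    # Assumed straight-line pace: by week w we expect w / deadline_weeks of the target.
    expected = target_paid_users * min(snapshot.week / deadline_weeks, 1.0)
    return_rate = (snapshot.returned_or_cancelled / snapshot.orders
                   if snapshot.orders else 0.0)
    return {
        "week": snapshot.week,
        "expected_paid_users": expected,
        "paid_users_on_track": snapshot.paid_users >= expected,
        "return_rate": return_rate,
        "return_rate_ok": return_rate < max_return_rate,
    }


if __name__ == "__main__":
    history = [
        WeeklySnapshot(week=1, paid_users=180, orders=240, returned_or_cancelled=10),
        WeeklySnapshot(week=2, paid_users=600, orders=800, returned_or_cancelled=40),
    ]
    for snap in history:
        print(goal_status(snap, target_paid_users=1000, max_return_rate=0.10))
```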

Beware of the vanity metric!

It is easy to get caught up in tracking metrics that make no difference to the business or the user experience. These are vanity metrics: they feel good to look at but provide no real value. It's important to assess how each metric you track influences decision-making and to identify vanity metrics early in the product launch process. Examples include:

Example 1

A ridesharing company launches a food delivery service and tracks 'Total Monthly Users'. While this metric is nice to look at, it says nothing about user engagement or retention. Better indicators would be growth in orders per monthly user or in average revenue per user (ARPU).
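
Moving from the vanity metric to the better indicators is mostly a change of denominator. A minimal sketch with a hypothetical `MonthlyTotals` record and made-up numbers; how 'monthly users' is defined (opened the app, placed an order, and so on) is an assumption you would need to pin down for your own product.

```python
from dataclasses import dataclass


@dataclass
class MonthlyTotals:
    monthly_users: int   # 'Total Monthly Users': the vanity metric on its own
    orders: int
    revenue: float


def orders_per_user(m: MonthlyTotals) -> float:
    return m.orders / m.monthly_users


def arpu(m: MonthlyTotals) -> float:
    """Average revenue per user (ARPU) for the month."""
    return m.revenue / m.monthly_users


def month_on_month(prev: MonthlyTotals, curr: MonthlyTotals) -> dict:
    """Relative change in each metric between two consecutive months."""
    def change(before: float, after: float) -> float:
        return (after - before) / before
    return {
        "monthly_users": change(prev.monthly_users, curr.monthly_users),
        "orders_per_user": change(orders_per_user(prev), orders_per_user(curr)),
        "arpu": change(arpu(prev), arpu(curr)),
    }


if __name__ == "__main__":
    last_month = MonthlyTotals(monthly_users=10_000, orders=30_000, revenue=300_000.0)
    this_month = MonthlyTotals(monthly_users=15_000, orders=33_000, revenue=330_000.0)
    for metric, delta in month_on_month(last_month, this_month).items():
        print(f"{metric}: {delta:+.1%}")
```

In this made-up example, monthly users grow by 50% while orders per user and ARPU both fall, which the headline number alone would hide.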

Example 2

A three-sided marketplace starts tracking total transaction volume after launching a new category. This metric is not actionable and does not show how the product can be improved. Health metrics such as completion or fulfilment rates would give a better picture of the marketplace's health.
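
Completion and fulfilment rates can be computed from the same order records that produce the raw volume figure. The status names below and the exact definitions (completion = completed orders / all orders; fulfilment = orders matched with supply / all orders) are assumptions for illustration; a real marketplace would use its own order states.

```python
from collections import Counter

# Hypothetical order statuses. Orders that never found supply count as unfulfilled;
# any other non-completed status (e.g. a cancellation after matching) counts as
# fulfilled but not completed.
COMPLETED = "completed"
UNFULFILLED = {"no_partner_found", "merchant_rejected"}


def health_metrics(order_statuses):
    """Completion and fulfilment rates for an iterable of final order statuses."""
    counts = Counter(order_statuses)
    total = sum(counts.values())
    if total == 0:
        return {"orders": 0, "completion_rate": None, "fulfilment_rate": None}
    unfulfilled = sum(counts[s] for s in UNFULFILLED)
    return {
        "orders": total,  # raw volume, kept only for context
        "completion_rate": counts[COMPLETED] / total,
        "fulfilment_rate": (total - unfulfilled) / total,
    }


if __name__ == "__main__":
    week_a = ["completed"] * 90 + ["cancelled"] * 5 + ["no_partner_found"] * 5
    week_b = ["completed"] * 70 + ["cancelled"] * 5 + ["no_partner_found"] * 25
    print(health_metrics(week_a))
    print(health_metrics(week_b))
```

Both example weeks have the same volume of 100 orders, yet their rates differ sharply, which is exactly the signal the volume figure hides.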

However, what counts as a vanity metric depends on the type of product. For example, Monthly Active Users is a relevant metric for a social media platform but can be a vanity metric for a marketplace.

A rule of thumb for identifying vanity metrics is to return to focus and prioritisation: if a metric you track does not drive any decision that influences focus or priorities, it is probably a vanity metric.

How do you keep your finger on the pulse after the launch?

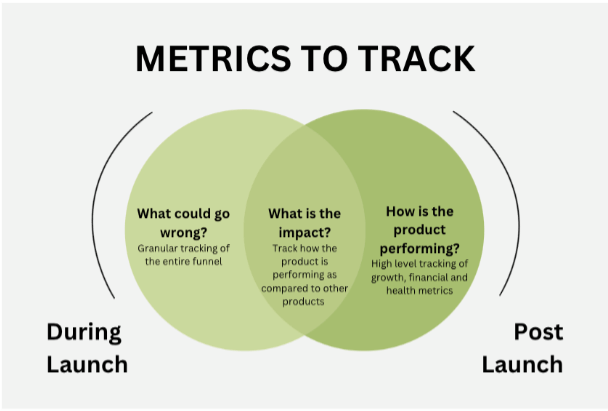

Once the product is stable enough and deemed a success – when there is a significant user base or significant usage (based on the goals defined in the pre-launch phase) within acceptable guardrails – the product typically goes into BAU or maintenance mode. However, it's important to keep tracking metrics at a higher level after the launch phase. A framework for deciding which metrics to track might be as follows:

Some more recommendations

- Leverage external data sources or openly available information – these are good ways to see how the product is trending against industry standards, for example on email open rates or click-through rates of signup CTAs, and to gauge its impact on market share. Market research during the pre-launch phase should cover this too.

- Loop in the data team to set up tracking and data pipelines before launch. In many cases, getting the data team involved during the pre-launch stage will help spot gaps in your plan and ensure everyone is on the same page regarding data.

- Don't forget to measure the lagging indicators – once the buzz and excitement of the launch die down, it can slip your mind to check indicators such as the cost of user acquisition. Be proactive in measuring them as soon as the data is available, so you can make informed decisions about what to do with the product and stay ahead of the curve.
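
Cost of user acquisition (CAC) is a typical lagging indicator: it can only be computed reliably once the costs for a period are reconciled. A minimal sketch, assuming a hypothetical `WeeklyAcquisition` record with a `reconciled` flag; it uses the common definition of acquisition spend divided by new users, though what counts as acquisition spend varies between teams.

```python
from dataclasses import dataclass


@dataclass
class WeeklyAcquisition:
    week: int
    acquisition_spend: float  # e.g. marketing, referral bonuses, discounts (assumed scope)
    new_users: int
    reconciled: bool          # True once all costs for the week are final


def cac_by_week(weeks: list[WeeklyAcquisition]) -> dict:
    """CAC per week, skipping weeks whose costs are not yet reconciled."""
    return {
        w.week: w.acquisition_spend / w.new_users
        for w in weeks
        if w.reconciled and w.new_users > 0
    }


def blended_cac(weeks: list[WeeklyAcquisition]):
    """CAC across all reconciled weeks: total spend / total new users."""
    done = [w for w in weeks if w.reconciled]
    users = sum(w.new_users for w in done)
    return sum(w.acquisition_spend for w in done) / users if users else None


if __name__ == "__main__":
    weeks = [
        WeeklyAcquisition(week=1, acquisition_spend=5000.0, new_users=250, reconciled=True),
        WeeklyAcquisition(week=2, acquisition_spend=8000.0, new_users=320, reconciled=True),
        WeeklyAcquisition(week=3, acquisition_spend=6000.0, new_users=300, reconciled=False),
    ]
    print(cac_by_week(weeks))
    print(blended_cac(weeks))
```

Skipping unreconciled weeks means the figure arrives later, but it avoids reporting a CAC that looks artificially low because some costs have not landed yet.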

Conclusion

All else being equal, a data-driven approach can be the difference between success and failure for a product launch. It helps the product team make smart decisions and focus on the right problems to solve, and it keeps stakeholders informed and excited about the new product while avoiding distractions such as vanity metrics.