Measuring the actions real people take on your digital product should be at the heart of your product development efforts. But are you investing time in the measurement process itself?

Most of us product managers work on services that are already live, so measuring what has already happened is critical to deciding what to improve next. You need reliable 'eyes' on your customers' behaviour, and you need to spot quickly when a new product deployment or marketing channel has broken part of your conversion path.

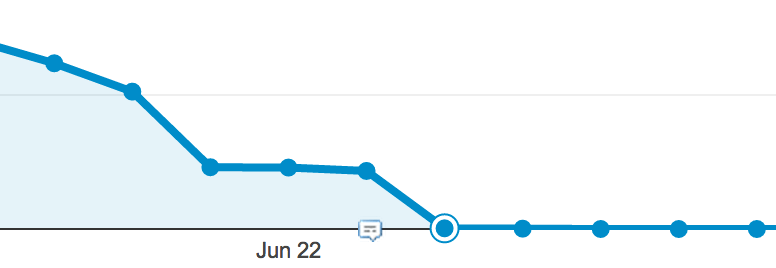

Unfortunately, data collection and reporting do go wrong, and many products I've worked with have been 'blinded' by interruptions in their analytics. It's pretty embarrassing when your boss asks how you're progressing on a key goal and your answer is 'I don't know'.

Here are some tips to make your analytics setup more resilient, and avoid those holes in your data.

1. Pick fewer than 6 key metrics

Honestly, it's hard enough to gather good data consistently for one metric. Don't complicate this tenfold by reporting on stuff that no one cares about.

Sit down with your team and agree which metrics you really rely on to do your jobs. When collection costs nothing, it's tempting to track everything and work out what to pay attention to later. But if your product has been live for more than 6 months, you should be able to pick a small set of useful metrics and ignore the rest.

Have a look at the approach in Lean Analytics (Alistair Croll and Benjamin Yoskovitz) for picking useful, non-vanity metrics.

2. Consider what questions you'd like to ask of your product

Think about how you are going to use the analytics and what questions you need answered. Write down your top 10 questions, and make sure you are capturing the right data in the right format to answer each one.

For example, if you want to see whether subscribers under the age of 20 are more active than those over 20, you need to send the age of every registered user to your analytics (this is possible using custom dimensions in Google Analytics), and make sure it is available as a dimension in your pageview or session reports.
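
To make that concrete, here is a minimal sketch of what the app might send, assuming a Google Tag Manager setup where a GTM variable reads a data layer key and maps it onto a Google Analytics custom dimension. The event name `user_signed_in`, the key `age_group` and the helper functions are hypothetical, and a local array stands in for the browser's `window.dataLayer` so the sketch runs anywhere. It sends a bucketed age group rather than the raw age, which keeps the dimension small and matches the question being asked.

```typescript
// Local stand-in for window.dataLayer, the array Google Tag Manager reads in the browser.
// Using a plain array here is an assumption so the sketch runs outside a browser.
type AnalyticsEvent = Record<string, string>;
const dataLayer: AnalyticsEvent[] = [];

// Hypothetical helper: bucket the age into the two groups the question compares.
// Exactly 20 is counted in the older group; adjust to your own definition.
function ageGroup(age: number): string {
  return age < 20 ? "under_20" : "20_plus";
}

// Hypothetical event pushed whenever a registered user signs in, so every session
// carries the age group. In GTM, a data layer variable would read `age_group`
// and the GA tag would send it as a custom dimension.
function trackSignedInUser(age: number): void {
  dataLayer.push({ event: "user_signed_in", age_group: ageGroup(age) });
}

trackSignedInUser(17);
trackSignedInUser(34);
console.log(dataLayer);
```

Writing the question down first ("under 20 vs over 20") is what tells you to capture a group here, rather than discovering months later that the field was never sent.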

Thinking about the end reports can also guide your choice of collection and reporting platform. Google Analytics may be perfect for graphing sessions by age group, as in the example above, but if you want to look at individual customers you may need KISSMetrics or a custom GA setup.

3. Ask 'how will we track this' for every new feature

Analytics goes out of date every time the product changes, so be proactive and consider how a feature will affect your metrics before you release it. In truly data-driven companies, you wouldn't be allowed to release a feature until you could measure its impact.

You could adapt your Agile process so that features are not signed off as complete until a couple of weeks after release, once their impact on your analytics has been checked. Or simply make your developers responsible for end-to-end feature testing, including the tracking.
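
One lightweight way to enforce this is a tracking plan that every feature must appear in before sign-off. The sketch below assumes such a plan is kept as data alongside the code; the interface, the feature and event names, and the `featuresNotReadyToShip` check are all hypothetical illustrations, not a standard format. A release checklist or CI step could run the check and refuse to sign off a feature that has no events or no key metric attached.

```typescript
// Hypothetical tracking plan: each feature lists the analytics events that measure it
// and the key metric those events should move. All names here are illustrative.
interface FeatureTracking {
  feature: string;
  events: string[]; // events the feature must send once released
  keyMetric?: string; // which of the team's key metrics it is meant to move
}

const trackingPlan: FeatureTracking[] = [
  { feature: "one-click checkout", events: ["checkout_started", "purchase_completed"], keyMetric: "conversion rate" },
  { feature: "saved searches", events: [], keyMetric: "weekly active users" },
  { feature: "dark mode", events: ["theme_changed"] },
];

// Returns the features that cannot yet be measured: no events defined,
// or no key metric named to judge them against.
function featuresNotReadyToShip(plan: FeatureTracking[]): string[] {
  return plan
    .filter((f) => f.events.length === 0 || f.keyMetric === undefined)
    .map((f) => f.feature);
}

const blocked = featuresNotReadyToShip(trackingPlan);
if (blocked.length > 0) {
  console.log(`Not ready to sign off: ${blocked.join(", ")}`);
}
```

Here "saved searches" is flagged because nothing measures it, and "dark mode" because nobody has said which metric it should move.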

4. Prioritise speed of recovery over speed of setup

Yes, analytics is boring, and it's tempting to get a junior developer or marketing agency to set up your tracking. But when it goes wrong, will you be able to fix it quickly?

Having someone in-house who deeply understands how your data collection and reporting work, and ideally can make updates themselves rather than adding them to the development backlog, is a big bonus in a crisis. And that's coming from someone who runs an analytics consultancy helping clients outsource this setup!

I used to be sceptical about the value of tag management software for smaller companies, but I now recommend it as standard, even though the setup takes slightly longer. Setting the rules for sending data from your website in a tool like Google Tag Manager means you can change the data captured instantly, without risking disruption to the app itself.
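
The reason this works is that the app code only describes what happened, while the rules and tags configured in Google Tag Manager decide what is sent to which tool. A minimal sketch of the app side, assuming GTM's standard `dataLayer` array and the convention that each entry carries an `event` key for triggers to match on; the `pushEvent` helper and the event names are hypothetical. Outside a browser the sketch falls back to a fresh array on `globalThis`, which is an assumption made only so it runs.

```typescript
// Each entry describes one thing that happened; GTM triggers match on `event`.
type DataLayerEntry = { event: string; [key: string]: unknown };

// In the browser this is window.dataLayer, read by the GTM container snippet.
// Elsewhere we create an empty array (an assumption, so the sketch runs in Node).
const g = globalThis as unknown as { dataLayer?: DataLayerEntry[] };
const dataLayer: DataLayerEntry[] = (g.dataLayer = g.dataLayer ?? []);

// Hypothetical helper: the only tracking call the app makes. Which tags fire
// (a GA event, an ad conversion, nothing at all) is decided by rules inside GTM,
// so changing what gets captured is a GTM publish rather than an app release.
function pushEvent(event: string, data: Record<string, unknown> = {}): void {
  // `event` is spread last so a stray `event` key in `data` cannot overwrite it.
  dataLayer.push({ ...data, event });
}

pushEvent("signup_completed", { plan: "free" });
pushEvent("trial_started");
console.log(dataLayer);
```

Keeping the app's side this thin is also what makes recovery fast: when a report breaks, the person who owns the GTM container can usually fix the rule without waiting for a deployment.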

5. Test, test, test

The big danger with tracking events in your app is that it all happens in the background and fails silently, so you won't find out until you go to pull the report.

Have you stepped through each conversion step and checked that the right data is being captured?
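
Stepping through the funnel is easier to repeat if you compare what was captured against the steps you expect. A minimal sketch, assuming you can get a list of event names from a test run (copied from the data layer, a real-time report, or an automated browser test); the step names, the captured list and the `missingSteps` helper are hypothetical examples.

```typescript
// Hypothetical conversion path, in funnel order; replace with your own event names.
const conversionSteps = ["view_product", "add_to_cart", "begin_checkout", "purchase"];

// Event names recorded while walking through the path once. Illustrative data only.
const capturedEvents = ["view_product", "add_to_cart", "purchase"];

// Returns the expected steps that never appeared, in funnel order, so a silent
// tracking failure shows up as a named gap instead of a mysterious dip in the report.
function missingSteps(expected: string[], captured: string[]): string[] {
  const seen = new Set(captured);
  return expected.filter((step) => !seen.has(step));
}

const gaps = missingSteps(conversionSteps, capturedEvents);
console.log(gaps.length === 0 ? "All steps tracked" : `Missing: ${gaps.join(", ")}`);
```

With the sample data this reports `begin_checkout` as missing, exactly the kind of hole that would otherwise only surface when someone asks why checkout conversion fell to zero.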

Real-time reports are essential for testing, but you may need to set up a separate test view or tracker for your test environment if you want to avoid polluting your real customer data.
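
A common way to keep test traffic out of your real data is to choose the tracker at runtime from the hostname. The sketch below assumes one production and one test property; the ID strings are placeholders, and `trackerIdFor`, `PRODUCTION_HOSTS` and the example.com hostnames are hypothetical. In the browser you would pass `window.location.hostname`.

```typescript
// Placeholder IDs: substitute the IDs of your own production and test properties.
const TRACKER_IDS = {
  production: "PROD-TRACKER-ID",
  test: "TEST-TRACKER-ID",
} as const;

// Hostnames treated as production. Everything else (localhost, staging,
// preview builds) reports to the test tracker so it never pollutes customer data.
const PRODUCTION_HOSTS = new Set(["www.example.com", "example.com"]);

function trackerIdFor(hostname: string): string {
  return PRODUCTION_HOSTS.has(hostname) ? TRACKER_IDS.production : TRACKER_IDS.test;
}

console.log(trackerIdFor("www.example.com"));
console.log(trackerIdFor("staging.example.com"));
```

Allow-listing production hosts means a new staging server can never leak into real reports; the trade-off is that a new production domain must be added to the list, or its traffic will quietly land in the test view.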