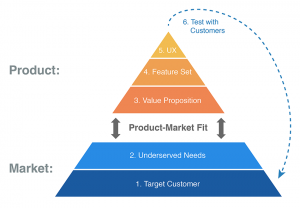

“Product/Market Fit” is a concept that every start-up founder knows is important, but many have trouble achieving or even defining it. In The Lean Product Playbook, author Dan Olsen details a six-step process for achieving product/market fit:

- Determine your target customer

- Identify underserved customer needs

- Define your value proposition

- Specify your MVP feature set

- Create your MVP prototype

- Test your MVP with customers

Dan helps CEOs and product leaders build better products and stronger product teams as a hands-on consultant, trainer, and coach. At the most recent Mind The Product conference in London, Dan gave a popular workshop called “How to Achieve Product/Market Fit.” I spoke to Dan recently in advance of Lean Startup Week in San Francisco, where Dan taught a masterclass on product/market fit.

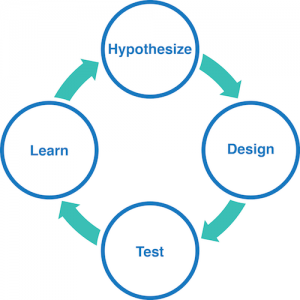

In his book, Dan includes a great image of a “Hypothesize-Design-Test-Learn” cycle. I asked him about some of the challenges in going from “Test” to “Learn”.

"Going from “Test” to “Learn” requires that you conduct your user tests in a way that generates actionable learning. A big challenge that product teams face is the quality of their user tests. One of the biggest drivers of the quality of a user test is how the moderator asks questions.

For example, let’s suppose that after a customer tries to use a feature in a user test, I ask her, 'That was easy, wasn’t it?' I’ve pretty much set her up to say yes. That is an example of a leading question. Good user testing moderators avoid leading questions like that. A better question in that situation would be, 'Could you please tell me how you felt when you were trying to use that feature?'

Let’s suppose that after she uses another feature in the user test I ask her, 'Did you like that feature?' Now, I’m not leading her to say yes (necessarily), but I am pretty much limiting her choices of responses to yes or no. That is a closed question. It is much better to avoid closed questions and ask open-ended questions instead. A better question in that situation would be, 'Could you please tell me what you think about that feature?' Use open-ended, non-leading questions for better learning from user tests."

Dan’s work focuses heavily on the idea of product/market fit, which can be a huge challenge for start-ups that have little customer signal to tell them whether their ideas “fit” or whether they should refocus. What is Dan’s advice for companies struggling to understand whether their product has achieved “fit”?

"The market consists of your target customer and their underserved needs. Your product consists of your value proposition, your feature set, and your user experience design. How well your product assumptions, decisions, and execution resonate with the market determines your level of product/market fit.

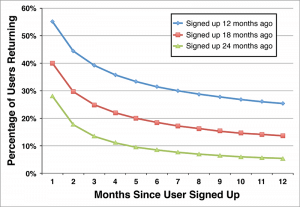

The ultimate measure of product/market fit is retention rate. Retention rate tracks the percentage of users who return to use your product over time. You can get people to sign up for your product with a slick landing page, but if they come back again and again then you can be confident that you are creating value for customers by meeting their needs in a way that is better than other alternatives.

Some retention curves drop to zero, which means you lose all of those users over time because your product isn’t sticky enough. Other retention curves flatten out to a certain value, say 5% of your initial users. That 'terminal value' of your retention curve is a direct measure of product/market fit. Over time, as you learn more about your market and improve your product, you want to see the retention curve for newer cohorts of users move up over time."
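To make the retention curve idea concrete, here is a minimal Python sketch of how per-cohort retention could be computed from raw usage records. It is an illustration, not a method from Dan's book: the function name `retention_curves`, the input shapes (`signups`, `activity`), and the sample data are all assumptions made for this example. It also treats the last observed point of each curve as a rough stand-in for the "terminal value", which only holds if the curve has actually flattened by then.

```python
from collections import defaultdict


def retention_curves(signups, activity, horizon):
    """Compute one retention curve per signup cohort.

    signups:  dict mapping user id -> period in which the user signed up
    activity: iterable of (user id, period) pairs, one per active period
    horizon:  number of periods after signup to track

    Returns {cohort: [fraction of the cohort active k periods after
    signup, for k = 0..horizon]}. All names here are hypothetical.
    """
    # Group users into cohorts by signup period.
    cohort_users = defaultdict(set)
    for user, period in signups.items():
        cohort_users[period].add(user)

    # active[cohort][k] holds the users seen k periods after signing up.
    active = defaultdict(lambda: defaultdict(set))
    for user, period in activity:
        if user not in signups:
            continue  # ignore activity from unknown users
        offset = period - signups[user]
        if 0 <= offset <= horizon:
            active[signups[user]][offset].add(user)

    return {
        cohort: [len(active[cohort][k]) / len(users) for k in range(horizon + 1)]
        for cohort, users in sorted(cohort_users.items())
    }


# Made-up sample data: four users sign up in period 0, two in period 1.
signups = {"a": 0, "b": 0, "c": 0, "d": 0, "e": 1, "f": 1}
activity = [
    ("a", 0), ("b", 0), ("c", 0), ("d", 0),
    ("a", 1), ("b", 1),
    ("a", 2),
    ("e", 1), ("f", 1),
    ("e", 2), ("f", 2),
    ("e", 3),
]

curves = retention_curves(signups, activity, horizon=2)

# Last observed point of each curve, used as a rough proxy for the
# terminal value (an assumption: valid only once the curve has flattened).
terminal = {cohort: curve[-1] for cohort, curve in curves.items()}

for cohort, curve in curves.items():
    print(f"cohort {cohort}: {curve} (last point: {terminal[cohort]:.0%})")
```

In this toy data the newer cohort retains 50% of its users two periods after signup versus 25% for the older one, which is the kind of upward movement between cohorts that Dan describes.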

The Minimum Viable Product (MVP) is one of the key learning methods described in the Lean Startup. The MVP has been adopted by many start-ups as a way to test business ideas, and has been translated to MVPs for new features and products for the enterprise as well. How can companies best use MVPs to learn what to build?

"I agree that companies should test their assumptions before building their product. In my book, I explain the important differences between qualitative and quantitative learning methods. To highlight the difference, I like to refer to qualitative methods as the 'Oprah' approach and quantitative methods as the 'Spock' approach.

Many people have a preference for Spock, and I understand the allure of trying to “prove” things with numbers. However, the reality is that qualitative methods are much more effective when you define a new product. You learn who your customers are, what their needs are, and how your product might be able to meet those needs better than the existing alternatives. You of course want to take an iterative approach, testing your assumptions with one wave of customers at a time. Interactive prototypes are a great tool for doing this.

As you iterate from wave to wave revising and improving your hypotheses, you want to see fewer negative comments and questions from users and more positive feedback from users. Ideally, to avoid people just being nice to you, you manage to get them to have some skin in the game. You can ask for time: are they willing to schedule a future meeting to give you feedback on your next revision? You can ask for money: are they willing to pay for early access to the product? Or the skin can be reputation: are they willing to refer you to two of their colleagues to solicit their feedback on the product?"

Qualitative data plays an important role in the Lean Product Playbook. How should companies use this data? Should this knowledge be shared among the company or team, or does Dan think it’s the PM’s job to filter it?

"The qualitative, aka ‘Oprah’, approach is very important when you’re building a new product or feature. In general, I believe that information, especially information from customer research, should be made accessible and shared broadly.

That being said, it is valuable for someone to synthesize the learning. If anyone wants to read the full notes or watch the videos from user tests, that’s great. But they shouldn’t have to do that to understand the important takeaways. In my mind, the PM isn’t filtering the raw data but rather trying to pattern match and synthesize across the data to extract knowledge from it."

Finally, does Dan recommend any specific tools for doing work around product/market fit and learning from MVPs?

"Of course, I recommend the frameworks in my book. I’m also a fan of tools that let you easily create and iterate clickable or tappable wireframes, such as Balsamiq. These tools help the team work through their UX design quickly. I’m also a fan of InVision, which lets you take your design mock-ups (from Sketch, Photoshop, or Illustrator) and create clickable or tappable prototypes that are great for user tests."
