This article is written for product managers, data scientists, and engineering teams working together on machine learning (ML)-based products. I will cover some growth areas and ideas on how to achieve more impact.

I base this article on my experience as a Lead Product Manager for ML products in an advertising platform and as a senior product manager responsible for Search and Recommendations in e-commerce. It collects the problems I met as a product manager and the solutions and conclusions I arrived at.

I will talk about how to describe a task so that the team better understands the product manager's ideas, and about core and related product metrics.

I will also pay attention to data sets, hypothesis testing, and the importance of stakeholder management.

Machine learning is behind many products such as e-commerce and marketplaces, advertising, and fintech. It can be used to solve:

- Ranking problems. It powers search in services such as e-commerce, email, etc.

- Classification problems. It helps to detect inappropriate content in advertising, communication channels, etc.

- Regression problems. It helps to predict, for example, how high demand will be in 15 minutes.

- Recommendation problems. It helps to create a Discovery section on e-commerce and entertainment services.

Here are some tips on how to build ML-based products within your organisation:

Write a story about how a product works

A product or feature can usually be described through the user's interaction with the interface. The product manager explains what the user can do in the interface and what happens when, for example, the user clicks a button. In most products, the interface helps product managers explain to engineers what is expected from the product, but it doesn't work that way in ML products.

In ML products, most of the logic is not visible in the interface, so product managers need to describe the feature differently. They need to write a story to close this gap.

The story should explain how users will interact with the product, what the users' expectations are, and what results they should get in different cases. The story aligns the teams' understanding of the product. It also helps the product manager to think the solution through.

Let's take product recommendations in e-commerce as an example. Recommendations usually have a simple interface, like a preview of products. It tells nothing about the algorithms behind the recommendations or what was considered to find similar items. So you need to describe specific use cases and answer specific questions:

- Should the recommended items have the same price, the same brand, or the same model?

- What is more critical: price or brand? What properties should be strictly the same, and what could be different? For example, for a washing machine, is it essential to consider whether it is built-in or not?
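
One way to make the answers to such questions concrete is to write them down as strict and flexible properties that can be checked against candidate recommendations. The sketch below is only an illustration: the `Product` fields, the choice of strict properties, and the 20% price tolerance are hypothetical answers for the washing machine case, not rules from a real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    category: str
    brand: str
    price: float
    built_in: bool


# Hypothetical answers from the story for washing machines:
# category and installation type must match, brand may differ,
# and price may differ by up to 20% (assumed tolerance).
STRICT_PROPERTIES = ("category", "built_in")
MAX_PRICE_DIFF = 0.20


def is_acceptable(viewed: Product, candidate: Product) -> bool:
    """Return True if the candidate satisfies the rules written in the story."""
    for prop in STRICT_PROPERTIES:
        if getattr(viewed, prop) != getattr(candidate, prop):
            return False
    price_diff = abs(candidate.price - viewed.price) / viewed.price
    return price_diff <= MAX_PRICE_DIFF
```

Written this way, each use case from the story can become a small check that the product manager and the engineers read the same way.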

Align on the product metrics

Define target metric

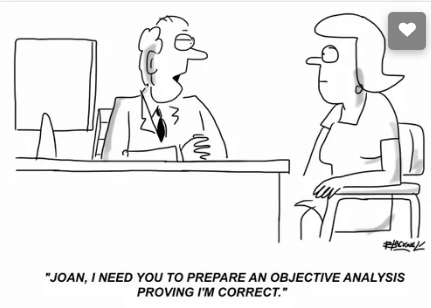

It is essential to align on the core metric: what the team aims to improve. This seems like an obvious task. Nonetheless, team members commonly think about product goals differently.

Sometimes a core metric is supported by a proxy metric. Instead of optimizing the core metric directly, the team works on improving the proxy metric and measures the impact of experiments on it.

A proxy metric helps when there is not enough data about the core metric to train the model or measure the impact of an experiment.

For example, an e-commerce team's goal could be to grow the number of orders. Nonetheless, if the number of orders is low (in particular categories or in general), something higher up the user funnel, such as product page views or add-to-cart actions, could replace the core metric.
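
As a rough illustration of this fallback, the sketch below picks the metric closest to the core one that still has enough data. The metric names, the funnel order, and the `MIN_EVENTS` threshold are assumptions made for the example; a real threshold would depend on the experiment design.

```python
from typing import Dict, Optional

# Core metric first, then proxies higher up the funnel (assumed names).
FUNNEL = ("orders", "add_to_cart", "product_page_views")

# Assumed minimum number of events needed to train or run an experiment.
MIN_EVENTS = 1000


def pick_metric(counts: Dict[str, int], min_events: int = MIN_EVENTS) -> Optional[str]:
    """Return the metric closest to the core metric that has enough data."""
    for metric in FUNNEL:
        if counts.get(metric, 0) >= min_events:
            return metric
    return None  # not enough data at any step of the funnel


# Hypothetical weekly counts for a small category.
small_category = {"orders": 120, "add_to_cart": 2500, "product_page_views": 40000}
print(pick_metric(small_category))
```

With these made-up counts the category does not have enough orders, so add-to-cart actions would serve as the proxy.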


Define related metrics

Once the core metric is clearly defined, it is essential to define how related metrics should behave. The most useful ones could be coverage, accuracy, diversity, and novelty.

The properties described below are essential for an experiment to succeed. These metrics can explain why an experiment doesn't produce a significant result.

For example, the coverage of recommendations may be too small, or all recommendations may be of one type.

Coverage and accuracy

Accuracy shows how correct predictions are, while coverage shows how many cases get any prediction at all.

Sometimes a not very accurate prediction is better than none. So you may want to increase coverage at the expense of accuracy, or vice versa.
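
A common way this trade-off shows up is a confidence threshold: predictions below the threshold are not shown. The sketch below assumes the model returns a confidence score for each prediction and that we know afterwards whether each prediction was correct. The data is invented for illustration, and accuracy is measured only on the predictions that are shown.

```python
def coverage_and_accuracy(predictions, threshold):
    """predictions: list of (confidence, is_correct) pairs.

    Only predictions with confidence >= threshold are shown.
    Returns (coverage, accuracy of the shown predictions).
    """
    shown = [correct for confidence, correct in predictions if confidence >= threshold]
    coverage = len(shown) / len(predictions) if predictions else 0.0
    accuracy = sum(shown) / len(shown) if shown else 0.0
    return coverage, accuracy


# Hypothetical labeled predictions: (model confidence, was the prediction correct).
predictions = [(0.95, True), (0.90, True), (0.80, True), (0.70, False),
               (0.60, True), (0.50, False), (0.40, False), (0.30, True)]

for threshold in (0.3, 0.6, 0.9):
    coverage, accuracy = coverage_and_accuracy(predictions, threshold)
    print(f"threshold={threshold} coverage={coverage:.0%} accuracy={accuracy:.1%}")
```

On this made-up data, raising the threshold from 0.3 to 0.9 lowers coverage from 100% to 25% while accuracy rises from 62.5% to 100%.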

Coverage can be measured in different ways, such as:

- How many customers get predictions

- How many items, for example ads or products, appear in predictions
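
Both kinds of coverage can be computed from the same recommendation output. The sketch below assumes recommendations are stored as a mapping from user ID to a list of item IDs; the function names and the data layout are hypothetical.

```python
def user_coverage(recommendations, all_users):
    """Share of users who received at least one recommendation."""
    users = set(all_users)
    if not users:
        return 0.0
    covered = {user for user, items in recommendations.items() if items}
    return len(covered & users) / len(users)


def catalog_coverage(recommendations, catalog):
    """Share of catalog items that appear in at least one recommendation list."""
    items_in_catalog = set(catalog)
    if not items_in_catalog:
        return 0.0
    recommended = {item for items in recommendations.values() for item in items}
    return len(recommended & items_in_catalog) / len(items_in_catalog)


# Hypothetical output: user "u2" got an empty list, user "u4" got nothing at all.
recs = {"u1": ["a", "b"], "u2": [], "u3": ["a"]}
print(user_coverage(recs, ["u1", "u2", "u3", "u4"]))
print(catalog_coverage(recs, ["a", "b", "c", "d"]))
```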

Diversity means the variety of predicted values: how much the predicted items differ. For example, how many of them belong to one type or category.

For example, let's assume that the most popular product subcategory is refrigerators. As a result, product recommendations for new users could consist only of refrigerators. However, nobody wants to see only refrigerators. Therefore, adding other product categories to the recommendations is more effective.

Novelty is about showing users items they haven't interacted with before (haven't viewed, bought, etc.). A team could consider adding new items to predictions to measure user feedback or help users escape from their bubbles.
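
Diversity can be tracked with very simple measures. The sketch below shows two possible ones, assuming each recommended item is represented by its category name. These are illustrative definitions, not a standard the team must follow.

```python
from collections import Counter


def category_diversity(recommended_categories):
    """Share of distinct categories in a recommendation list (1.0 = all different)."""
    if not recommended_categories:
        return 0.0
    return len(set(recommended_categories)) / len(recommended_categories)


def top_category_share(recommended_categories):
    """Share of the list taken by the single most frequent category."""
    if not recommended_categories:
        return 0.0
    _, count = Counter(recommended_categories).most_common(1)[0]
    return count / len(recommended_categories)


# Hypothetical list for a new user: only the most popular subcategory.
only_fridges = ["refrigerator"] * 4
print(category_diversity(only_fridges), top_category_share(only_fridges))
```

A list made up of a single category gets the lowest possible diversity for its length and a top category share of 1.0, which is the situation described in the refrigerator example.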
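
A minimal way to measure novelty, assuming we have the list of items each user has already interacted with, is the share of recommended items that are new to the user. The function name and data layout are hypothetical.

```python
def novelty(recommended_items, seen_items):
    """Share of recommended items the user has not interacted with before."""
    if not recommended_items:
        return 0.0
    seen = set(seen_items)
    unseen = [item for item in recommended_items if item not in seen]
    return len(unseen) / len(recommended_items)


# Hypothetical user who has already viewed item "a".
print(novelty(["a", "b", "c", "d"], seen_items=["a", "x"]))
```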

Use real data sets

It is essential to use real data sets instead of data sets downloaded from elsewhere. Real-world information is diverse and sometimes inaccurate and incomplete. Read Data-Driven Product Management by Matt LeMay.

Below are the main problems a team will face if it bases its understanding of the solution on something other than actual data:

Requirements will miss a lot of essential cases. For example, a face recognition team could forget about children and build a solution only for adults, or ignore the fact that people look different.

Also, real-world data can be much more complex. For example, compare the quality and complexity of photos uploaded to a classifieds site with those on a stock photo site. Recognising objects in the classifieds photos would be much harder.

Building a solution on actual data also helps to estimate the cost of the production solution. Compared to a test data set, real-world data can bring problems with access or processing:

- it could be hard to get access to the data

- or it could be hard to process the data fast enough or at an acceptable infrastructure cost

Define how to test an idea cheaper

It is expensive to build ML models and create an infrastructure to test the idea.

Teams try to test ideas through experiments that require a minimum amount of development. Besides the general idea that testing a hypothesis quickly is essential, building a minimum viable product (MVP) is better for two reasons:

1) Machine learning learns from data, so an MVP helps to understand what data the model needs to learn from and, if the task is first done by hand, frames what you need to achieve.

2) An MVP helps to test that the solution is technically possible. For example, that the backend service can support the solution at a reasonable cost and quality.

For example, the team's hypothesis is that cross-category recommendations in e-commerce will help users find interesting items, like offering an iPhone case to a customer looking for a new iPhone.

A team could go one of two ways. The first is to spend time creating infrastructure and algorithms that predict which categories are complementary. The second is to put together several pairs of product categories by hand, like phones and phone cases. The team could then test how customers interact with the two categories and gather data about impact, solution cost, and data availability.

The second way is much shorter and allows the team to evaluate the impact without spending a lot of time.
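
The hand-made version can be as small as a lookup table. The sketch below is one possible shape of such an MVP: the category names, the pairs themselves, and the `top_items_by_category` input are hypothetical, and no model is involved.

```python
# Hand-picked complementary category pairs (hypothetical names), no model required.
COMPLEMENTARY_CATEGORIES = {
    "phones": ["phone cases", "screen protectors"],
    "laptops": ["laptop bags"],
}


def cross_category_recommendations(viewed_category, top_items_by_category, limit=4):
    """Return popular items from the categories paired by hand with the viewed one."""
    result = []
    for category in COMPLEMENTARY_CATEGORIES.get(viewed_category, []):
        result.extend(top_items_by_category.get(category, []))
    return result[:limit]


# Hypothetical best sellers per category, e.g. taken from an existing report.
top_items = {"phone cases": ["case-1", "case-2"], "screen protectors": ["glass-1"]}
print(cross_category_recommendations("phones", top_items))
```

If customers interact with these hand-made pairs, the team has evidence that a model predicting complementary categories is worth building, along with first data on which pairs work.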

Do not spend time on technology without a product

Sometimes a team comes up with an idea to test some technology and see what is possible to do with it. This idea could come from product managers, sometimes from data scientists. Nonetheless, the result is the same: the time could have been spent more efficiently.

The reason is that applications of the same technology differ greatly depending on the problem.

For example, face recognition for document validation will be drastically different from face recognition for building user photo albums.

Manage stakeholders' expectations

There is hype around machine learning, and the expectations are high. Machine learning is not about making something out of nothing. That is why speaking about expectations is essential.

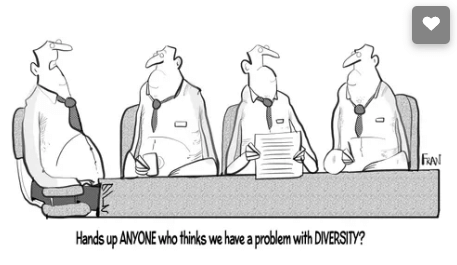

People outside of a team could perceive ML solutions as a black box. As a result, two problems could appear:

- people don't believe that it works and provides impact because they don't understand it.

- people expect magic. For example, a hundred percent accuracy and a hundred percent coverage simultaneously.

I suggest spending time on a high-level explanation of how the solution works: what kind of data it uses, the algorithms and the ideas behind them, and what correlations have been found.

That helps everybody understand possible ways to improve the solution, build new features, and write better bug reports.

I hope these tips will help you achieve results by approaching ML-based products differently from other products.