Most product teams review goals in hindsight to see whether they have succeeded. But what if you could not only assess what you’ve already done but also prioritize your actions along the way? To help teams do that with a strong focus on outcomes, Product Management coach and author Tim Herbig took ProductTank Amsterdam through a hands-on workshop. He provided context behind the idea of OKRs and surprised some attendees with new perspectives on the topic.

The session mixed theory with practical exercises, so the audience could try out the concepts directly and get feedback from Tim and the community. Tim closed the workshop by discussing questions from the audience. Below is a summary of the workshop’s takeaways:

- There are no right or wrong key results

- Adding the dimension of leading/lagging indicators

- Most common (underestimated) mistakes

- Using output-driven key results to establish a product discovery practice within the company

There are no right or wrong key results

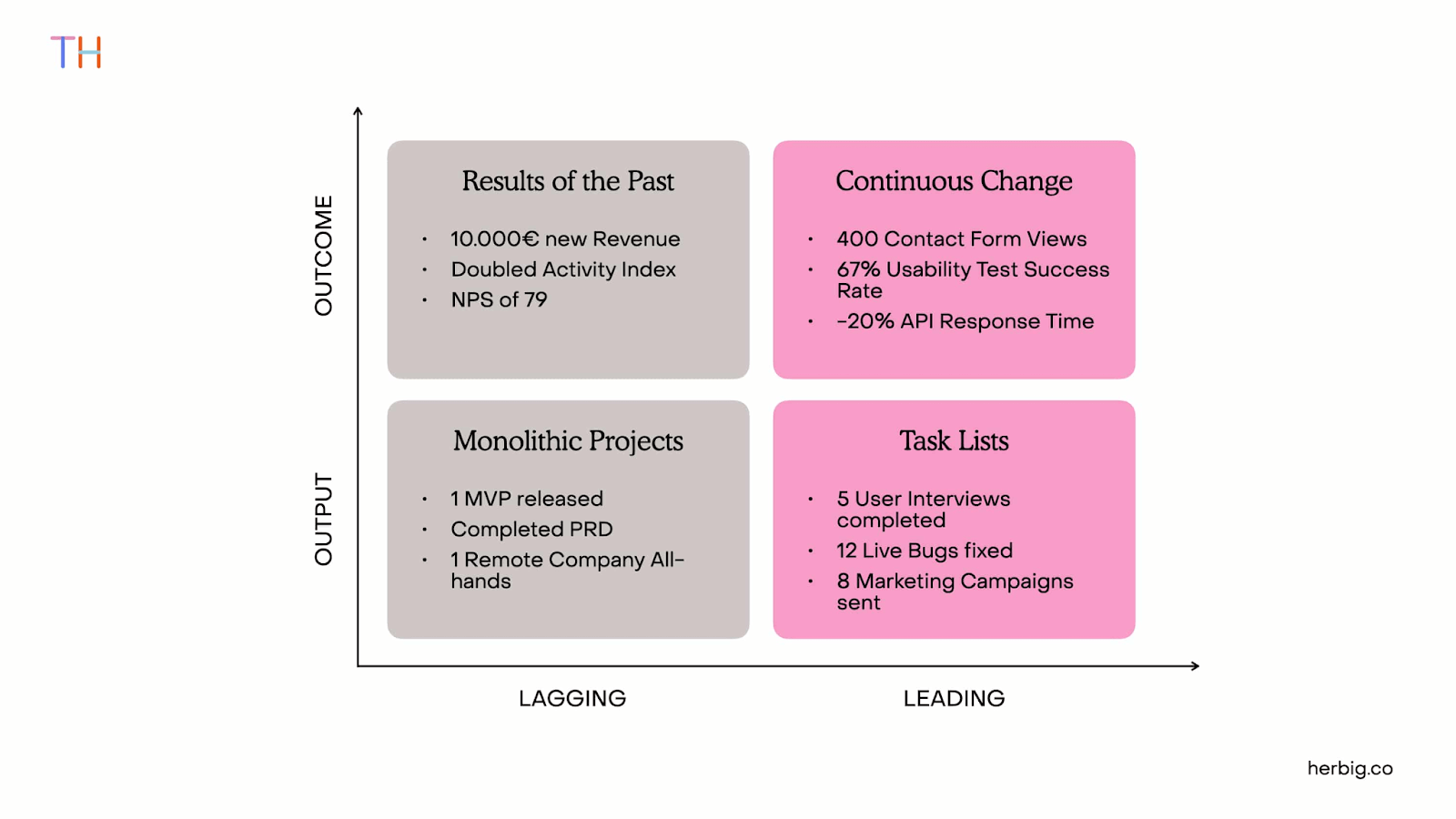

“Outcome over output” is a well-known claim in product management. Output OKRs (e.g. “feature x has been released”) are said to be rather shallow, uninspiring, and likely to block learning opportunities. However, there is nothing wrong with output OKRs per se for measuring success. The team should just be aware of the consequences when they settle on a specific type of key result. The key results should give a holistic picture of the objective, capturing it from as many perspectives and levels as possible. This gives the team more room to come up with the best solution(s), but it also requires more confidence and skill.

Based on the consequences of the key results, OKRs can be separated into three different types:

- Impact-ish OKRs: the company’s strategic priority at a high level, expressed through a result or metric.

- Outcome-ish OKRs: a change in human behaviour that creates an impact at department level. It can be validated through the “how might we” test.

- Output-ish OKRs: a completed activity that aims to create a change in behaviour and has an impact at team level.

Adding the dimension of leading/lagging indicators

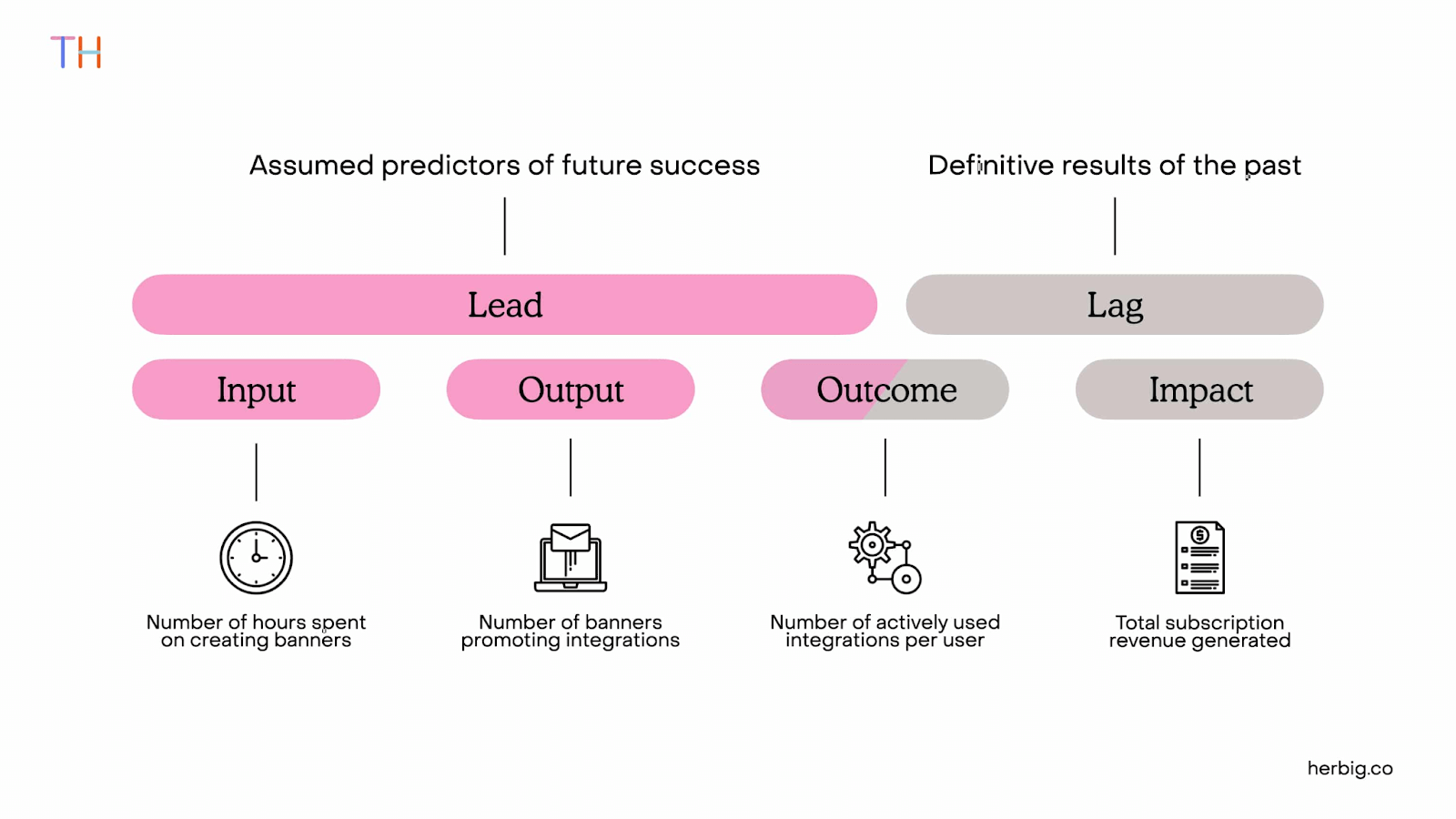

Categorising your OKRs like this will likely not be enough for actually working with them on a day-to-day basis. A second layer should indicate how likely or certain the intended change is. This can be captured with a concept that has been around for a while:

- Leading indicators: are more difficult to spot since they require insights from actual user behaviour. They are responsive to team actions, and they can most likely be used to predict future success, at the cost of some certainty.

- Lagging indicators: are easier to spot (e.g. user growth, revenue) but are often unresponsive and hard to change from a company perspective. They are also definitive results of the past.

⚠️ Be conscious of the difference between causation and correlation here: the more leading the indicator is, the less certain you can be that the action will succeed. “Outcomes over outputs” is generally a good idea, but not at all costs. Picking an outcome indicator just for the sake of it does not help you if it leaves you with a metric of a lagging nature.

⚠️ The nature of the metric depends heavily on the context of your company: how your business works, its business cadence, and whether and how often the metric changes.

Most common (underestimated) mistakes

The most common and risky mistake when using these techniques is to ignore a lack of clarity:

- Lack of clarity on the inputs for your OKR definition: You need to have your vision, strategy, customer insights, and roadmap in place to be able to estimate your next contribution to your success. The quality of your OKR inputs highly correlates with the quality of your OKR sets.

- Lack of clarity on the goals of your OKRs: Do you want to measure performance, enable more focus, or give the team more autonomy? This can have a huge impact on whether your OKR sets are output-driven or outcome-driven.

Using output-driven key results to establish a product discovery practice within the company

Output key results for product discovery are somewhat controversial since they are easy to misuse, but they can be helpful for teams that struggle with prioritizing discovery work and/or discussing its progress. Metrics like "number of winning prototypes" or "number of opt-ins to user testing" can help make discovery a more sustainable practice.

Besides regular (e.g. weekly, bi-weekly) OKR check-ins with the team, make sure to hold regular (e.g. quarterly) OKR retrospectives. Also make sure there is a process or a person responsible for feeding the learnings back to the management team, so that it can (potentially) reflect on the way the company measures success altogether.

In summary

In order to be able to assess your OKRs continuously and successfully…

- Keep in mind that there are no right or wrong OKRs, just different dimensions (with related consequences) that you as a team should be aware of

- Be aware of unclear aspects of your OKR inputs as well as the goals you want to achieve

- Consider using output-driven OKRs as a helpful tool to establish a product discovery practice

- Set up regular check-ins – at team and company level

Helpful resources written by Tim

- Tim’s article on how to measure the progress of OKRs using leading and lagging indicators: https://herbig.co/leading-lagging-indicators-okrs/ – also includes more information about reverse customer journey mapping

- Tim’s article on thinking beyond outcomes in OKRs: https://herbig.co/okrs-beyond-outcomes/ – helpful for teams that are still hesitant to start using "wrong" OKRs

- And if you’re curious, here is the recording of the workshop with all the details.